Primers • Python

- Python

- Python versions

- Python style guide

- Python notebooks: Jupyter and Colab

- The Zen of Python

- Indentation

- Code comments

- Variables

- Print function

- Input function

- Order of operations

- Basic data types

- Containers

- Functions

- Nested functions

- Type hinting

- Control flow

- Lambda functions

- Lambda function use-cases

- The pros and cons of lambda functions

- Misuse and overuse scenarios

- Misuse: naming lambda expressions

- Misuse: needless function calls

- Overuse: simple, but non-trivial functions

- Overuse: when multiple lines would help

- Overuse: lambda with map and filter

- Misuse: sometimes you don’t even need to pass a function

- Overuse: using lambda for very simple operations

- Overuse: when higher order functions add confusion

- The Iterator Protocol: How

forloops work in Python - Generators

- Decorators

- File I/O

- Magic methods

- Exceptions

- Modules

- Classes

- Selected built-ins

- References

- Further Reading

- Citation

Python

- Python is a high-level, dynamically typed multiparadigm programming language, created by Guido van Rossum in the early 90s. It is now one of the most popular languages in existence.

- Python’s syntactic clarity allows you to express very powerful ideas in very few lines of code while being very readable. It’s basically executable pseudocode!

-

As an example, here is an implementation of the classic Quicksort algorithm in Python:

def quicksort(arr): if len(arr) <= 1: return arr pivot = arr[len(arr) // 2] left = [x for x in arr if x < pivot] middle = [x for x in arr if x == pivot] right = [x for x in arr if x > pivot] return quicksort(left) + middle + quicksort(right) quicksort([3, 6, 8, 10, 1, 2, 1]) # Returns "[1, 1, 2, 3, 6, 8, 10]"

Note: This primer applies to Python 3 specifically. Check out the Python 2 primer if you want to learn about the (now old) Python 2.7.

Python versions

- As of Janurary 1, 2020, Python has officially dropped support for Python 2.

- If you haven’t experimented with Python at all and are just starting off, we recommend you begin with the latest version of Python 3.

- You can double-check your Python version at the command line after activating your environment by running

python --version.

Python style guide

- Google’s Python Style Guide is a fantastic resource with a list of dos and don’ts for formatting Python code that is commonly followed in the industry.

Python notebooks: Jupyter and Colab

- Before we dive into Python, we’d like to briefly talk about notebooks and creating virtual environments.

- If you’re looking to work on different projects, you’ll likely be utilizing different versions of Python modules. In this case, it is a good practice to have multiple virtual environments to work on different projects.

- Python Setup: Remote vs. Local offers an in-depth coverage of the various remote and local options available.

The Zen of Python

- The Zen of Python by Tim Peters are 19 guidelines for the design of the Python language. Your Python code doesn’t necessarily have to follow these guidelines, but they’re good to keep in mind. The Zen of Python is an Easter egg, or hidden joke, that appears if you run:

import this

- which outputs:

The Zen of Python, by Tim Peters

Beautiful is better than ugly.

Explicit is better than implicit.

Simple is better than complex.

Complex is better than complicated.

Flat is better than nested.

Sparse is better than dense.

Readability counts.

Special cases aren't special enough to break the rules.

Although practicality beats purity.

Errors should never pass silently.

Unless explicitly silenced.

In the face of ambiguity, refuse the temptation to guess.

There should be one-- and preferably only one --obvious way to do it.

Although that way may not be obvious at first unless you're Dutch.

Now is better than never.

Although never is often better than *right* now.

If the implementation is hard to explain, it's a bad idea.

If the implementation is easy to explain, it may be a good idea.

Namespaces are one honking great idea -- let's do more of those!

- For more on the coding principles above, refer to The Zen of Python, Explained.

Indentation

- Python is big on indentation! Where in other programming languages the indentation in code is to improve readability, Python uses indentation to indicate a block of code.

- The convention is to use four spaces, not tabs.

Code comments

- Python supports two types of comments: single-line and multi-line, as detailed below:

# Single line comments start with a number symbol.

""" Multiline strings can be written using three double quotes,

and are often used for documentation (hence called "docstrings").

They are also the closest concept to multi-line comments in other languages.

"""

Variables

- In Python, there are no declarations unlike C/C++; only assignments:

some_var = 5

some_var # Returns 5

- Accessing a previously unassigned variable leads to a

NameErrorexception:

some_unknown_var # Raises a NameError

- Variables are “names” in Python that simply refer to objects. This implies that you can make another variable point to an object by assigning it the original variable that was pointing to the same object.

a = [1, 2, 3] # Point "a" at a new list, [1, 2, 3, 4]

b = a # Point "b" at what "a" is pointing to

b += [4] # Extend the list pointed by "a" and "b" by adding "4" to it

a # Returns "[1, 2, 3, 4]"

b # Also returns "[1, 2, 3, 4]"

ischecks if two variables refer to the same object, while==checks if the objects that the variables point to have the same values:

a = [1, 2, 3, 4] # Point "a" at a new list, [1, 2, 3, 4]

b = a # Point "b" at what a is pointing to

b is a # Returns True, "a" and "b" refer to the *same* object

b == a # Returns True, the objects that "a" and "b" are pointing to are *equal*

b = [1, 2, 3, 4] # Point b at a new list, [1, 2, 3, 4]

b is a # Returns False, "a" and "b" do not refer to the *same* object

b == a # Returns True, the objects that "a" and "b" are pointing to are *equal*

- In Python, everything is an object. This means that even

None(which is used to denote that the variable doesn’t point to an object yet) is also an object!

# Don't use the equality "==" symbol to compare objects to None

# Use "is" instead. This checks for equality of object identity.

"etc" is None # Returns False

None is None # Returns True

- Python has local and global variables. Here’s an example of local vs. global variable scope:

x = 5

def set_x(num):

# Local var x not the same as global variable x

x = num # Returns 43

x # Returns 43

def set_global_x(num):

global x

x # Returns 5

x = num # global var x is now set to 6

x # Returns 6

set_x(43)

set_global_x(6)

Print function

- Python has a

printfunction:

print("I'm Python. Nice to meet you!") # Returns I'm Python. Nice to meet you!

- By default, the print function also prints out a newline at the end. Override the optional argument

endto modify this behavior:

print("Hello, World", end="!") # Returns Hello, World!

Input function

- Python offers a simple way to get input data from console:

input_string_var = input("Enter some data: ") # Returns the data as a string

- Note: In earlier versions of Python,

input()was named asraw_input().

Order of operations

- Just like mathematical operations in other languages, Python uses the BODMAS rule (also called the PEMDAS rule) to ascertain operator precedence. BODMAS is an acronym and it stands for Bracket, Of, Division, Multiplication, Addition, and Subtraction.

1 + 3 * 2 # Returns 7

- To bypass BODMAS, enforce precedence with parentheses:

(1 + 3) * 2 # Returns 8

Basic data types

- Like most languages, Python has a number of basic types including integers, floats, booleans, and strings.

Numbers

- Integers work as you would expect from other languages:

x = 3

x # Prints "3"

type(x) # Prints "<class 'int'>"

x + 1 # Addition; returns "4"

x - 1 # Subtraction; returns "2"

x * 2 # Multiplication; returns "6"

x ** 2 # Exponentiation; returns "9"

x += 1 # Returns "4"

x *= 2 # Returns "8"

x % 4 # Modulo operation; returns "3"

- Floats also behave similar to other languages:

y = 2.5

type(y) # Returns "<class 'float'>"

y, y + 1, y * 2, y ** 2 # Returns "(2.5, 3.5, 5.0, 6.25)"

- Some nuances in integer/float division that you should take note of:

3 / 2 # Float division in Python 3, returns "1.5"; integer division in Python 2, returns "1"

3 // 2 # Integer division in both Python 2 and 3, returns "1"

10.0 / 3 # Float division in both Python 2 and 3, returns "3.33.."

# Integer division rounds down for both positive and negative numbers

-5 // 3 # -2

5.0 // 3.0 # 1.0

-5.0 // 3.0 # -2.0

- Note that unlike many languages, Python does not have unary increment (

x++) or decrement (x--) operators, but accepts the+=and-=operators. - Python also has built-in types for complex numbers; you can find all of the details in the Python documentation.

Use Underscores to Format Large Numbers

-

When working with a large number in Python, it can be difficult to figure out how many digits that number has. Python 3.6 and above allows you to use underscores as visual separators to group digits.

-

In the example below, underscores are used to group decimal numbers by thousands.

large_num = 1_000_000

large_num # Returns 1000000

Booleans

- Python implements all of the usual operators for Boolean logic, but uses English words rather than symbols (

&&,||, etc.):

t = True

f = False

type(t) # Returns "<class 'bool'>"

t and f # Logical AND; returns "False"

t or f # Logical OR; returns "True"

not t # Logical NOT; returns "False"

t != f # Logical XOR; returns "True"

- The numerical value of

TrueandFalseis1and0:

True + True # Returns 2

True * 8 # Returns 8

False - 5 # Returns -5

- Comparison operators look at the numerical value of

TrueandFalse:

0 == False # Returns True

1 == True # Returns True

2 == True # Returns False

-5 != False # Returns True

None,0, and empty strings/lists/dicts/tuples all evaluate toFalse. All other values areTrue.

bool(0) # Returns False

bool("") # Returns False

bool([]) # Returns False

bool({}) # Returns False

bool(()) # Returns False

- Equality comparisons yield boolean outputs:

# Equality is ==

1 == 1 # Returns True

2 == 1 # Returns False

# Inequality is !=

1 != 1 # Returns False

2 != 1 # Returns True

# More comparisons

1 < 10 # Returns True

1 > 10 # Returns False

2 <= 2 # Returns True

2 >= 2 # Returns True

# Seeing whether a value is in a range

1 < 2 and 2 < 3 # Returns True

2 < 3 and 3 < 2 # Returns False

# Chaining makes the above look nicer

1 < 2 < 3 # Returns True

2 < 3 < 2 # Returns False

- Casting integers as booleans transforms a non-zero integer to

True, while zeros get transformed toFalse:

bool(0) # Returns False

bool(4) # Returns True

bool(-6) # Returns True

- Using logical operators with integers casts them to booleans for evaluation, using the same rules as mentioned above. However, note that the original pre-cast value is returned.

0 and 2 # Returns 0

-5 or 0 # Returns -5

Strings

- Python has great support for strings:

hello = 'hello' # String literals can use single quotes

world = "world" # or double quotes; it does not matter.

# But note that you can nest one in another, for e.g., 'a"x"b' and "a'x'b"

print(hello) # Prints "hello"

len(hello) # String length; returns "5"

hello[0] # A string can be treated like a list of characters, returns 'h'

hello + ' ' + world # String concatenation using '+', returns "hello world"

"hello " "world" # String literals (but not variables) can be concatenated without using '+', returns "hello world"

'%s %s %d' % (hello, world, 12) # sprintf style string formatting, returns "hello world 12"

- String objects have a bunch of useful methods; for example:

s = "hello"

s.capitalize() # Capitalize a string; returns "Hello"

s.upper() # Convert a string to uppercase; prints "HELLO"

s.rjust(7) # Right-justify a string, padding with spaces; returns " hello"

s.center(7) # Center a string, padding with spaces; returns " hello "

s.replace('l', '(ell)') # Replace all instances of one substring with another;

# returns "he(ell)(ell)o"

' world '.strip() # Strip leading and trailing whitespace; returns "world"

- You can find a list of all string methods in the Python documentation.

String formatting

- Python has several different ways of formatting strings. Simple positional formatting is probably the most common use-case. Use it if the order of your arguments is not likely to change and you only have very few elements you want to concatenate. Since the elements are not represented by something as descriptive as a name this simple style should only be used to format a relatively small number of elements.

- More on string formatting [here].

Old style/pre-Python 2.6

- The old style uses

'' % <tuple>as follows:

'%s %s' % ('one', 'two') # Returns 'one two'

'%d %d' % (1, 2) # Returns '1 2'

New style/Python 2.6

- The new style uses

''.format()as follows:

'{} {}'.format('one', 'two') # Returns 'one two'

'{} {}'.format(1, 2) # Returns '1 2'

- Note that both the old and new style of formatting are still compatible with the newest releases of Python, which is version 3.8 at the time of writing.

- With the new style formatting, you can give placeholders an explicit positional index (called positional arguments). This allows for re-arranging the order of display without changing the arguments. This operation is not available with old-style formatting.

'{1} {0}'.format('one', 'two') # Returns 'two one'

- For the example

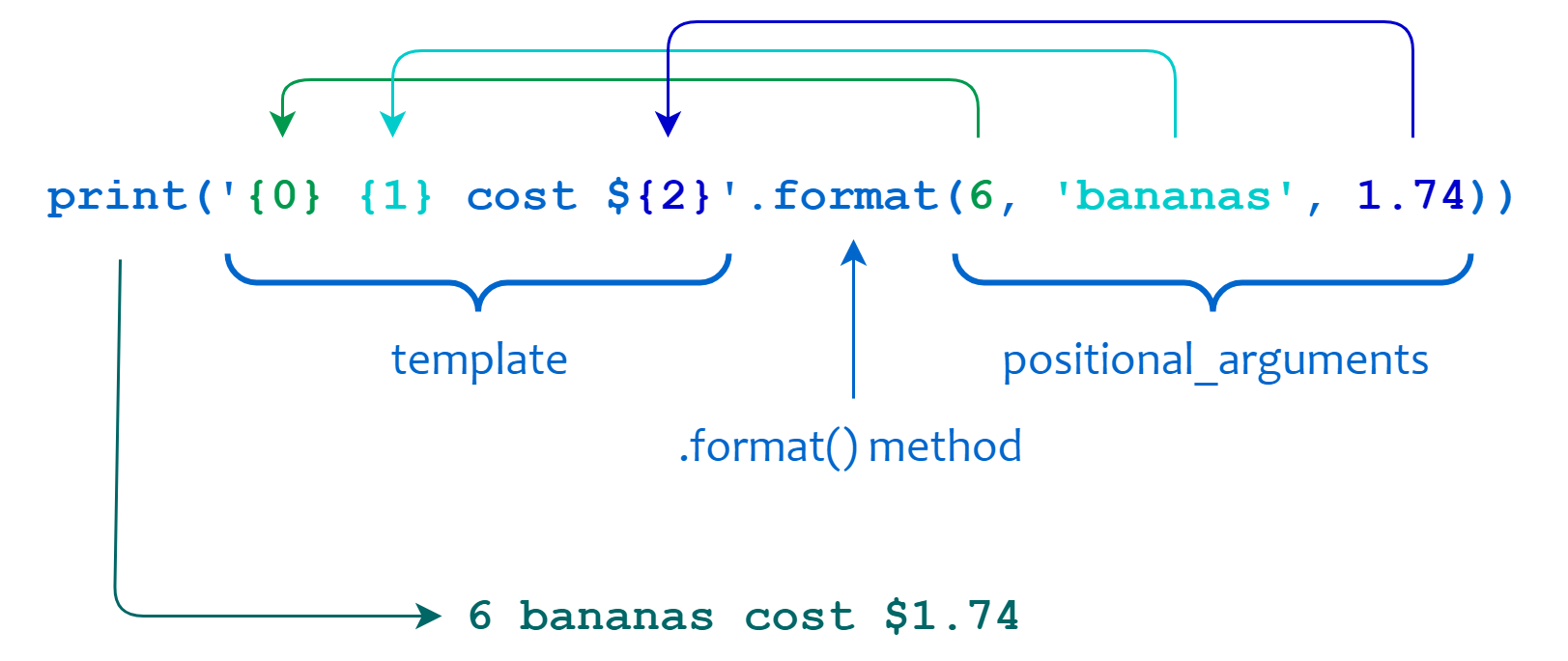

print('{0} {1} cost ${2}'.format(6, 'bananas', 1.74)), the output is6 bananas cost $1.74, as explained below:

- You can also use keyword arguments instead of positional parameters to produce the same result. This is called keyword arguments.

'{first} {second}'.format(first='one', second='two') # Returns 'one two'

- For the example

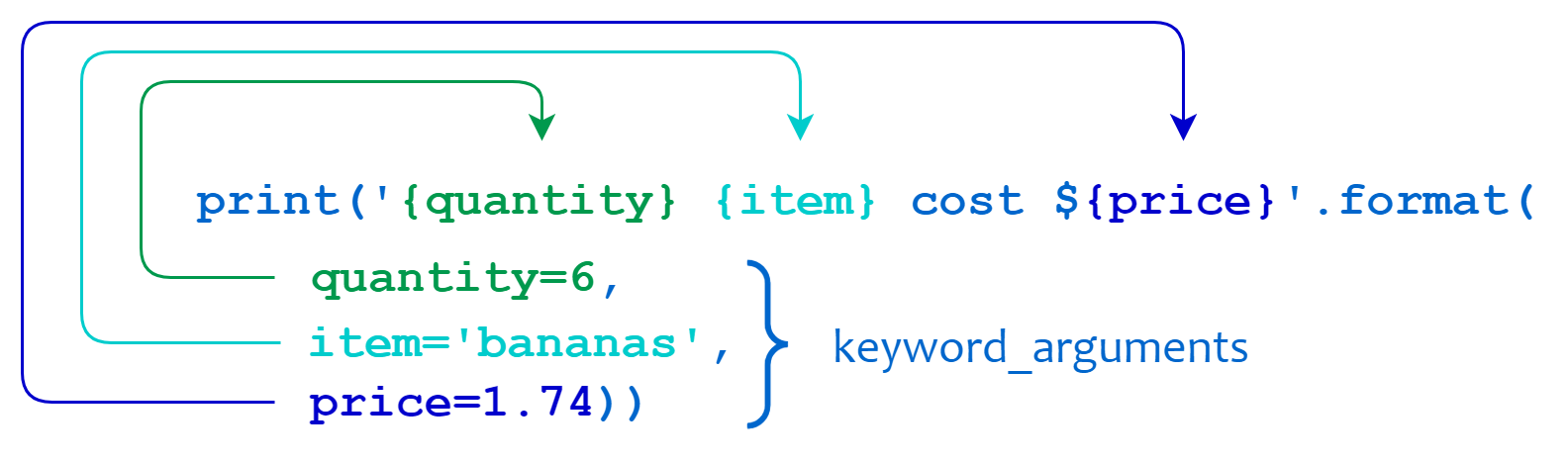

print('{quantity} {item} cost ${price}'.format(quantity=6, item='bananas', price=1.74)), the output is6 bananas cost $1.74, as explained below:

f-strings

- Starting Python 3.6, you can also format strings using f-string literals, which are much more powerful than the old/new string formatters we discussed earlier:

name = "Reiko"

f"She said her name is {name}." # Returns "She said her name is Reiko."

# You can basically put any Python statement inside the braces and it will be output in the string.

f"{name} is {len(name)} characters long." # Returns "Reiko is 5 characters long."

Padding and aligning strings

- By default, values are formatted to take up only as many characters as needed to represent the content. It is however also possible to define that a value should be padded to a specific length.

-

Unfortunately the default alignment differs between old and new style formatting. The old style defaults to right aligned while the new style is left aligned.

- To align text right:

'%10s' % ('test',) # Returns " test"

'{:>10}'.format('test') # Returns " test"

- To align text left:

'%-10s' % ('test',) # Returns "test "

'{:10}'.format('test') # Returns "test "

'{:<10}'.format('test') # Returns "test "

- Again, the new style formatting surpasses the old variant by providing more control over how values are padded and aligned. You are able to choose the padding character and override the default space character for padding. This operation is not available with old-style formatting.

'{:_<10}'.format('test') # Returns "test______"

'{:0<10}'.format('test') # Returns "test000000"

- And also center align values. This operation is not available with old-style formatting.

'{:^10}'.format('test') # Returns " test "

- When using center alignment where the length of the string leads to an uneven split of the padding characters the extra character will be placed on the right side. This operation is not available with old-style formatting.

'{:^6}'.format('zip') # Returns " zip "

- You can also combine the field numbering (say,

{0}for the first argument) specification with the format type (say,{:s}for strings):

'{2:s}, {1:s}, {0:s}'.format('test1', 'test2', 'test3') # Returns "test3, test2, test1"

'{2}, {1}, {0}'.format('test1', 'test2', 'test3') # Returns "test3, test2, test1"

- Unpacking arguments:

'{2}, {1}, {0}'.format(*'abc') # Returns "c, b, a"

- Unpacking arguments by name:

'Coordinates: {latitude}, {longitude}'.format(latitude='37.24N', longitude='-115.81W') # Returns "Coordinates: 37.24N, -115.81W"

coord = {'latitude': '37.24N', 'longitude': '-115.81W'}

'Coordinates: {latitude}, {longitude}'.format(**coord) # Returns 'Coordinates: 37.24N, -115.81W'

- f-strings can also be formatted similarly:

- As an example:

test2 = "test2" test1 = "test1" test0 = "test0" f'{test2:10.3}, {test1:5.2}, {test0:2.1}' # Returns "tes , te , t " - Yet another one:

s1 = 'a' s2 = 'ab' s3 = 'abc' s4 = 'abcd' print(f'{s1:>10}') # Prints a print(f'{s2:>10}') # Prints ab print(f'{s3:>10}') # Prints abc print(f'{s4:>10}') # Prints abcd

- As an example:

Truncating long strings

- Inverse to padding it is also possible to truncate overly long values to a specific number of characters. The number behind the

.in the format specifies the precision of the output. For strings that means that the output is truncated to the specified length. In our example this would be 5 characters.

'%.5s' % ('xylophone',) # Returns "xylop"

'{:.5}'.format('xylophone') # Returns "xylop"

Combining truncating and padding

- It is also possible to combine truncating and padding:

'%-10.5s' % ('xylophone',) # Returns "xylop "

'{:10.5}'.format('xylophone') # Returns "xylop "

Numbers

-

Of course it is also possible to format numbers.

-

Integers:

'%d' % (42,) # Returns "42"

'{:d}'.format(42) # Returns "42"

- Floats:

'%f' % (3.141592653589793,) # Returns "3.141593"

'{:f}'.format(3.141592653589793) # Returns "3.141593"

Padding numbers

- Similar to strings numbers can also be constrained to a specific width.

'%4d' % (42,) # Returns " 42"

'{:4d}'.format(42) # Returns " 42"

- Again similar to truncating strings the precision for floating point numbers limits the number of positions after the decimal point. For floating points, the padding value represents the length of the complete output (including the decimal). In the example below we want our output to have at least 6 characters with 2 after the decimal point.

'%06.2f' % (3.141592653589793,) # Returns "003.14"

'{:06.2f}'.format(3.141592653589793) # Returns "003.14"

- For integer values providing a precision doesn’t make much sense and is actually forbidden in the new style (it will result in a

ValueError).

'%04d' % (42,) # Returns "0042"

'{:04d}'.format(42) # Returns "0042"

- Some examples:

- Specify a sign for floats:

- Show the sign always:

'{:+f}; {:+f}'.format(3.14, -3.14) # Returns "+3.140000; -3.140000" - Show a space for positive numbers, but a sign for negative numbers:

'{: f}; {: f}'.format(3.14, -3.14) # Returns " 3.140000; -3.140000" - Show only the minus – same as

{:f}; {:f}:'{:-f}; {:-f}'.format(3.14, -3.14) # Returns "3.140000; -3.140000"

- Show the sign always:

- Same for ints:

- Show the sign always:

'{:+d}; {:+d}'.format(3, -3) # Returns "+3; -3" - Show a space for positive numbers, but a sign for negative numbers:

'{: d}; {: d}'.format(3, -3) # Returns " 3; -3"

- Show the sign always:

- Converting the value to different bases using replacing

{:d},{:x}and{:o}:- Note that format also supports binary numbers:

"int: {0:d}; hex: {0:x}; oct: {0:o}; bin: {0:b}".format(42) # Returns "int: 42; hex: 2a; oct: 52; bin: 101010'" - With

0x,0o, or0bas prefix:"int: {0:d}; hex: {0:#x}; oct: {0:#o}; bin: {0:#b}".format(42) # Returns "int: 42; hex: 0x2a; oct: 0o52; bin: 0b101010"

- Note that format also supports binary numbers:

- Using the comma as a thousands separator:

'{:,}'.format(1234567890) # Returns "1,234,567,890" - Expressing a percentage:

points = 19 total = 22 'Correct answers: {:.2%}'.format(points/total) # Returns "Correct answers: 86.36%" - Using type-specific formatting:

import datetime d = datetime.datetime(2010, 7, 4, 12, 15, 58) '{:%Y-%m-%d %H:%M:%S}'.format(d) # Returns "2010-07-04 12:15:58" - Nesting arguments and more complex examples:

for align, text in zip('<^>', ['left', 'center', 'right']): '{0:{fill}{align}16}'.format(text, fill=align, align=align) # Returns: # 'left<<<<<<<<<<<<' # '^^^^^center^^^^^' # '>>>>>>>>>>>right' width = 5 for num in range(5,12): for base in 'dXob': print('{0:{width}{base}}'.format(num, base=base, width=width), end=' ') print() # Prints: # 5 5 5 101 # 6 6 6 110 # 7 7 7 111 # 8 8 10 1000 # 9 9 11 1001 # 10 A 12 1010 # 11 B 13 1011

- Specify a sign for floats:

- f-strings can also be formatted similarly:

- Format floats:

val = 12.3 f'{val:.2f}' # Returns 12.30 f'{val:.5f}' # Returns 12.30000 - Format width:

for x in range(1, 11): print(f'{x:02} {x*x:3} {x*x*x:4}') # Prints: # 01 1 1 # 02 4 8 # 03 9 27 # 04 16 64 # 05 25 125 # 06 36 216 # 07 49 343 # 08 64 512 # 09 81 729 # 10 100 1000

- Format floats:

Index of a Substring in a Python String

- If you want to find the index of a substring in a string, use the

str.find()method which returns the index of the first occurrence of the substring if found and-1otherwise.

sentence = "Today is Saturaday"

- Find the index of first occurrence of the substring:

sentence.find("day") # Returns 2

sentence.find("nice") # Returns -1

- You can also provide the starting and stopping position of the search:

# Start searching for the substring at index 3

sentence.find("day", 3) # Returns 15

- Note that you can also use

str.index()to accomplish the same end result.

Replace One String with Another String Using Regular Expressions

-

If you want to either replace one string with another string or to change the order of characters in a string, use

re.sub(). -

re.sub()allows you to use a regular expression to specify the pattern of the string you want to swap. -

In the code below, we replace 3/7/2021 with Sunday and replace 3/7/2021 with 2021/3/7.

import re

text = "Today is 3/7/2021"

match_pattern = r"(\d+)/(\d+)/(\d+)"

re.sub(match_pattern, "Sunday", text) # Returns 'Today is Sunday'

re.sub(match_pattern, r"\3-\1-\2", text) # Returns 'Today is 2021-3-7'

Containers

- Containers are any object that holds an arbitrary number of other objects. Generally, containers provide a way to access the contained objects and to iterate over them.

- Python includes several built-in container types: lists, dictionaries, sets, and tuples:

from collections import Container # Can also use "from typing import Sequence"

isinstance(list(), Container) # Prints True

isinstance(tuple(), Container) # Prints True

isinstance(set(), Container) # Prints True

isinstance(dict(), Container) # Prints True

# Note that the "dict" datatype is also a mapping datatype (along with being a container):

isinstance(dict(), collections.Mapping) # Prints True

Lists

- A list is the Python equivalent of an array, but is resizable and can contain elements of different types:

l = [3, 1, 2] # Create a list

l[0] # Access a list like you would any array; returns "1"

l[4] # Looking out-of-bounds is an IndexError; Raises an "IndexError"

l[::-1] # Return list in reverse order "[2, 1, 3]"

l, l[2] # Returns "([3, 1, 2] 2)"

l[-1] # Negative indices count from the end of the list; prints "2"

l[2] = 'foo' # Lists can contain elements of different types

l # Prints "[3, 1, 'foo']"

l.append('bar') # Add a new element to the end of the list

l # Prints "[3, 1, 'foo', 'bar']"

x = l.pop() # Remove and return the last element of the list

x, l # Prints "bar [3, 1, 'foo']"

- Lists be “unpacked” into variables:

a, b, c = [1, 2, 3] # a is now 1, b is now 2 and c is now 3

# You can also do extended unpacking

a, *b, c = [1, 2, 3, 4] # a is now 1, b is now [2, 3] and c is now 4

# Now look how easy it is to swap two values

b, a = [a, b # a is now [2, 3] and b is now 1

- As usual, you can find all the gory details about lists in the Python documentation.

Iterate over a list

- You can loop over the elements of a list like this:

animals = ['cat', 'dog', 'monkey']

for animal in animals:

print(animal)

# Prints "cat", "dog", "monkey", each on its own line.

- If you want access to the index of each element within the body of a loop, use the built-in

enumeratefunction:

animals = ['cat', 'dog', 'monkey']

for idx, animal in enumerate(animals):

print('#%d: %s' % (idx + 1, animal))

# Prints "#1: cat", "#2: dog", "#3: monkey", each on its own line

List comprehensions

- List comprehensions are a tool for transforming one list (any iterable actually) into another list. During this transformation, elements can be conditionally included in the new list and each element can be transformed as needed.

From loops to comprehensions

- Every list comprehension can be rewritten as a

forloop but not everyforloop can be rewritten as a list comprehension. - The key to understanding when to use list comprehensions is to practice identifying problems that smell like list comprehensions.

- If you can rewrite your code to look just like this

forloop, you can also rewrite it as a list comprehension:

new_things = []

for item in old_things:

if condition_based_on(item):

new_things.append("something with " + item)

- You can rewrite the above

forloop as a list comprehension like this:

new_things = ["something with " + item for item in old_things if condition_based_on(item)]

- Thus, we can go from a

forloop into a list comprehension by simply:- Copying the variable assignment for our new empty list.

- Copying the expression that we’ve been

append-ing into this new list. - Copying the

forloop line, excluding the final:. - Copying the

ifstatement line, also without the:.

- As a simple example, consider the following code that computes square numbers:

nums = [0, 1, 2, 3, 4]

squares = []

for x in nums:

if x % 2 == 0

squares.append(x ** 2)

squares # Returns [0, 4, 16]

- You can make this code simpler using a list comprehension:

nums = [0, 1, 2, 3, 4]

[x ** 2 for x in nums if x % 2 == 0] # Returns [0, 4, 16]

- List comprehensions do not necessarily need to contain the

ifconditional clause:

nums = [0, 1, 2, 3, 4]

[x ** 2 for x in nums] # Returns [0, 1, 4, 9, 16]

- You can also use

if/elsein a list comprehension. Note that this actually uses a different language construct, a conditional expression, which itself is not part of the comprehension syntax, while theifafter thefor…inis part of the list comprehension syntax.

nums = [0, 1, 2, 3, 4]

# Create a list with x ** 2 if x is odd else 0, for each element in x

[x ** 2 if x % 2 else 0 for x in nums] # Returns [0, 1, 0, 9, 0]

- List comprehensions can emulate

mapand/orfilteras follows:

[someFunc(i) for i in [1, 2, 3]] # Returns [11, 12, 13]

[x for x in [3, 4, 5, 6, 7] if x > 5] # Returns [6, 7]

- On the other hand, list comprehensions can be equivalently written using a combination of the

listconstructor, and/ormapand/orfilter:

list(map(lambda x: x + 10, [1, 2, 3])) # Returns [11, 12, 13]

list(map(max, [1, 2, 3], [4, 2, 1])) # Returns [4, 2, 3]

list(filter(lambda x: x > 5, [3, 4, 5, 6, 7])) # Returns [6, 7]

Nested Loops

- In this section, we’ll tackle list comprehensions with nested looping.

- Here’s a

forloop that flattens a matrix (a list of lists):

flattened = []

for row in matrix:

for n in row:

flattened.append(n)

- And here’s a list comprehension that does the same thing:

flattened = [n for row in matrix for n in row]

- Nested loops in list comprehensions do not read like English prose. A common pitfalls is to read this list comprehension as:

flattened = [n for n in row for row in matrix]

- But that’s not right! We’ve mistakenly flipped the

forloops here. The correct version is the one above. - When working with nested loops in list comprehensions remember that the

forclauses remain in the same order as in our originalforloops.

Slicing

- In addition to accessing list elements one at a time, Python provides concise syntax to access sublists; this is known as slicing:

nums = list(range(5)) # range is a built-in function that creates a list of integers

nums # Returns "[0, 1, 2, 3, 4]"

nums[2:4] # Get a slice from index 2 to 4 (exclusive); returns "[2, 3]"

nums[2:] # Get a slice from index 2 to the end; returns "[2, 3, 4]"

nums[:2] # Get a slice from the start to index 2 (exclusive); returns "[0, 1]"

nums[:] # Get a slice of the whole list; returns "[0, 1, 2, 3, 4]"

nums[:-1] # Slice indices can be negative; returns "[0, 1, 2, 3]"

- Assigning to a slice (even with a source of different length) is possible since lists are mutable:

# Case 1: source of the same length

nums1 = [1, 2, 3]

nums1[1:] = [4, 5] # Assign a new sublist to a slice

nums1 # Returns "[1, 4, 5]"

# Case 2: source of different length

nums2 = nums1

nums2[1:] = [6] # Assign a new sublist to a slice

nums2 # Returns "[1, 6]"

id(nums1) == id(nums2) # Returns True since lists are mutable, i.e., can be changed in-place

- Similar to tuples, when evaluating a range over list indices (something of the form

[x:y]wherexandyare some indices into the list), if our right-hand value exceeds the list length, Python simply returns the items from the list up until the value goes out of index range.

a = [1, 2, 3] # Max addressable index: 2

a[:3] # Does NOT return an error, instead returns [1, 2, 3]

List functions

l = [1, 2, 3]

l_copy = l[:] # Make a one layer deep copy of l into l_copy

# Note that "l_copy is l" will result in False after this operation.

# This is similar to using the "copy()" method, i.e., l_copy = l.copy()

del l_copy[2] # Remove arbitrary elements from a list with "del"; l_copy is now [1, 2]

l.remove(3) # Remove first occurrence of a value; l is now [1, 2]

# l.remove(2) # Raises a ValueError as 2 is not in the list

l.insert(2, 3) # Insert an element at a specific index; l is now [1, 2, 3].

# Note that l.insert(n, 3) would return the same output, where n >= len(l),

# for example, l.insert(3, 3).

l.index(3) # Get the index of the first item found matching the argument; returns 3

# l.index(4) # Raises a ValueError as 4 is not in the list

l_copy += [3] # This is similar to using the "extend()" method

l + l_copy # Concatenate two lists; returns [1, 2, 3, 1, 2, 3]

# Again, this is similar to using the "extend()" method; with the only

# difference being that "list.extend()" carries out the operation in place,

# while '+' creates a new list object (and doesn't modify "l" and "l_copy").

l.append(l_copy) # You can append lists using the "append()" method; returns [1, 2, 3, [1, 2, 3]]

1 in l # Check for existence (also called "membership check") in a list with "in"; returns True

len(l) # Examine the length with "len()"; returns 4

- List concatenation using

.extend()can be achieved using the in-place addition operator,+=.

# Extending a list with another iterable (in this case, a list)

l = [1, 2, 3]

l += [4, 5, 6] # Returns "[1, 2, 3, 4, 5, 6]"

# Note that l += 4, 5, 6 works as well since the source argument on the right is already an iterable (in this case, a tuple)

l += [[7, 8, 9]] # Returns "[1, 2, 3, 4, 5, 6, [7, 8, 9]]"

# For slicing use-cases

l[1:] = [10] # Returns "[1, 10]"

- Instead of needing to create an explicit list using the source argument (on the right), as a hack, you can simply use a trailing comma to create a tuple out of the source argument (and thus imitate the above functionality):

# Extending a list with another iterable (in this case, a list)

l = [1, 2, 3]

l += 4 # TypeError: 'int' object is not iterable

l += 4, # Equivalent to l += (4,); same effect as l += [4]; returns "[1, 2, 3, 4]"

l += [5, 6, 7], # Returns "[1, 2, 3, 4, [5, 6, 7]]"

# For slicing use-cases

l[1:] = 10, # Equivalent to l[1:] = (10,); same effect as l[1:] = [10]; returns "[1, 10]"

Dictionaries

- A dictionary stores

(key, value)pairs, similar to aMapin Java or an object in Javascript. In other words, dictionaries store mappings from keys to values. - You can use it like this:

d = {"one": 1, "two": 2, "three": 3} # Create a new dictionary with some data

d['one'] # Lookup values in the dictionary using "[]"; returns "1"

'two' in d # Check if a dictionary has a given key; returns "True"

d['four'] = 4 # Set an entry in a dictionary

d['four'] # Returns "4"

- You can find all you need to know about dictionaries in the Python documentation.

Accessing a non-existent key

d = {"one": 1, "two": 2, "three": 3} # Create a new dictionary with some data

print(d["four"]) # Returns "KeyError: 'four' not a key of d"

- In the above snippet,

fourdoes not exist ind. We get aKeyErrorwhen we try to accessd[four]. As a result, in many situations, we need to check if the key exists in a dictionary before we try to access it.

<dict>.get()

- Use the

get()method to avoid the KeyError:

d.get("four") # Returns "4"

d.get("five") # Returns None

- The

get()method supports a default argument which is returned when the key being queried is missing:

d.get("five", 'N/A') # Get an element with a default; returns "N/A"

d.get("four", 'N/A') # Get an element with a default; returns "4"

- A good use-case for

get()is getting values in a nested dictionary with missing keys where it can be challenging to use a conditional statement:

fruits = [

{"name": "apple", "attr": {"color": "red", "taste": "sweet"}},

{"name": "orange", "attr": {"taste": "sour"}},

{"name": "grape", "attr": {"color": "purple"}},

{"name": "banana"},

]

colors = [fruit["attr"]["color"]

if "attr" in fruit and "color" in fruit["attr"] else "unknown"

for fruit in fruits]

colors # Returns ['red', 'unknown', 'purple', 'unknown']

- In contrast, a better way is to use the

get()method twice like below. The first get method will return an empty dictionary if the keyattrdoesn’t exist. The secondget()method will return unknown if the key color doesn’t exist.

colors = [fruit.get("attr", {}).get("color", "unknown") for fruit in fruits]

colors # Returns ['red', 'unknown', 'purple', 'unknown']

defaultdict

- We can also use

collections.defaultdictwhich returns a default value without having to specify one during every dictionary lookup.defaultdictoperates in the same way as a dictionary in nearly all aspects except for handling missing keys. When accessing keys that don’t exist,defaultdictautomatically sets a default value for them. The factory function to create the default value can be passed in the constructor ofdefaultdict.

from collections import defaultdict

# create an empty defaultdict with default value equal to []

default_list_d = defaultdict(list)

default_list_d['a'].append(1) # default_list_d is now {"a": [1]}

# create a defaultdict with default value equal to 0

default_int_d = defaultdict(int)

default_int_d['c'] += 1 # default_int_d is now {"c": 1}

- We can also pass in a lambda as the factory function to return custom default values. Let’s say for our default value we return the tuple

(0, 0).

pair_dict = defaultdict(lambda: (0,0))

print(pair_dict['point'] == (0,0)) # prints True

- Using a

defaultdictcan help reduce the clutter in your code, speeding up your implementation.

Key membership check

- We can easily check if a key is in a dictionary with

in:

d = {"one": 1, "two": 2, "three": 3} # Create a new dictionary with some data

doesOneExist = "one" in d # Return True

Iterating over keys

- Python also provides a nice way of iterating over the keys inside a dictionary. However, when you are iterating over keys in this manner, remember that you cannot add new keys or delete any existing keys, as this will result in an

RuntimeError.

d = {"a": 0, "b": 5, "c": 6, "d": 7, "e": 11, "f": 19}

# iterate over each key in d

for key in d:

print(d[key]) # This is OK

del d[key] # Raises a "RuntimeError: dictionary changed size during iteration"

d[1] = 0 # Raises a "RuntimeError: dictionary changed size during iteration"

del

- Remove keys from a dictionary with the

deloperator:

d = {"one": 1, "two": 2, "three": 3} # Create a new dictionary with some data

del d["one"] # Delete key "numbers" of d

d.get("four", "N/A") # "four" is no longer a key; returns "N/A"

Key datatypes

- Note that as we saw in the section on tuples, keys for dictionaries have to be immutable datatypes, such as ints, floats, strings, tuples, etc. This is to ensure that the key can be converted to a constant hash value for quick look-ups.

invalid_dict = {[1, 2, 3]: "123"} # Raises a "TypeError: unhashable type: 'list'"

valid_dict = {(1, 2, 3): [1, 2, 3]} # Values can be of any type, however.

- Get all keys as an iterable with

keys(). Note that we need to wrap the call inlist()to turn it into a list, as seen in the putting it all together section on iterators. Note that for Python versions <3.7, dictionary key ordering is not guaranteed, which is why your results might not match the example below exactly. However, as of Python 3.7, dictionary items maintain the order with which they are inserted into the dictionary.

list(filled_dict.keys()) # Can returns ["three", "two", "one"] in Python <3.7

list(filled_dict.keys()) # Returns ["one", "two", "three"] in Python 3.7+

- Get all values as an iterable with

values(). Once again we need to wrap it inlist()to convert the iterable into a list by generating the entire list at once. Note that the discussion above regarding key ordering holds below as well.

list(filled_dict.values()) # Returns [3, 2, 1] in Python <3.7

list(filled_dict.values()) # Returns [1, 2, 3] in Python 3.7+

Iterate over a dictionary

- It is easy to iterate over the keys in a dictionary:

d = {'person': 2, 'cat': 4, 'spider': 8}

for animal in d:

legs = d[animal]

print('A %s has %d legs' % (animal, legs))

# Prints "A person has 2 legs", "A cat has 4 legs", "A spider has 8 legs"

- If you want access to keys and their corresponding values, use the

itemsmethod:

d = {'person': 2, 'cat': 4, 'spider': 8}

for animal, legs in d.items():

print('A %s has %d legs' % (animal, legs))

# Prints "A person has 2 legs", "A cat has 4 legs", "A spider has 8 legs"

Dictionary comprehensions

- These are similar to list comprehensions, but allow you to easily construct dictionaries.

- As an example, consider a

forloop that makes a new dictionary by swapping the keys and values of the original one:

flipped = {}

for key, value in original.items():

flipped[value] = key

- That same code written as a dictionary comprehension:

flipped = {value: key for key, value in original.items()}

- As another example:

nums = [0, 1, 2, 3, 4]

even_num_to_square = {x: x ** 2 for x in nums if x % 2 == 0}

print(even_num_to_square) # Prints "{0: 0, 2: 4, 4: 16}"

- Yet another example:

{x: x**2 for x in range(5)} # Returns {0: 0, 1: 1, 2: 4, 3: 9, 4: 16}

Sets

- A set is an unordered collection of distinct elements. In other words, sets do not allows duplicates and thus lend themselves for uses-cases involving retaining unique elements (and removing duplicates) canonically. As a simple example, consider the following:

animals = {'cat', 'dog'} # Note the syntax similarity to a dict.

'cat' in animals # Check if an element is in a set; prints "True"

'fish' in animals # Returns "False"

animals.add('fish') # Add an element to a set

'fish' in animals # Returns "True"

len(animals) # Number of elements in a set; returns "3"

animals.add('cat') # Adding an element that is already in the set does nothing

len(animals) # Returns "3"

animals.remove('cat') # Remove an element from a set

len(animals) # Returns "2"

animals # Returns "{'fish', 'dog'}"

- You can start with an empty set and build it up:

animals = set()

animals.add('fish', 'dog') # Returns "{'fish', 'dog'}"

- Similar to keys of a dictionary, elements of a set have to be immutable:

invalid_set = {[1], 1} # Raises a "TypeError: unhashable type: 'list'"

valid_set = {(1,), 1}

- Make a one layer deep copy using the

copymethod:

s = {1, 2, 3}

s1 = s.copy() # s is {1, 2, 3}

s1 is s # Returns False

Set operations

# Do set intersection with &

other_set = {3, 4, 5, 6}

filled_set & other_set # Returns {3, 4, 5}

# Do set union with |

filled_set | other_set # Returns {1, 2, 3, 4, 5, 6}

# Do set difference with -

{1, 2, 3, 4} - {2, 3, 5} # Returns {1, 4}

# Do set symmetric difference with ^

{1, 2, 3, 4} ^ {2, 3, 5} # Returns {1, 4, 5}

# Check if set on the left is a superset of set on the right

{1, 2} >= {1, 2, 3} # Returns False

# Check if set on the left is a subset of set on the right

{1, 2} <= {1, 2, 3} # Returns True

- As usual, everything you want to know about sets can be found in the Python documentation.

Iterating over a set

- Iterating over a set has the same syntax as iterating over a list; however since sets are unordered, you cannot make assumptions about the order in which you visit the elements of the set:

animals = {'cat', 'dog', 'fish'}

for idx, animal in enumerate(animals):

print('#%d: %s' % (idx + 1, animal))

# Prints "#1: fish", "#2: dog", "#3: cat"

Set comprehensions

- Like lists and dictionaries, we can easily construct sets using set comprehensions.

- As an example, consider a

forloop that creates a set of all the first letters in a sequence of words:

first_letters = set()

for w in words:

first_letters.add(w[0])

- That same code written as a set comprehension:

first_letters = {w[0] for w in words}

- As another example:

from math import sqrt

nums = {int(sqrt(x)) for x in range(30)}

print(nums) # Prints "{0, 1, 2, 3, 4, 5}"

- Yet another example:

{x for x in 'abcddeef' if x not in 'abc'} # Returns {'d', 'e', 'f'}

Tuples

- A tuple is an immutable ordered list of values.

t = (1, 2, 3)

t[0] # Returns "1"

t[0] = 3 # Raises a "TypeError: 'tuple' object does not support item assignment"

- Note that syntactically, a tuple of length one has to have a comma after the last element but tuples of other lengths, even zero, do not:

type((1)) # Returns <class 'int'>

type((1,)) # Returns <class 'tuple'>

type(()) # Returns <class 'tuple'>

A tuple is in many ways similar to a list; one of the most important differences is that tuples can be used as keys in dictionaries and as elements of sets, while lists cannot:

d = {(x, x + 1): x for x in range(10)} # Create a dictionary with tuple keys

t = (1, 2) # Create a tuple

type(t) # Returns "<class 'tuple'>"

d[t] # Returns "1"

d[(1, 2)] # Returns "1"

l = [1, 2]

d[l] # Raises a "TypeError: unhashable type: 'list'"

d[[1, 2]] # Raises a "TypeError: unhashable type: 'list'"

- You can do most of the list operations on tuples too:

len(tup) # Returns 3

tup + (4, 5, 6) # Returns (1, 2, 3, 4, 5, 6)

tup[:2] # Returns (1, 2)

2 in tup # Returns True

- Just like lists, you can unpack tuples into variables:

a, b, c = (1, 2, 3) # a is now 1, b is now 2 and c is now 3

# You can also do extended unpacking

a, *b, c = (1, 2, 3, 4) # a is now 1, b is now [2, 3] and c is now 4

# Tuples are created by default if you leave out the parentheses

d, e, f = 4, 5, 6 # Tuple 4, 5, 6 is unpacked into variables d, e and f

# respectively such that d = 4, e = 5 and f = 6

# Now look how easy it is to swap two values

e, d = d, e # d is now 5 and e is now 4

-

You can find all you need to know about tuples in the Python documentation.

-

Similar to lists, when evaluating a range over tuple indices (something of the form

[x:y]wherexandyare some indices into the tuple), if our right-hand value exceeds the tuple length, Python simply returns the items from the list up until the value goes out of index range.

a = (1, 2, 3) # Max addressable index: 2

a[:3] # Does NOT return an error, instead returns (1, 2, 3)

Functions

- Python functions are defined using the

defkeyword:

def sign(x):

if x > 0:

return 'positive'

elif x < 0:

return 'negative'

else:

return 'zero'

for x in [-1, 0, 1]:

print(sign(x)) # Prints "negative", "zero", "positive"

- We will often define functions to take (optional) keyword arguments, like this:

def hello(name, loud=False):

if loud:

print('HELLO, %s!' % name.upper())

else:

print('Hello, %s' % name)

hello('Bob') # Prints "Hello, Bob"

hello('Fred', loud=True) # Prints "HELLO, FRED!"

- Keyword arguments can arrive in any order:

def add(x, y):

print("x is {} and y is {}".format(x, y))

return x + y

add(y=6, x=5) # Returns 11

- You can define functions that take a variable number of positional arguments. In a function definition,

*packs all arguments in a tuple (this process is called tuple-packing).

def varargs(*args):

return args

varargs(1, 2, 3) # Returns (1, 2, 3)

- You can define functions that take a variable number of keyword arguments, as well. In a function definition,

**packs all arguments in a dictionary (this process is called dictionary-packing).

def keyword_args(**kwargs):

return kwargs

keyword_args(big="foot", loch="ness") # Returns {"big": "foot", "loch": "ness"}

- You can do both tuple- and dictionary-packing, if you like:

def all_the_args(*args, **kwargs):

print(args) # Prints (1, 2)

print(kwargs) # Prints {"a": 3, "b": 4}

-

There is a lot more information about Python functions in the Python documentation.

-

In a function call,

*and**play the opposite role as in a function definition.*unpacks all arguments in a tuple (this process is called tuple-unpacking).**unpacks all arguments in a dictionary (this process is called dictionary-unpacking).

args = (1, 2, 3, 4)

kwargs = {"a": 3, "b": 4}

all_the_args(*args) # equivalent to all_the_args(1, 2, 3, 4)

all_the_args(**kwargs) # equivalent to all_the_args(a=3, b=4)

all_the_args(*args, **kwargs) # equivalent to all_the_args(1, 2, 3, 4, a=3, b=4)

- With Python, you can return multiple values from functions as intuitively as returning a single value:

def swap(x, y):

return y, x # Return multiple values as a tuple without the parenthesis.

# (Note: parenthesis have been excluded but can be included)

x = 1

y = 2

x, y = swap(x, y) # Returns x = 2, y = 1

# (x, y) = swap(x,y) # Again parenthesis have been excluded but can be included.

- Python’s functions are first-class objects. This implies that you can assign them to variables, store them in data structures, pass them as arguments to other functions, and even return them as values from other functions:

def create_adder(x):

def adder(y):

return x + y

return adder

add_10 = create_adder(10)

add_10(3) # Returns 13

- Note that while the following short-circuit AND code-pattern is seen in function return statements, it is rarely used but it is still valuable to know its usage:

# Short-circuit AND: The expression x and y first evaluates x; if x is false, its value is returned; otherwise, y is evaluated and the resulting value is returned.

# Per https://docs.python.org/3.6/reference/expressions.html#boolean-operations

def short_circuit_and(a, b):

return a and b

print(short_circuit_and(a=None, b=None)) # prints None

print(short_circuit_and(a=None, b=1)) # prints None

print(short_circuit_and(a=1, b=None)) # prints None

print(short_circuit_and(a=1, b=2)) # prints 2

- Note that

return a and bis equivalent to:

return None if not a else b

if a:

return b

else:

return None

Nested functions

-

A function defined inside another function is called a nested function. Nested functions can access variables of the enclosing (outer) function’s scope.

-

In Python, these “non-local” variables are read-only by default. To modify them from within a nested function, they must be explicitly declared as non-local using the

nonlocalkeyword. -

The following example demonstrates a nested function accessing a variable from its enclosing scope. In this case, the nested

printer()function reads the non-local variablemsgdefined inprint_msg().

def print_msg(msg):

# This is the outer enclosing function

def printer():

# This is the nested function

print(msg)

printer()

# We execute the function

# Output: Hello

print_msg("Hello")

- which outputs:

Hello

Defining a nonlocal variable in a nested function using nonlocal

-

A

nonlocaldeclaration is analogous to aglobaldeclaration. Both are needed only when a function assigns to a variable. Normally, such a variable would be treated as local to the function. -

The

nonlocalandglobaldeclarations cause the name to refer to a variable defined outside the current function. In other words,nonlocalallows assignment to a variable in an enclosing (but non-global) scope, similar to howglobalallows assignment to a variable in the global scope. -

See PEP 3104 for more details on

nonlocal. -

Note that if a function only reads a variable and does not assign to it, no declaration is needed; Python will automatically resolve the name by searching enclosing scopes.

-

As an example, consider the following code snippet that does not use

nonlocal. Each assignment toxcreates a new local variable:

x = 0

def outer():

x = 1

def inner():

x = 2

print("inner:", x)

inner()

print("outer:", x)

outer()

print("global:", x)

# inner: 2

# outer: 1

# global: 0

- The following variation uses

nonlocal, causinginner()to modify thexdefined inouter()instead of creating a new local variable:

x = 0

def outer():

x = 1

def inner():

nonlocal x

x = 2

print("inner:", x)

inner()

print("outer:", x)

outer()

print("global:", x)

# inner: 2

# outer: 2

# global: 0

- If we were to use

global, the assignment would bindxto the global variable instead, bypassing the enclosing function’s scope entirely:

x = 0

def outer():

x = 1

def inner():

global x

x = 2

print("inner:", x)

inner()

print("outer:", x)

outer()

print("global:", x)

# inner: 2

# outer: 1

# global: 2

Closure

-

A closure is formed when a nested function captures and retains access to variables from its enclosing scope, even after the outer function has finished executing.

-

Closures allow functions to carry state with them without relying on global variables or object-oriented constructs.

-

The captured variables are stored in the function’s closure and remain alive as long as the returned function exists.

-

The following example demonstrates a closure that remembers the value of

x:

def make_adder(x):

def adder(y):

return x + y

return adder

add_10 = make_adder(10)

add_10(3) # Returns 13

- Here,

adder()closes over the variablex. Even thoughmake_adder()has already returned,adder()continues to have access tox. - Closures are commonly used for function factories, decorators, and maintaining private state.

Defining a global variable using global

-

The

globalkeyword declares that a variable inside a function refers to a name in the global (module-level) scope. -

Without a

globaldeclaration, assigning to a variable inside a function always creates or modifies a local variable. -

The

globalkeyword should be used sparingly, as it introduces implicit dependencies and can make code harder to reason about. -

The following example demonstrates modifying a global variable from within a function:

x = 5

def set_global_x(num):

global x

x = num

set_global_x(10)

x # Returns 10

- In this example, the assignment inside

set_global_x()updates the globalxrather than creating a new local variable. - Prefer returning values from functions or using closures over

globalwhenever possible, as they lead to clearer and more maintainable code.

Type hinting

-

Type hinting was introduced in Python 3.5 as part of the

typingmodule. They provide a standardized way to annotate variables, function arguments, and return values with expected types. -

Type hints are not enforced at runtime. Python will not raise errors if types do not match; instead, hints are consumed by static type checkers, IDEs, linters, and human readers to improve correctness, tooling, and documentation.

Container types: legacy vs modern syntax

-

In Python 3.5–3.8, built-in container types such as

listanddictcannot be parameterized with type arguments. Attempting to writelist[int]in these versions raises aTypeError. -

To express container element types, equivalents from the

typingmodule must be used.

from typing import List, Dict, Tuple

Vector = List[float]

Config = Dict[str, int]

Point = Tuple[int, int]

- Starting in Python 3.9, built-in container types support generic type parameters directly. This allows type annotations to more closely resemble runtime objects and avoids importing container types from

typing.

Vector = list[float]

Config = dict[str, int]

Point = tuple[int, int]

- Both the legacy and modern forms are treated identically by static type checkers. For codebases targeting Python 3.9 or newer, the built-in generic syntax is preferred.

Basic function annotations

-

Function arguments and return values can be annotated in all Python versions that support type hints. These annotations document what types a function expects and what it promises to return.

-

In the function below,

nameis annotated asstr, and the return value is also annotated asstr.

def greeting(name: str) -> str:

return 'Hello ' + name

- Subclasses of

strare accepted due to normal Python subtyping rules. The annotation does not enforce type checking at runtime; passing a non-string value will only fail if the function body itself cannot handle it.

Type aliases

-

Type aliases allow complex or frequently repeated type expressions to be assigned a meaningful name. They were introduced alongside type hints in Python 3.5.

-

A type alias is created by assigning a type expression to a variable name. The alias does not define a new type; it is treated as interchangeable with the original type by static type checkers.

-

Legacy style (Python 3.5–3.8):

from typing import List

Vector = List[float]

- Modern style (Python 3.9+):

Vector = list[float]

- Using the alias improves readability and simplifies updates if the underlying type changes.

def scale(scalar: float, vector: Vector) -> Vector:

return [scalar * num for num in vector]

new_vector = scale(2.0, [1.0, -4.2, 5.4])

- In Python 3.11 and newer,

typing.TypeAliasmay be used to explicitly mark aliases, but simple assignment remains valid and commonly used.

Complex aliases

-

Type aliases are especially useful when working with nested or composite types, where inline annotations quickly become difficult to read.

-

Legacy style (Python 3.5–3.8):

from typing import Dict, Tuple

from collections.abc import Sequence

ConnectionOptions = Dict[str, str]

Address = Tuple[str, int]

Server = Tuple[Address, ConnectionOptions]

def broadcast_message(message: str, servers: Sequence[Server]) -> None:

...

- Modern style (Python 3.9+):

from collections.abc import Sequence

ConnectionOptions = dict[str, str]

Address = tuple[str, int]

Server = tuple[Address, ConnectionOptions]

def broadcast_message(message: str, servers: Sequence[Server]) -> None:

...

- A static type checker treats these aliased forms as exactly equivalent to their fully expanded versions, but aliases significantly improve clarity and maintainability.

Multiple types (Union, Optional, and |)

-

Sometimes a value may legitimately have more than one possible type. Python’s type system supports this through union types.

-

Legacy syntax (Python 3.5–3.9):

- When a value may be one of several types, use

typing.Union.

from typing import Union def parse(value: Union[int, str]) -> int: ...- When a value may either be of type

TorNone, usetyping.Optional.

from typing import Optional def find_user(name: str) -> Optional[str]: ...Optional[T]is exactly equivalent toUnion[T, None].

- When a value may be one of several types, use

-

Modern syntax (Python 3.10+):

- Python 3.10 introduced a more concise syntax using the

|operator to express unions. The|operator is commonly called the pipe operator; it is originally the bitwise OR operator, repurposed here for type unions.

def parse(value: int | str) -> int: ...- Optional values are written using

T | None.

def find_user(name: str) -> str | None: ... - Python 3.10 introduced a more concise syntax using the

-

Additional notes:

- The value

Nonehas its own distinct type, written astype(None), which evaluates at runtime to<class 'NoneType'>. - An argument having a default value does not automatically make it optional in the type system.

- Whenever

Noneis a valid value, it should be explicitly included in the type annotation.

- The value

Any

- The

Anytype is special in that it indicates an unconstrained datatype. A static type checker will treat every type as being compatible withAnyandAnyas being compatible with every type. - This means that it is possible to perform any operation or method call on a value of type Any and assign it to any variable:

from typing import Any

a: Any = None

a = [] # OK

a = 2 # OK

s: str = ''

s = a # OK

def foo(item: Any) -> int:

# Typechecks; 'item' could be any type,

# and that type might have a 'bar' method

item.bar()

...

- Notice that no typechecking is performed when assigning a value of type

Anyto a more precise type. For example, the static type checker did not report an error when assigningatoseven though s was declared to be of typestrand receives anintvalue at runtime! - Furthermore, all functions without a return type or parameter types will implicitly default to using

Any:

def legacy_parser(text):

...

return data

# A static type checker will treat the above

# as having the same signature as:

def legacy_parser(text: Any) -> Any:

...

return data

- This behavior allows

Anyto be used as an escape hatch when you need to mix dynamically and statically typed code.

Tuple

- Tuple type;

Tuple[X, Y]is the type of a tuple of two items with the first item of typeXand the second of typeY. The type of the empty tuple can be written asTuple[()]. - Example:

Tuple[T1, T2]is a tuple of two elements corresponding to type variablesT1andT2.Tuple[int, float, str]is a tuple of an int, a float and a string. - To specify a variable-length tuple of homogeneous type, use literal ellipsis, e.g.

Tuple[int, ...]. A plain Tuple is equivalent toTuple[Any, ...], and in turn totuple(starting Python 3.9). - In the example below, we’re expecting the type of the

pointsvariable to be a tuple that contains two floats within.

from typing import Tuple

def example(points: Tuple[float, float]):

return map(do_stuff, points)

List

- Practically similar to tuple type, just that list type is, as the name suggests, for lists.

- In the example below, we’re expecting the function to return a list of dicts that map strings as keys to strings as values.

from typing import List

def example() -> List[Dict[str, str]]:

return [{"1":"2"}]

Union

- Used to signify support for two or more dataypes;

Union[X, Y]is equivalent toX | Yand means eitherX or Y. - To define a union, use e.g.

Union[int, str]or the shorthandint | str. Using the shorthand version is recommended. Details: - The arguments must be types and there must be at least one.

- Unions of unions are flattened, e.g.:

Union[Union[int, str], float] == Union[int, str, float]

- Unions of a single argument vanish, e.g.:

Union[int] == int # The constructor actually returns int

- Redundant arguments are skipped, e.g.:

Union[int, str, int] == Union[int, str] == int | str

- When comparing unions, the argument order is ignored, e.g.:

Union[int, str] == Union[str, int]

- You cannot subclass or instantiate a Union.

- You cannot write

Union[X][Y].

Optional

Optional[X]is equivalent toX | None(orUnion[X, None]). In other words,Optional[...]is a shorthand notation forUnion[..., None], telling the type checker that either an object of the specific type is required, orNoneis required. Note that...stands for any valid type hint, including complex compound types or aUnion[]of more types.- Note that this is not the same concept as an optional argument, which is one that has a default. An optional argument with a default does not require the

Optionalqualifier on its type annotation just because it is optional. For example:

def foo(arg: int = 0) -> None:

...

- On the other hand, if an explicit value of

Noneis allowed, the use ofOptionalis appropriate, whether the argument is optional or not. For example:

def foo(arg: Optional[int] = None) -> None:

...

- Thus, whenever you have a keyword argument with default value

None, you should useOptional. (Note: If you are targeting Python 3.10 or newer, PEP 604 introduced a better syntax, see below). - As an two example, if you have

dictandlistcontainer types, but the default value for the a keyword argument shows thatNoneis permitted too, useOptional[...]:

from typing import Optional

def test(a: Optional[dict] = None) -> None:

#print(a) ==> {'a': 1234}

#or

#print(a) ==> None

def test(a: Optional[list] = None) -> None:

#print(a) ==> [1, 2, 3, 4, 'a', 'b']

#or

#print(a) ==> None

- There is technically no difference between using

Optional[]on aUnion[], or just addingNoneto theUnion[]. SoOptional[Union[str, int]]andUnion[str, int, None]are exactly the same thing. -

As a recommendation, stick to using

Optional[]when setting the type for a keyword argument that uses= Noneto set a default value, this documents the reason whyNoneis allowed better. Moreover, it makes it easier to move theUnion[...]part into a separate type alias, or to later remove theOptional[...]part if an argument becomes mandatory. - For example:

from typing import Optional, Union

def api_function(optional_argument: Optional[Union[str, int]] = None) -> None:

"""API Function that does blah.

If optional_argument is given, it must be an id of type string or int.

"""

- then documentation is improved by pulling out the

Union[str, int]into a type alias:

from typing import Optional, Union

# ID types can be strings or integers -- support both.

IdTypes = Union[str, int]

def api_function(optional_argument: Optional[IdTypes] = None) -> None:

"""API Function that does blah.

If optional_argument is given, it must be an id of type string or int.

"""

- The refactor to move the

Union[]into an alias was made all the much easier becauseOptional[...]was used instead ofUnion[str, int, None]. TheNonevalue is not a ID type after all, it’s not part of the value,Noneis meant to flag the absence of a value. - When only reading from a container type, you may just as well accept any immutable abstract container type; lists and tuples are

Sequenceobjects, whiledictis aMappingtype:

from typing import Mapping, Optional, Sequence, Union

def test(a: Optional[Mapping[str, int]] = None) -> None:

"""accepts an optional map with string keys and integer values"""

# print(a) ==> {'a': 1234}

# or

# print(a) ==> None

def test(a: Optional[Sequence[Union[int, str]]] = None) -> None:

"""accepts an optional sequence of integers and strings

# print(a) ==> [1, 2, 3, 4, 'a', 'b']

# or

# print(a) ==> None

- In Python 3.9 and up, the standard container types have all been updated to support using them in type hints, see PEP 585. But, while you now can use

dict[str, int]orlist[Union[int, str]], you still may want to use the more expressiveMappingandSequenceannotations to indicate that a function won’t be mutating the contents (they are treated as ‘read only’), and that the functions would work with any object that works as a mapping or sequence, respectively. - Python 3.10 introduces the

|union operator into type hinting, see PEP 604. Instead ofUnion[str, int]you can writestr | int. In line with other type-hinted languages, the preferred (and more concise) way to denote an optional argument in Python 3.10 and up, is nowType | None, e.g.str | Noneorlist | None.

Control flow

if statement

# Let's just make a variable

some_var = 5

# Here is an if statement.

# This prints "some_var is smaller than 10"

if some_var > 10:

print("some_var is totally bigger than 10.")

elif some_var < 10: # This elif clause is optional.

print("some_var is smaller than 10.")

else: # This is optional too.

print("some_var is indeed 10.")

Conditional expression

ifcan also be used as an expression to form a conditional expression, as an equivalent of C’s?:ternary operator:

"yahoo!" if 3 > 2 else 2 # Returns "yahoo!"

- Conditional expressions can be used in all kinds of situations where you want to choose between two expression values based on some condition:

value = 123

print(value, 'is', 'even' if value % 2 == 0 else 'odd')

for loop

forloops iterate over iterables such as lists, tuples, dictionaries, and sets.

for animal in ["dog", "cat", "mouse"]:

print("{} is a mammal".format(animal))

- which outputs:

dog is a mammal

cat is a mammal

mouse is a mammal

range()andforloops are a powerful combination.range(number)returns an iterable of numbers from zero to the given number. More onrange()in its dedicated section.

for i in range(4):

print(i)

- which outputs:

0

1

2

3

range(lower, upper)returns an iterable of numbers from the lower number to the upper number.

for i in range(4, 8):

print(i)

- which outputs:

4

5

6

7

range(lower, upper, step)returns an iterable of numbers from the lower number to the upper number, while incrementing by step. If step is not indicated, the default value is1.

for i in range(4, 8, 2):

print(i)

- which outputs:

4

6

- To loop over a list, and retrieve both the index and the value of each item in the list, use

enumerate(iterable):

animals = ["dog", "cat", "mouse"]

for i, value in enumerate(animals):

print(i, value)

- which outputs:

0

1

2

- For an in-depth treatment on how

forloops work in Python, refer to our section on The Iterator Protocol.

else clause

forloops also have anelseclause which most of us are unfamiliar with. Theelseclause executes after the loop completes normally. This means that the loop did not encounter abreakstatement. They are really useful once you understand where to use them.- The common construct is to run a loop and search for an item. If the item is found, we break out of the loop using the

breakstatement. There are two scenarios in which the loop may end. The first one is when the item is found andbreakis encountered. The second scenario is that the loop ends without encountering abreakstatement. Now we may want to know which one of these is the reason for a loop’s completion. One method is to set a flag and then check it once the loop ends. Another is to use theelseclause. - This is the basic structure of a

for/elseloop:

found_obj = None

for obj in objects:

if obj.key == search_key:

# Found it!

found_obj = obj

break

else:

# Didn't find anything

print('No object found.')

- Using

for-elseorwhile-elseblocks in production code is not recommended owing to their obscurity. Thus, anytime you see this construct, a better alternative is to either encapsulate the search in a function:

def find_obj(search_key):

for obj in objects:

if obj.key == search_key:

return obj

- Or simply use a list comprehension:

matching_objs = [o for o in objects if o.key == search_key]

if matching_objs:

print('Found {}'.format(matching_objs[0]))

else:

print('No object found.')

- Note that while the list comprehension version is not semantically equivalent to the other two versions, but it works good enough for non-performance critical code where it doesn’t matter whether you iterate the whole list or not.

- Consider a simple example, which finds factors for numbers between 2 to 10:

for n in range(2, 10):

for x in range(2, n):

if n % x == 0:

print(n, 'equals', x, '*', n/x)

break

- By adding an additional

elseblock which catches the numbers which have no factors and are therefore prime numbers:

for n in range(2, 10):

for x in range(2, n):

if n % x == 0:

print( n, 'equals', x, '*', n/x)

break

else:

# Loop fell through without finding a factor

print(n, 'is a prime number')

while loop

- While loops go on until a condition is no longer met:

x = 0

while x < 4:

print(x)

x += 1 # Shorthand for x = x + 1

- which outputs:

0

1

2

3

Lambda functions

- Lambda expressions are a special syntax in Python for creating anonymous functions. The

lambdasyntax itself is generally referred to as a lambda expression, while the function you get back from this is called a lambda function. - Python’s lambda expressions allow a function to be created and passed around (often into another function) all in one line of code.

- Lambda expressions allow us to take this code:

colors = ["Goldenrod", "Purple", "Salmon", "Turquoise", "Cyan"]

def normalize_case(string):

return string.casefold()

normalized_colors = map(normalize_case, colors)

- And turn it into this code:

colors = ["Goldenrod", "Purple", "Salmon", "Turquoise", "Cyan"]

normalized_colors = map(lambda s: s.casefold(), colors)

- Lambda expressions are just a special syntax for making functions. They can only have one statement in them and they return the result of that statement automatically.

- The inherent limitations of

lambdaexpressions are actually part of their appeal. When an experienced Python programmer sees a lambda expression they know that they’re working with a function that is only used in one place and does just one thing. - Other examples of lambda functions:

(lambda x: x > 2)(3) # Returns "True"

(lambda x, y: x ** 2 + y ** 2)(2, 1) # Returns "5"

Lambda function use-cases

- You’ll typically see

lambdaexpressions used when calling functions (or classes) that accept a function as an argument. - Python’s built-in

sortedfunction accepts a function as itskeyargument. This key function is used to compute a comparison key when determining the sorting order of items. - So

sortedis a great example of a place that lambda expressions are often used:

colors = ["Goldenrod", "purple", "Salmon", "turquoise", "cyan"]

sorted(colors, key=lambda s: s.casefold()) # Returns ['cyan', 'Goldenrod', 'purple', 'Salmon', 'turquoise']

- The above code returns the given colors sorted in a case-insensitive way.

- The sorted function isn’t the only use of lambda expressions, but it’s a common one.

The pros and cons of lambda functions

- Both

lambdaexpressions anddefoffer tools to define functions, but each of them have different limitations and use a different syntax. - The main ways lambda expressions are different from def:

- They can be immediately passed around (no variable needed)

- They can only have a single line of code within them

- They return automatically

- They can’t have a docstring and they don’t have a name

- They use a different and unfamiliar syntax

- The fact that

lambdaexpressions can be passed around is their biggest benefit. Returning automatically is neat but generally not a big benefit. I find the “single line of code” limitation is neither good nor bad overall. The fact that lambda functions can’t have docstrings and don’t have a name is unfortunate and their unfamiliar syntax can be troublesome for newer Pythonistas.

Misuse and overuse scenarios

- In some cases, lambda expressions are used in ways that are unideal. Other times lambda expressions are simply being overused, i.e., they’re acceptable but code written a different way would probably serve better.

- Let’s take a look at the various ways lambda expressions are misused and overused.

Misuse: naming lambda expressions

- PEP8, the official Python style guide, advises never to write code like this:

normalize_case = lambda s: s.casefold()

- The above statement makes an anonymous function and then assigns it to a variable. The above code ignores the reason lambda functions are useful: lambda functions can be passed around without needing to be assigned to a variable first.

- If you want to create a one-liner function and store it in a variable, you should use

definstead:

def normalize_case(s): return s.casefold()

- PEP8 recommends this because named functions are a common and easily understood thing. This also has the benefit of giving our function a proper name, which could make debugging easier. Unlike functions defined with

def, lambda functions never have a name (it’s always<lambda>):

normalize_case = lambda s: s.casefold()

normalize_case # Returns "<function <lambda> at 0x7f264d5b91e0>"

def normalize_case(s): return s.casefold()

normalize_case # Returns "<function normalize_case at 0x7f247f68fea0>"

- If you want to create a function and store it in a variable, define your function using

def. That’s exactly what it’s for. It doesn’t matter if your function is a single line of code or if you’re defining a function inside of another function,defworks just fine for those use cases.

Misuse: needless function calls

- I frequently see lambda expressions used to wrap around a function that was already appropriate for the problem at hand.

- For example take this code:

sorted_numbers = sorted(numbers, key=lambda n: abs(n))