Primers • Knowledge Distillation

- Overview

- Definition

- Classical Distillation Families

- Offline, Online, and Semi-Online Distillation

- On-Policy and Off-Policy Distillation

- Relationship Between Offline/Online and Off-Policy/On-Policy Distillation

- Off-Policy and On-Policy Distillation for Autoregressive LLMs

- Divergence Choice: Forward KL, Reverse KL, and JSD

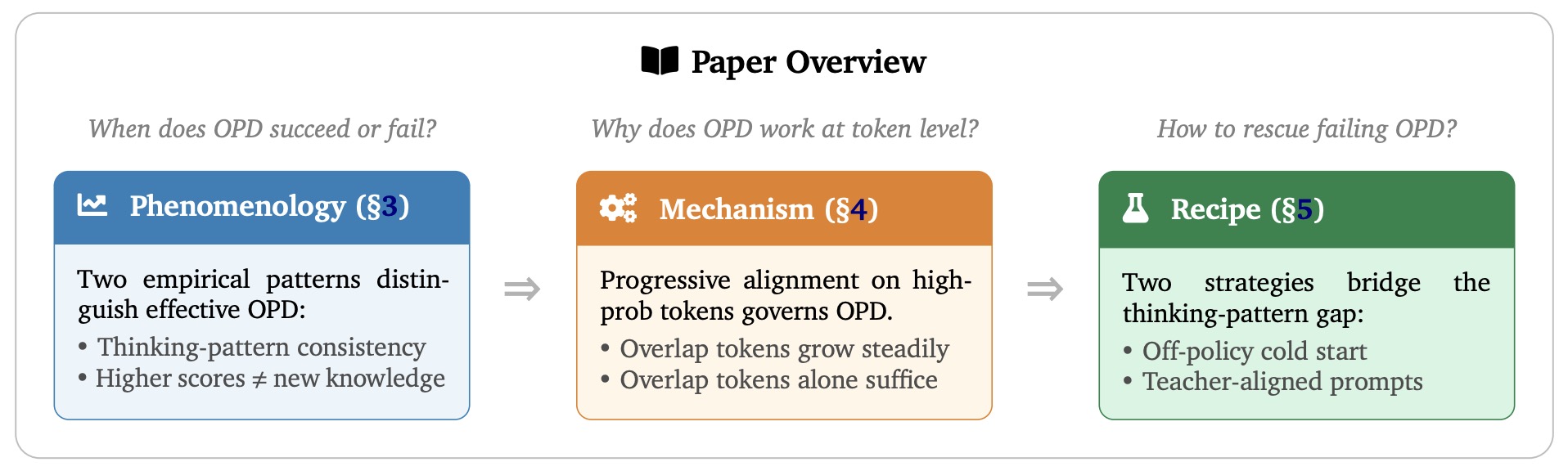

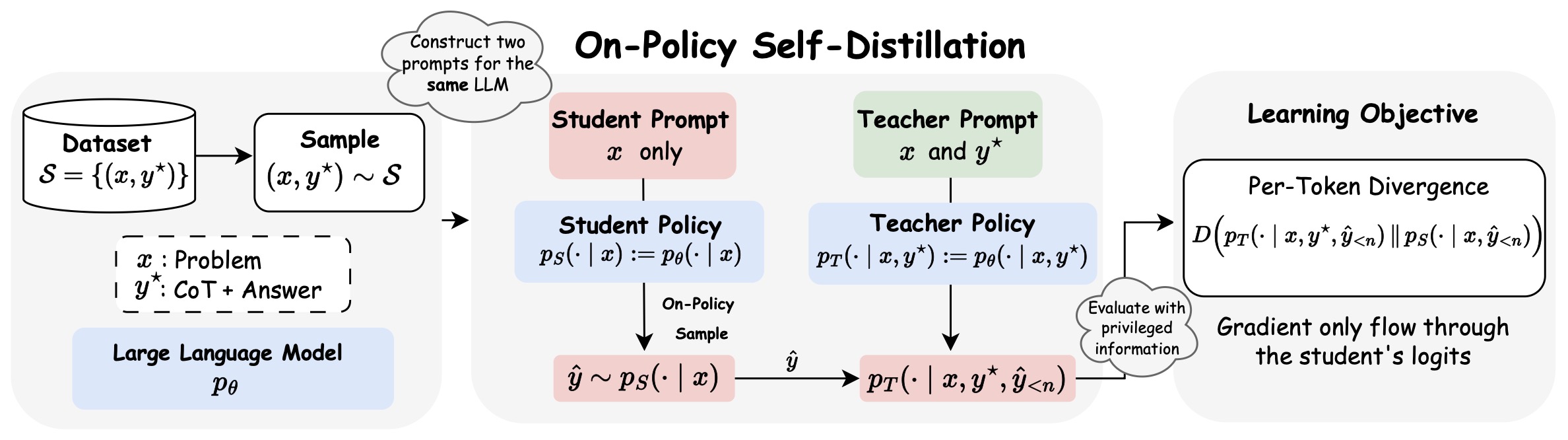

- Self-Distillation and On-Policy Self-Distillation

- Distillation as Synthetic Data and Post-Training Infrastructure

- Distillation and Reinforcement Learning

- Multi-Domain Post-Training and Capability Consolidation

- Implementation View

- Primer Roadmap

- Foundations

- Teacher–Student Formulation

- Temperature Scaling and Soft Targets

- Token-Level Distillation in Autoregressive Models

- Divergence Choices and Their Effects

- Supervised Distillation and Sequence-Level Distillation

- Representation and Intermediate-Layer Distillation

- Distillation as Synthetic Data Generation

- Limitations of Classical Distillation

- Implementation Considerations

- Offline Distillation

- Online Distillation

- Core Definition

- Relationship to Offline, Off-Policy, and On-Policy Distillation

- Major Forms of Online Distillation

- Advantages of Online Distillation

- Limitations of Online Distillation

- Semi-Online and Hybrid Approaches

- Online Distillation in Modern LLM Training

- Implementation Pattern

- Online Distillation in the Broader Distillation Taxonomy

- Off-Policy Distillation

- Definition and Formal Objective

- Sources of Off-Policy Data

- Sequence-Level Distillation

- Logit Distillation

- Synthetic Data Pipelines

- Advantages of Off-Policy Distillation

- Limitations: Distribution Mismatch

- Behavioral Consequences

- Relationship to Reinforcement Learning

- Engineering and Systems Considerations

- When Off-Policy Distillation is Preferred

- On-Policy Distillation (OPD)

- Core Idea and Formal Objective

- Intuition: Learning from One’s Own Mistakes

- Generalized Knowledge Distillation (GKD)

- Choice of Divergence and Reward Interpretation

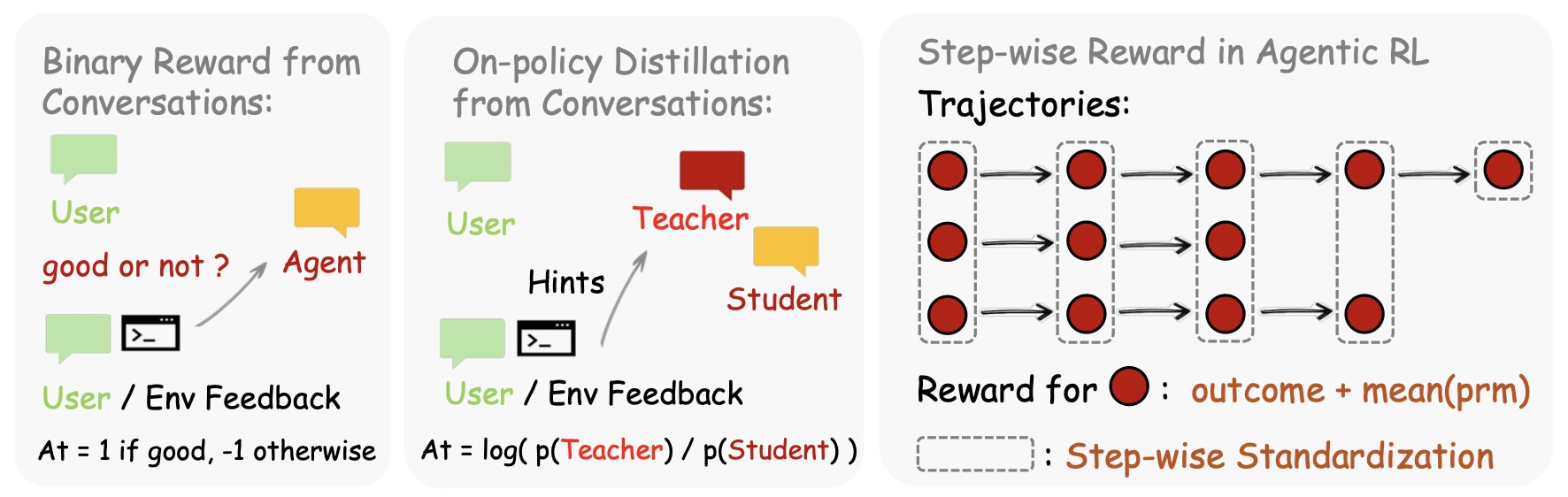

- Distillation and Reinforcement Learning

- Reinforcement Learning via Self-Distillation

- Learning beyond Teacher: Generalized On-Policy Distillation with Reward Extrapolation

- Scaling Reasoning Efficiently via Relaxed On-Policy Distillation

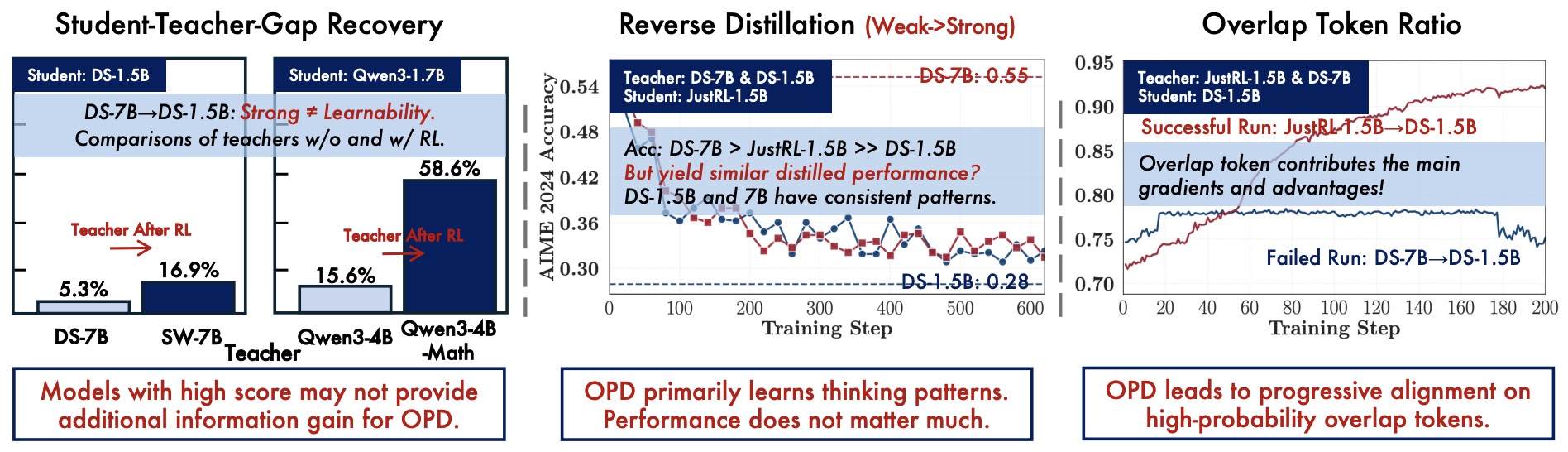

- Self-Distilled RLVR

- Unifying Group-Relative and Self-Distillation Policy Optimization via Sample Routing

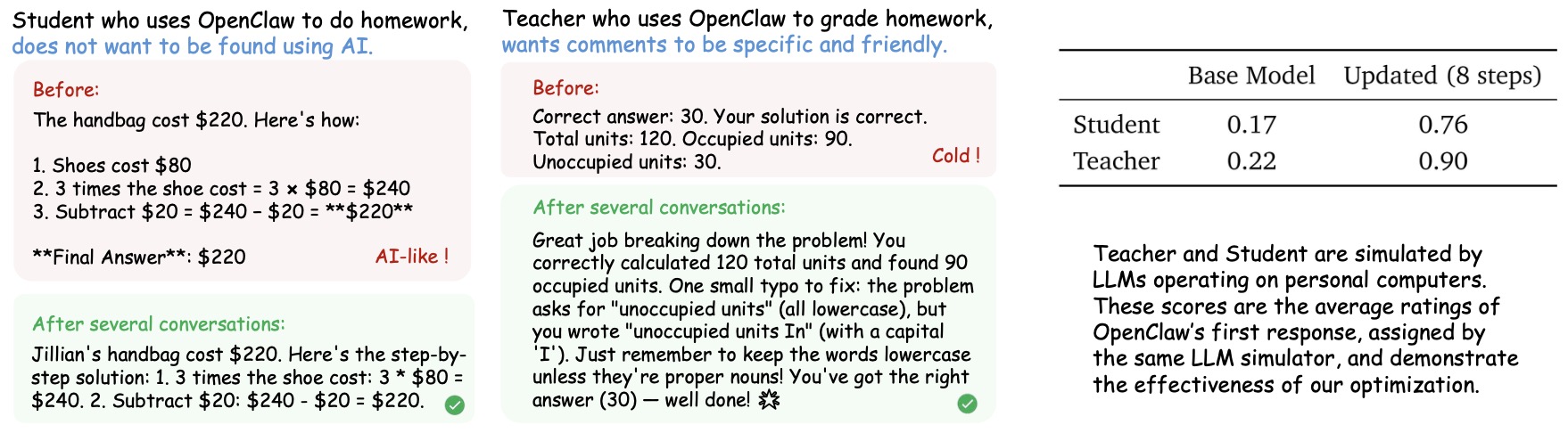

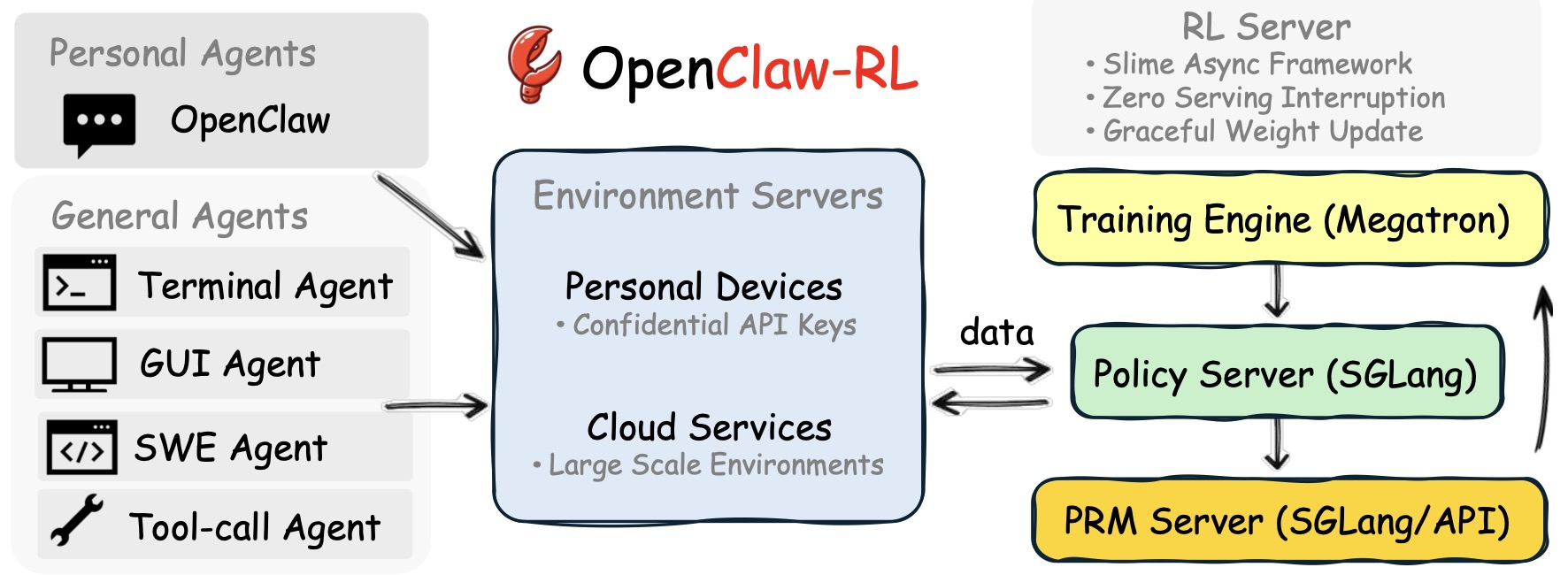

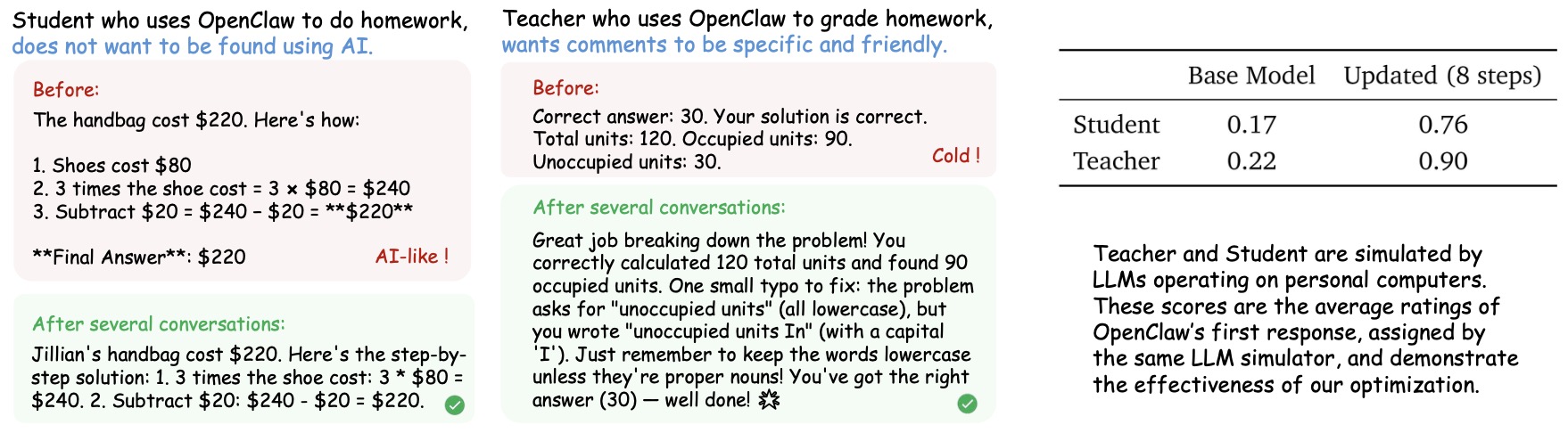

- OpenClaw-RL: Train Any Agent Simply by Talking

- Practical Failure Modes and Stabilization Recipes

- Practical Training Loop

- Self-Distillation (SD)

- Core Formulation

- Temporal Self-Distillation

- Ensemble and Multi-View Self-Distillation

- Contextual Self-Distillation

- On-Policy Self-Distillation (OPSD)

- Self-Distillation as Reinforcement Learning

- Reinforcement Learning via Self-Distillation

- Self-Distilled RLVR

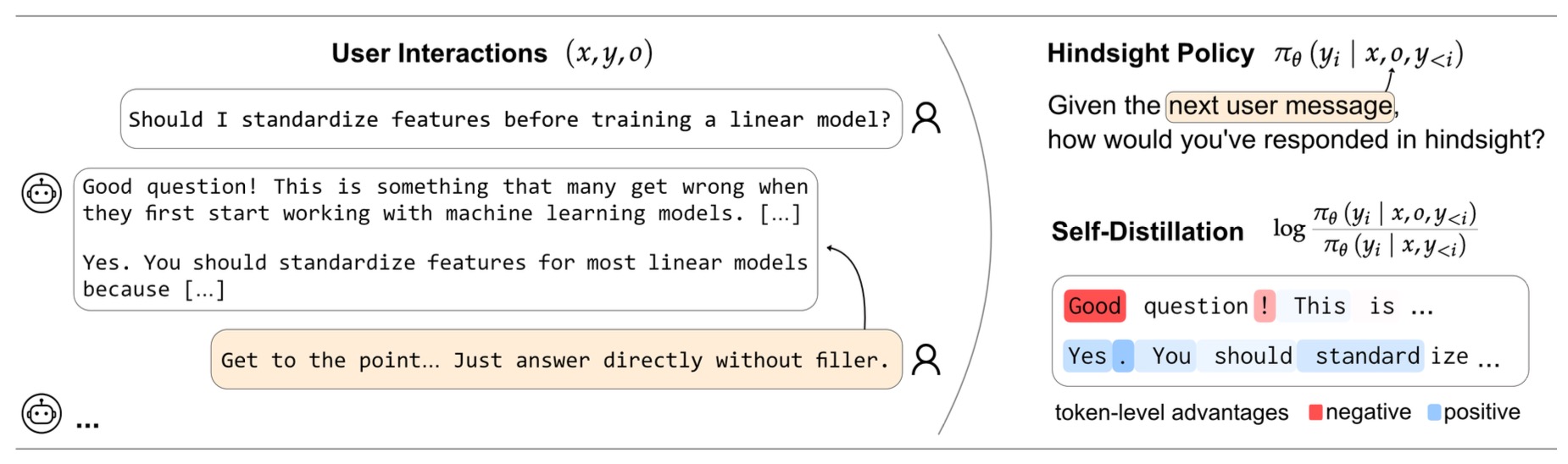

- Aligning Language Models from User Interactions

- Self-Distillation in Agentic Systems

- Advantages of Self-Distillation

- Limitations and Failure Modes

- When Self-Distillation is Preferred

- Multi-Teacher Distillation

- Core Formulation

- Motivation: Capability Consolidation and the See-Saw Problem

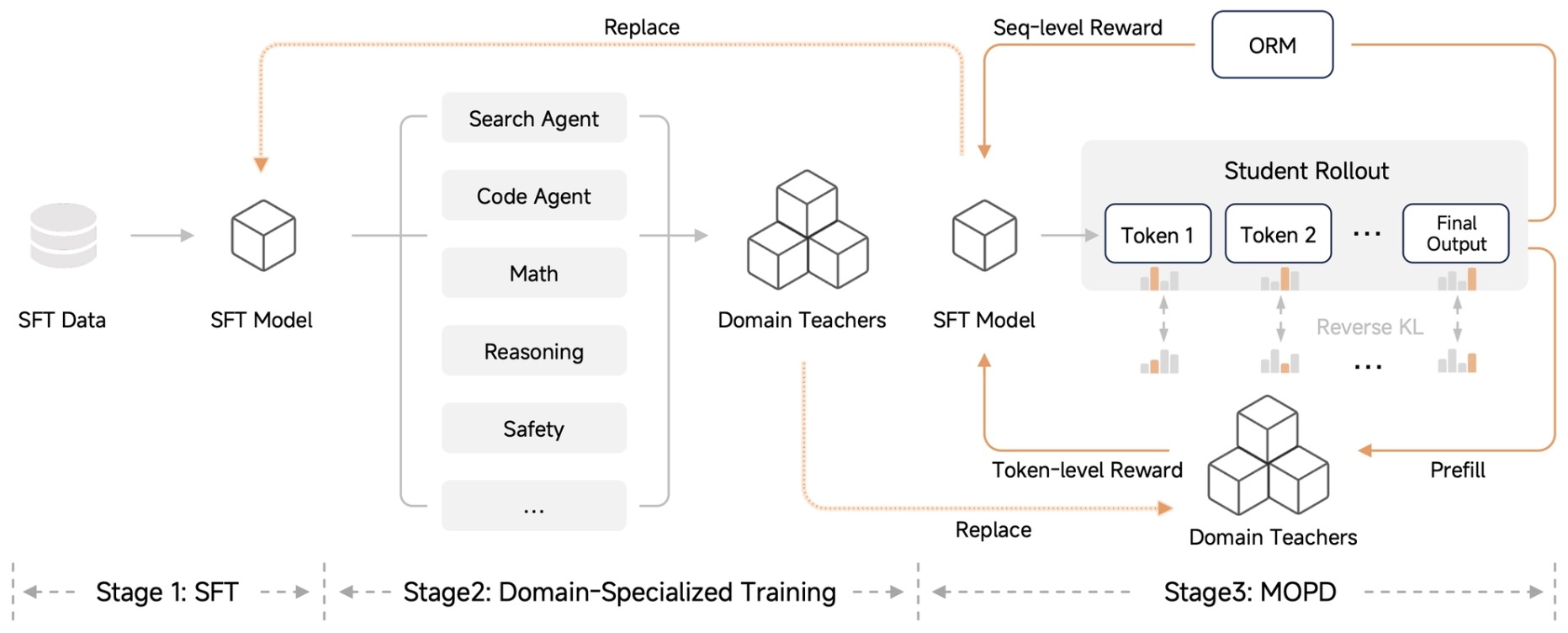

- Multi-Teacher On-Policy Distillation (MOPD)

- Reverse KL and Advantage Interpretation

- Teacher Selection and Routing Strategies

- Multi-Teacher Distillation and Reinforcement Learning

- Engineering and Systems Design

- Advantages of Multi-Teacher Distillation

- Limitations and Challenges

- When Multi-Teacher Distillation is Preferred

- Comparative Analysis

- Implementation Patterns

- Canonical Distillation Dataflow

- Off-Policy Pipeline Architecture

- On-Policy Pipeline Architecture

- Generation Buffers and Asynchronous Execution

- Teacher Scoring Infrastructure

- Log-Probability Payload Design

- Full-Vocabulary Versus Top-k Approximation

- Tokenizer Compatibility and Alignment

- Stabilization Mechanisms

- Multi-Teacher Routing Infrastructure

- Self-Distillation Systems

- RL–Distillation Hybrid Systems

- Evaluation and Regression Monitoring

- Practical Design Defaults

- Decision Guide for Choosing a Distillation Method

- References

- Foundational distillation papers

- On-policy distillation and generalizations

- Self-distillation and privileged supervision

- Multi-teacher and capability consolidation

- Agentic and interaction-driven distillation

- Synthetic data and RLHF references

- Imitation learning and exposure bias

- Systems, tooling, and infrastructure

- Blogs and implementation guides

- Twitter / X threads and informal discussions

- Broader LLM training context

- Citation

Overview

Definition

-

Distillation is a training paradigm in which a student model is optimized to reproduce useful behavior from a teacher model, usually to obtain a model that is cheaper, faster, smaller, easier to deploy, or more specialized than the teacher. The canonical formulation was popularized in Distilling the Knowledge in a Neural Network by Hinton et al. (2015), which showed that a student can learn from the teacher’s softened output probabilities rather than only from hard labels.

-

At a high level, distillation replaces or augments ordinary supervised learning with a matching objective between teacher and student distributions. For a teacher distribution \(p_T\) and student distribution \(p_S^\theta\) over labels or next tokens, a standard token-level objective is:

\[\mathcal{L}_{KD}(\theta) =\mathbb{E}_{(x,y)} \left[ D\left( p_T(\cdot \mid x, y_{<t}) \,\Vert\, p_S^\theta(\cdot \mid x, y_{<t}) \right) \right]\]- where \(D\) is usually forward KL, reverse KL, Jensen-Shannon divergence, cross-entropy on sampled teacher outputs, or a task-specific hybrid. In language models, the conditioning context includes the prompt \(x\) and the partial output \(y_{<t}\), so distillation is fundamentally about matching next-token behavior under particular trajectories.

Classical Distillation Families

-

Classical distillation has several major families. Logit or soft-label distillation matches the teacher’s probability distribution directly, often with temperature scaling. Sequence-level distillation trains on full outputs generated by the teacher, as introduced for neural machine translation in Sequence-Level Knowledge Distillation by Kim and Rush (2016), where teacher-generated translations serve as simplified targets for the student. Representation distillation matches hidden states, attention maps, embeddings, or intermediate features, which is common in encoder models such as DistilBERT by Sanh et al. (2019), which combines language-modeling, distillation, and cosine-distance losses to compress BERT.

-

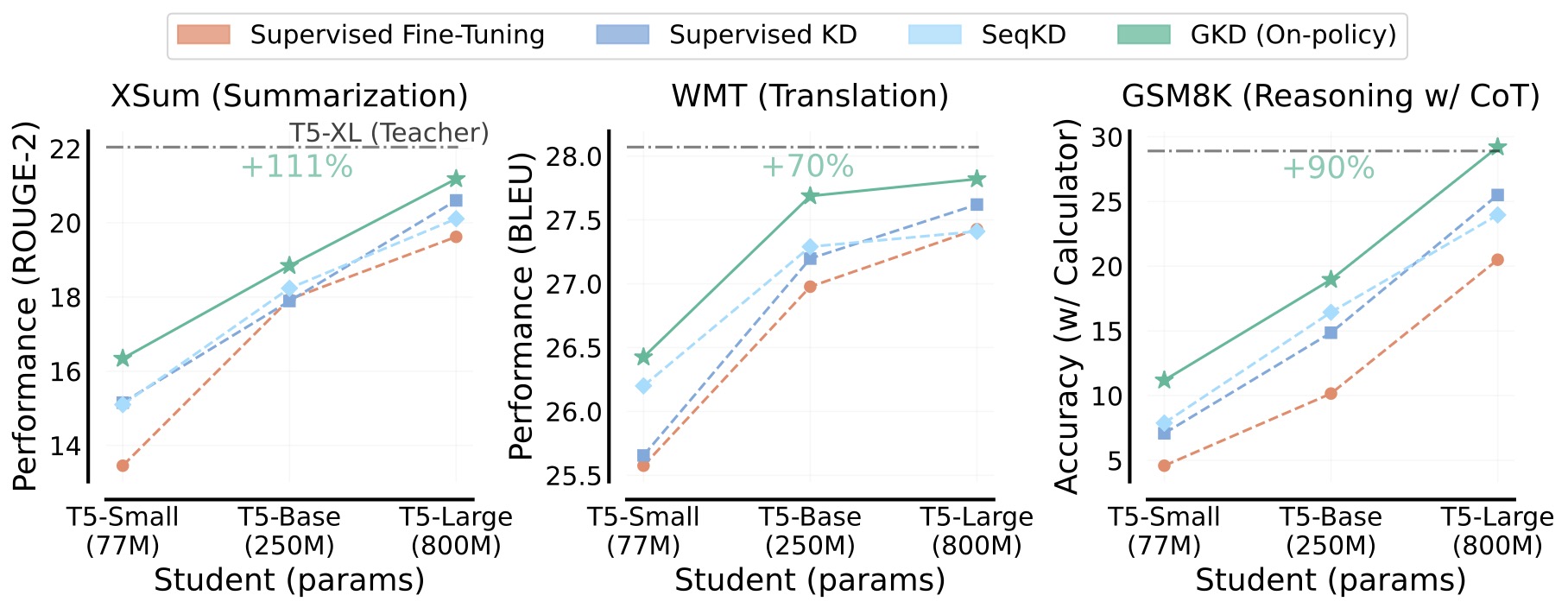

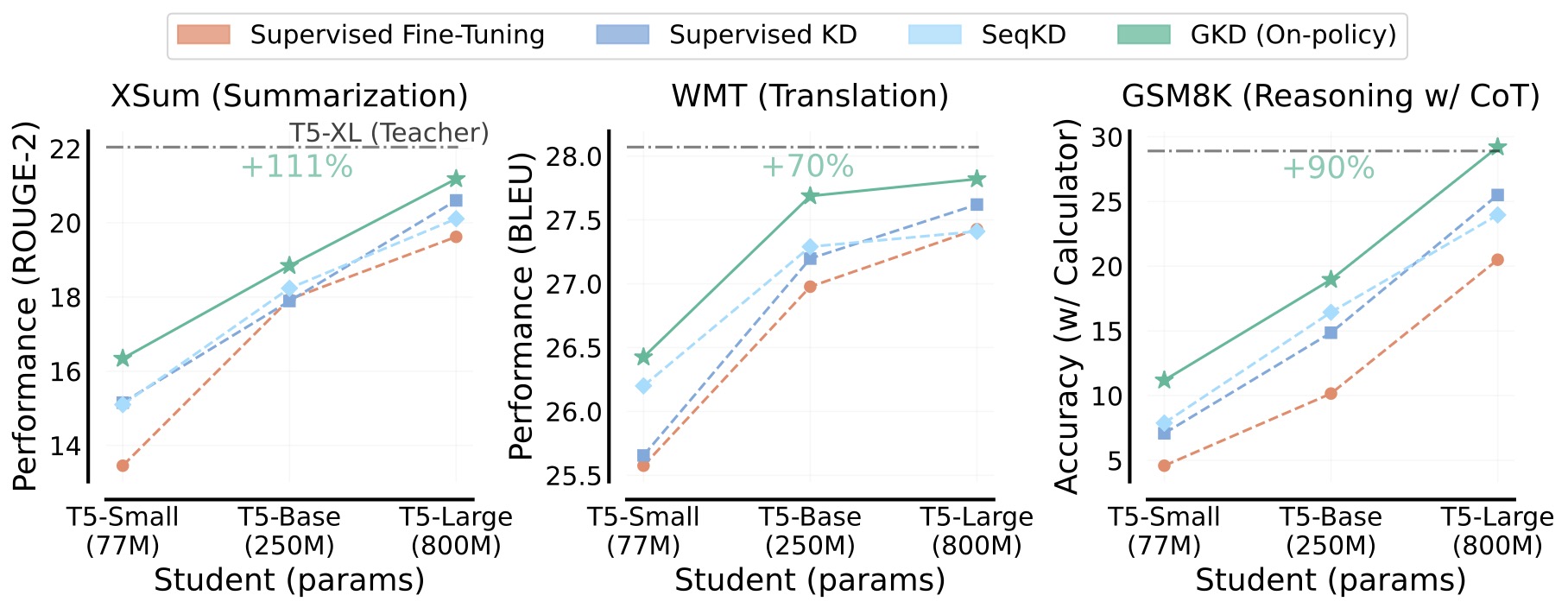

The following figure (source) shows an overview comparison of Generalized Knowledge Distillation with supervised fine-tuning, supervised KD, and sequence-level KD across summarization, translation, and reasoning tasks, emphasizing that on-policy GKD trains on student-generated outputs rather than only fixed target sequences.

Offline, Online, and Semi-Online Distillation

-

Distillation can also be categorized by whether the teacher is fixed or co-trained. Offline distillation uses a pretrained, frozen teacher and trains a student from stored or live teacher outputs; this is the standard teacher-student setting used by most classical KD systems. Online distillation trains multiple students, peers, or teacher-like supervisors simultaneously, so the teaching signal evolves during training rather than coming from a fixed teacher. Deep Mutual Learning by Zhang et al. (2017) is a canonical online distillation method in which peer networks learn collaboratively and teach each other throughout training. Co-distillation and online mutual learning therefore differ from ordinary offline KD not because they necessarily change the loss, but because the teacher distribution is non-stationary and coupled to the student’s optimization.

-

Semi-online distillation sits between offline and online regimes. One common form keeps a strong pretrained teacher but periodically adapts or updates an auxiliary teacher, supervisor, or student ensemble. Shadow Knowledge Distillation: Bridging Offline and Online Knowledge Transfer by Li et al. (2022) studies the empirical performance gap between offline and online distillation and attributes much of the benefit of online methods to reversed student-to-teacher transfer rather than only to simultaneous training.

On-Policy and Off-Policy Distillation

-

On-policy and off-policy distillation classify methods according to the source of the trajectories on which the distillation loss is computed, rather than according to whether the teacher is frozen or co-trained. This distinction is especially important for autoregressive language models, where each token changes the future contexts that the model will encounter during generation.

-

In off-policy distillation, the student is trained on sequences generated by an external source, such as a human-labeled dataset, teacher-generated completions, or another model’s rollouts. The student does not determine the contexts on which it is supervised. Classical supervised knowledge distillation, sequence-level distillation, and most synthetic-data pipelines fall into this category. Sequence-Level Knowledge Distillation by Kim and Rush (2016) and standard supervised KD as described in DistilBERT by Sanh et al. (2019) are canonical off-policy approaches.

-

In on-policy distillation, the student first samples its own trajectories and then receives dense teacher supervision along those exact rollouts. The training data distribution therefore evolves with the student. On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024) formalizes this idea as Generalized Knowledge Distillation (GKD), which treats distillation as an imitation-learning problem and trains on student-generated sequences rather than only fixed datasets.

-

The core GKD procedure samples a student trajectory with probability \(\lambda\) and otherwise falls back to dataset trajectories, then minimizes a divergence between teacher and student token distributions over the resulting sequences. This interpolates continuously between purely off-policy and purely on-policy training.

-

Formally, the on-policy objective can be written as:

-

The key advantage is that the student is trained in the same types of contexts it will encounter during inference, mitigating exposure bias and compounding errors. Thinking Machines Lab describes this as combining the on-policy relevance of reinforcement learning with the dense per-token supervision of distillation.

-

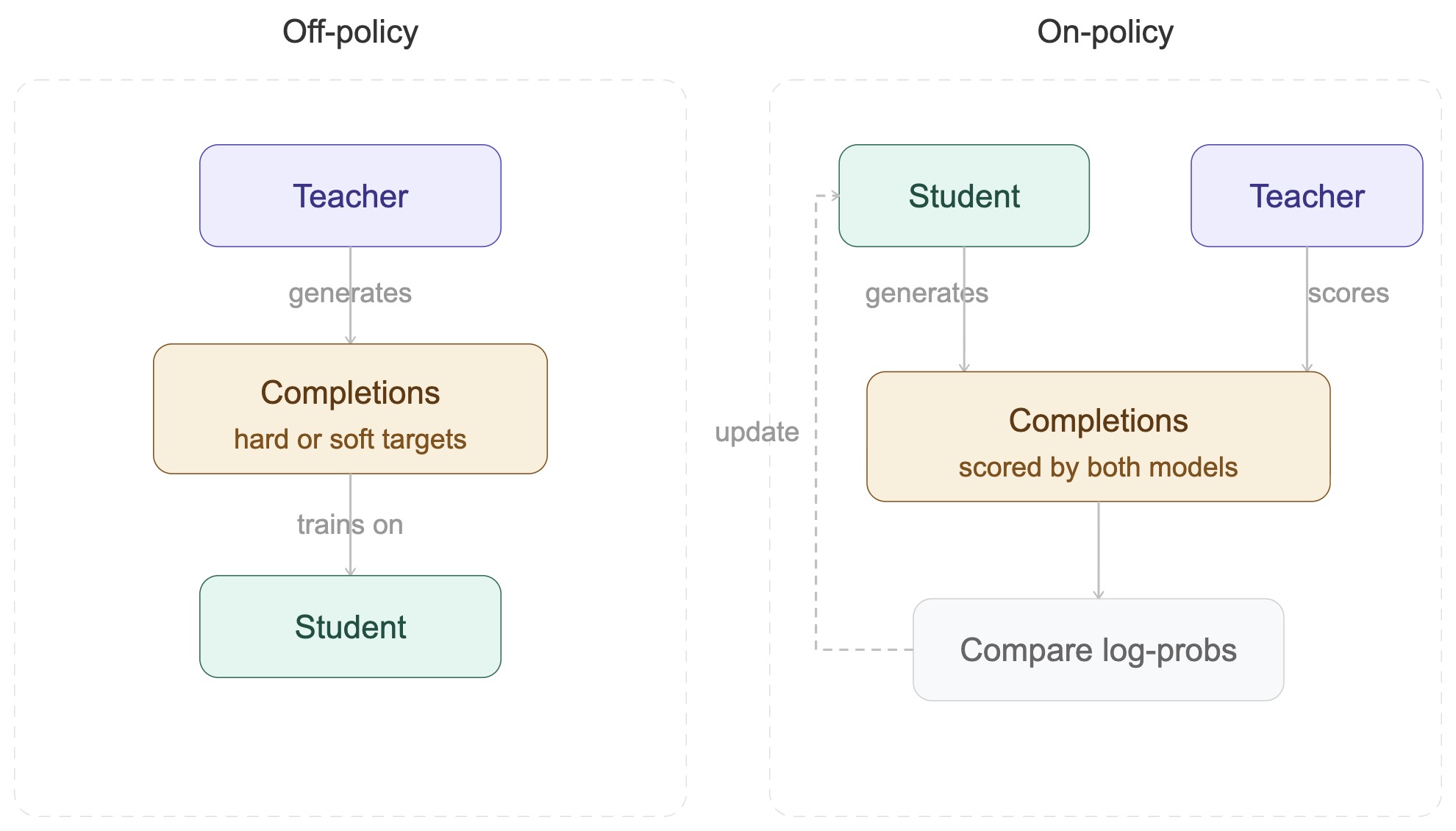

The following figure shows the distinction between off-policy and on-policy distillation: off-policy training uses teacher-generated completions, whereas on-policy training samples the student’s own rollouts and evaluates those exact rollouts with the teacher.

-

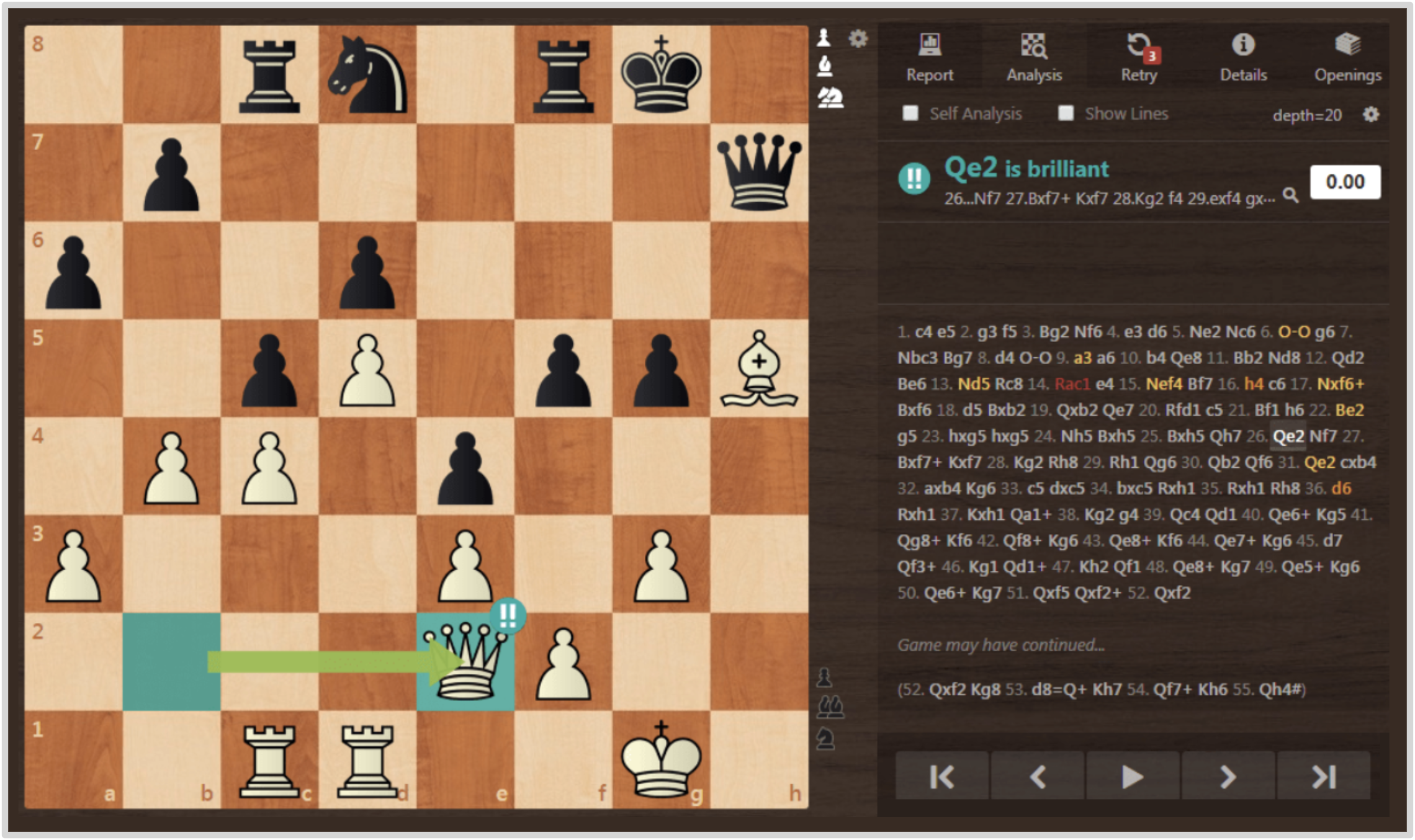

A useful intuition is provided by the chess analogy from Thinking Machines Lab. Off-policy distillation is like watching grandmaster games: the learner observes strong moves, but only in positions the expert encounters. On-policy distillation is like having a chess engine annotate every move in your own games, identifying precisely which moves were brilliant and which were blunders.

-

The following figure shows the chess analogy, illustrating how on-policy distillation provides move-by-move feedback on the student’s own trajectories.

-

From an implementation perspective, off-policy distillation is simpler because teacher outputs can be precomputed and reused. On-policy distillation is more computationally demanding because student rollouts and teacher evaluations must be generated repeatedly during training. However, it often delivers superior performance on long-horizon reasoning tasks because it teaches the student to recover from its own mistakes rather than only imitate ideal trajectories. Distilling 100B+ Models 40x Faster with TRL demonstrates practical infrastructure for large-scale OPD, including generation buffers, batched teacher queries, and compressed binary log-probability transfer to make 100B+ teachers tractable.

-

On-policy and off-policy are orthogonal to the offline/online distinction. A frozen teacher can be used in either regime, and multiple co-trained peers can still operate off-policy if they supervise each other on fixed datasets. In practice:

- Offline + Off-Policy: Classical teacher-student distillation with precomputed teacher outputs.

- Offline + On-Policy: Modern OPD with a frozen teacher scoring student rollouts.

- Online + Off-Policy: Deep Mutual Learning on shared minibatches.

- Online + On-Policy: Co-trained models supervising one another on their own generated trajectories.

-

This separation is conceptually important because most recent advances in LLM post-training, including Generalized Knowledge Distillation, On-Policy Self-Distillation, and Multi-Teacher On-Policy Distillation, use frozen teachers and are therefore offline in teacher update pattern while simultaneously on-policy in trajectory generation.

Relationship Between Offline/Online and Off-Policy/On-Policy Distillation

-

Offline and online distillation are related to, but distinct from, off-policy and on-policy distillation. Offline versus online describes the training-time relationship between teacher and student: frozen teacher versus concurrently trained teacher or peers. Off-policy versus on-policy describes the trajectory source: external data or teacher trajectories versus student-generated rollouts.

-

Thus, classical offline KD is usually off-policy, because the student trains on fixed human, dataset, or teacher trajectories. However, online distillation can still be off-policy if peers exchange predictions on fixed batches, and offline distillation can be on-policy if a frozen teacher scores rollouts generated by the current student. This is exactly the setup used in on-policy LLM distillation: the teacher can remain frozen, but the data distribution changes because trajectories are sampled from the student.

-

A useful taxonomy is therefore two-dimensional:

| Axis | Main options | What it determines |

|---|---|---|

| Teacher update pattern | Offline, online, semi-online | Whether the teacher is frozen, co-trained, or partially adapted |

| Trajectory source | Off-policy, on-policy | Whether sequences come from datasets/teachers or from the student |

| Target type | Hard, soft, feature, preference, reward-like | Whether supervision is tokens, logits, hidden states, preferences, or dense advantages |

| Teacher identity | External, self, multi-teacher, peer ensemble | Whether knowledge comes from another model, the same model, several models, or co-learners |

Off-Policy and On-Policy Distillation for Autoregressive LLMs

-

For autoregressive LLMs, the most important modern distinction is not only what is matched, but where the trajectories come from. Off-policy distillation trains the student on trajectories produced by a teacher, a dataset, or another external policy. On-policy distillation trains the student on its own rollouts and asks the teacher to score the student’s actual visited states.

-

This distinction is central because autoregressive errors compound: a student that deviates early at inference may enter contexts it never saw during fixed-dataset distillation. On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024) formalizes this as Generalized Knowledge Distillation, using teacher feedback on student-generated sequences to reduce train-inference mismatch.

-

The following figure (source) shows the distinction between off-policy and on-policy distillation: off-policy training uses teacher-generated completions, whereas on-policy training samples the student’s own rollouts and evaluates those exact rollouts with the teacher.

Divergence Choice: Forward KL, Reverse KL, and JSD

-

The loss direction matters. Forward KL,

\[D_{KL}(p_T \,\Vert\, p_S) =\sum_x p_T(x)\log\frac{p_T(x)}{p_S(x)}\]- is teacher-weighted and tends to be mean-seeking, penalizing the student for missing teacher modes. Reverse KL,

- is student-weighted and tends to be mode-seeking, penalizing tokens the student actually proposes when the teacher assigns them low probability. The TRL writeup Distilling 100B+ Models 40x Faster with TRL highlights this engineering distinction because top-\(k\) approximations differ depending on whether top tokens are selected from the teacher or the student.

-

The following figure shows forward KL and reverse KL, including their different weighting behavior and their mean-seeking versus mode-seeking tendencies.

Self-Distillation and On-Policy Self-Distillation

-

Self-distillation is a related family in which the teacher is not a separate larger model. In older usage, it can mean training a model from its own predictions, from earlier checkpoints, or from an ensemble of itself. In newer LLM reasoning work, self-distillation can be on-policy and context-conditioned: Self-Distilled Reasoner: On-Policy Self-Distillation for Large Language Models by Zhao et al. (2026) uses one model as both student and teacher, where the teacher view receives privileged information such as a verified solution while the student view sees only the problem.

-

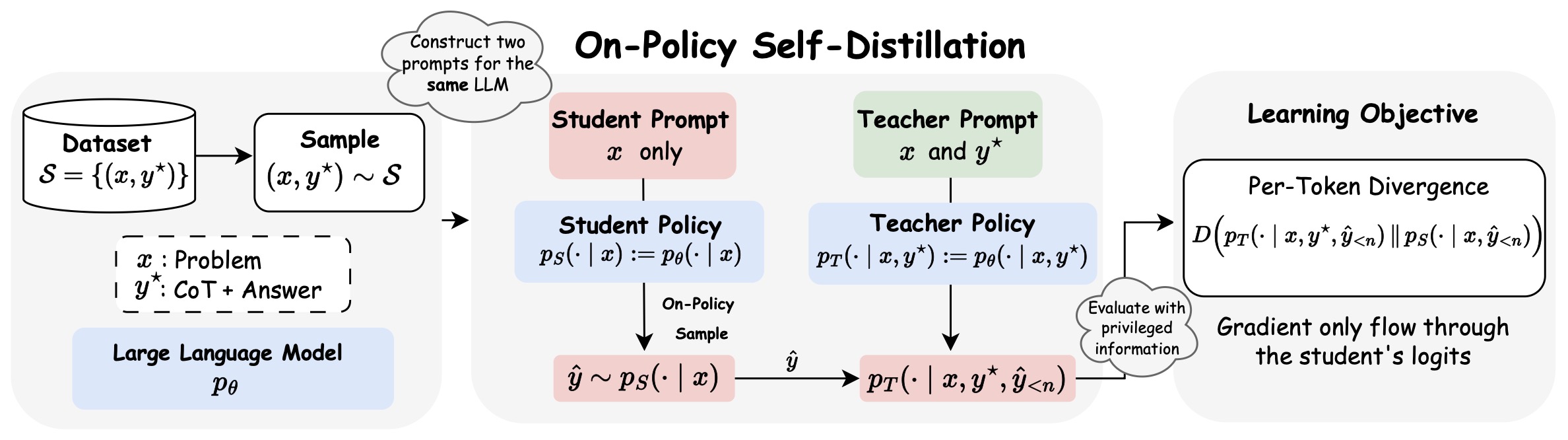

The following figure shows On-Policy Self-Distillation (OPSD), where the same model defines a student policy conditioned only on the problem and a teacher policy conditioned on privileged solution information. Given a reasoning dataset \(\mathcal{S}=\left\{\left(x_i, y_i^{\star}\right)\right\}_{i=1}^N\), we instantiate two policies from the same LLM: a student policy \(p_S(\cdot \mid x)\) and a teacher policy \(p_T\left(\cdot \mid x, y^{\star}\right)\). The student generates an on-policy response \(\hat{y} \sim p_S(\cdot \mid x)\). Both policies then evaluate this trajectory to produce next-token distributions \(p_S\left(\cdot \mid x, \hat{y}_{<n}\right)\) and \(p_T\left(\cdot \mid x, y^{\star}, \hat{y}_{<n}\right)\) at each step \(n\). The learning objective minimizes the per-token divergence \(D\left(p_T \,\Vert\, p_S\right)\) along the student’s rollout. The divergence here can be forward KL, reverse KL or JSD. Crucially, gradients backpropagate only through the student’s logits, allowing the model to self-distill.

Distillation as Synthetic Data and Post-Training Infrastructure

- A newer way to understand distillation is as part of the broader synthetic-data and post-training toolkit. The RLHF Book’s chapter Synthetic Data describes distillation as both a data engine, where stronger models generate completions, critiques, preferences, or filters, and a skill-transfer method, where a stronger model’s capabilities are transferred into a weaker model. The same chapter frames the path from offline KD to on-policy distillation as a move from static teacher-generated data toward student-sampled trajectories with dense teacher feedback.

Distillation and Reinforcement Learning

-

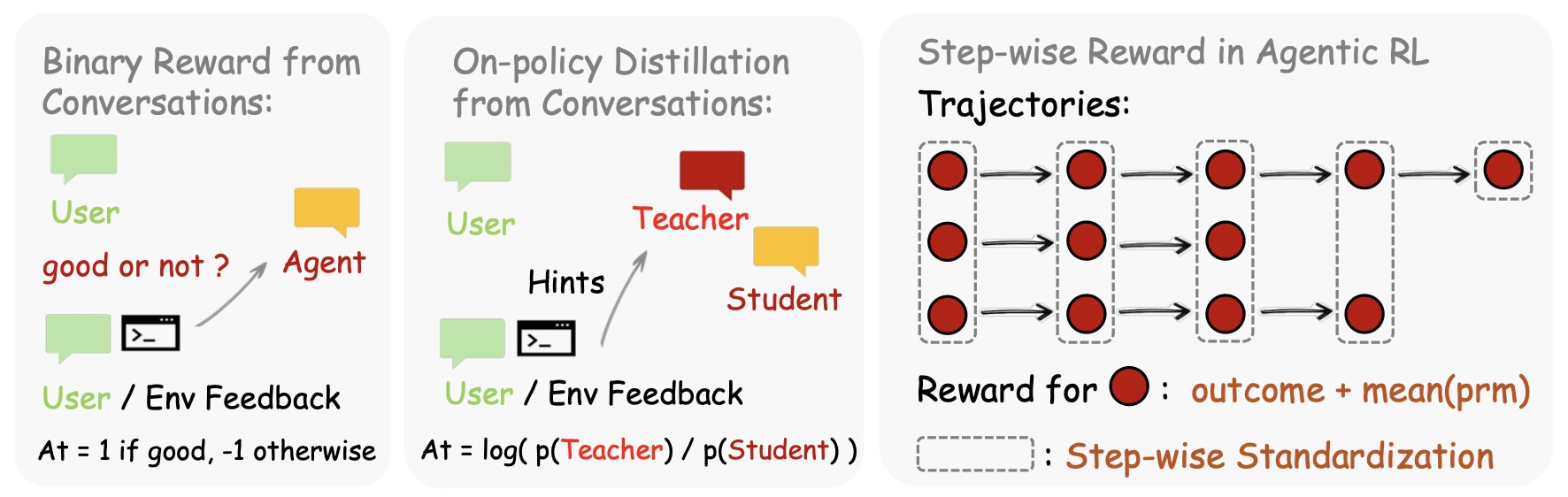

This connection also clarifies why distillation and reinforcement learning are now tightly linked. RL with verifiable rewards provides on-policy training but usually sparse scalar feedback, while OPD provides dense token-level feedback over on-policy trajectories. The RLHF Book expresses this bridge by treating the OPD token-level signal as an advantage-like term:

\[A_t^{\mathrm{OPD}} =\log \pi_T(a_t \mid s_t) - \log \pi_\theta(a_t \mid s_t)\]- where sampled tokens that the teacher rates above the student receive positive advantage, and sampled tokens the teacher rates below the student receive negative advantage.

-

Recent papers make this RL–distillation connection more explicit. Reinforcement Learning via Self-Distillation by Hübotter et al. (2026) introduces Self-Distillation Policy Optimization, which converts rich textual feedback such as runtime errors or judge comments into dense learning signals without an external teacher. Learning beyond Teacher: Generalized On-Policy Distillation with Reward Extrapolation by Yang et al. (2026) argues that OPD is a special case of dense KL-constrained RL and generalizes it with a flexible reference model and reward scaling. Scaling Reasoning Efficiently via Relaxed On-Policy Distillation by Ko et al. (2026) interprets teacher–student log-likelihood ratios as token rewards and introduces relaxed OPD techniques for stability.

-

The relationship between distillation and RL is especially important for reasoning, coding, and agentic systems. Self-Distilled RLVR by Yang et al. (2026) argues that privileged self-distillation alone can leak information and destabilize long training, so it uses self-distillation to determine fine-grained update magnitudes while retaining RLVR for update direction. Unifying Group-Relative and Self-Distillation Policy Optimization via Sample Routing by Li et al. (2026) routes correct samples to GRPO-style reward-aligned RL and failed samples to self-distillation correction, combining sparse correctness signals with dense token-level supervision. OpenClaw-RL: Train Any Agent Simply by Talking by Wang et al. (2026) extends this idea to agent interactions, using next-state signals such as user replies, tool outputs, terminal states, and GUI changes as both scalar feedback and hindsight-guided OPD supervision.

Multi-Domain Post-Training and Capability Consolidation

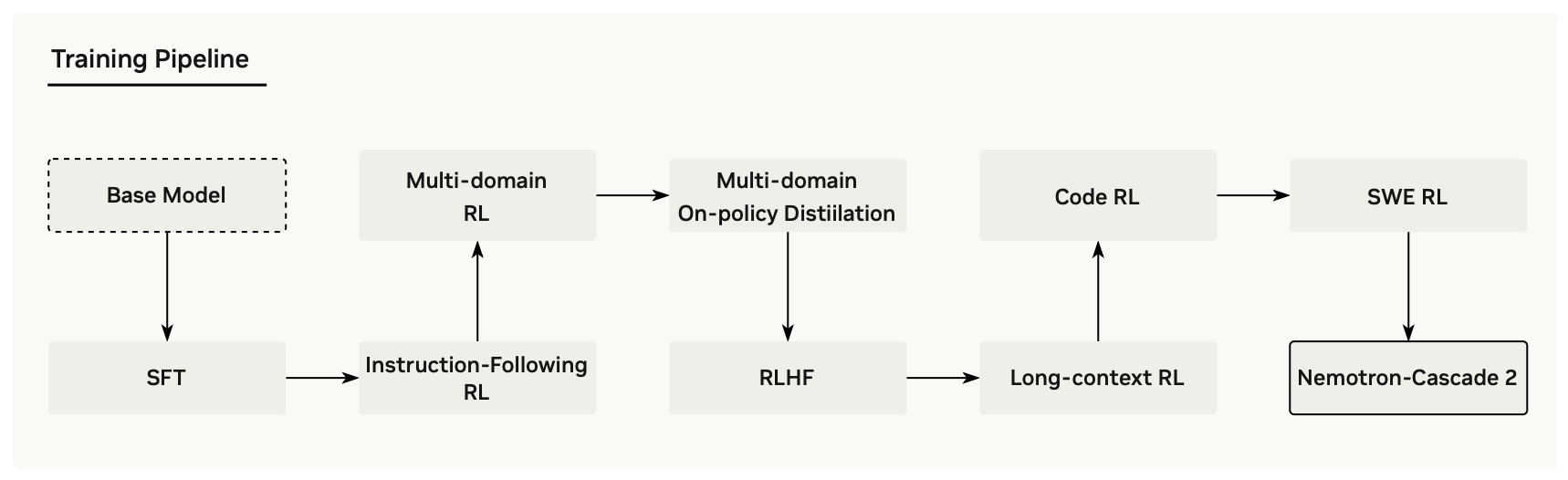

- In multi-domain post-training, distillation also functions as a capability consolidation tool after or during RL. Nemotron-Cascade 2: Post-Training LLMs with Cascade RL and Multi-Domain On-Policy Distillation by Yang et al. (2026) uses multi-domain OPD from the strongest intermediate teacher models to recover benchmark regressions and sustain gains after broader Cascade RL.

- Aligning Language Models from User Interactions by Kleine Buening et al. (2026) uses user follow-up messages as hindsight context for self-distillation, updating the model toward the behavior it would have produced after seeing the user’s correction or clarification. Informal discussion around this trend also appears in Cameron R. Wolfe’s X posts on multi-teacher OPD and the utility of combining specialist teachers, including this MOPD discussion thread.

Implementation View

-

In implementation terms, modern LLM distillation usually requires four decisions: the source of trajectories, the teacher signal, the divergence or surrogate loss, and the systems design for computing log-probabilities. The Thinking Machines post On-Policy Distillation frames on-policy distillation as combining the relevance of RL with the dense per-token signal of distillation: the student samples its own trajectories, while the teacher provides token-level feedback rather than a sparse sequence-level reward.

-

The following figure shows an intuitive chess analogy for on-policy distillation: rather than merely observing expert games or receiving only win/loss feedback, the learner receives dense move-level feedback on its own play.

Primer Roadmap

- The rest of the primer covers the major types of distillation in detail: classical soft-label distillation, hard-label and sequence-level distillation, representation and attention distillation, task-specific versus task-agnostic distillation, offline and online distillation, off-policy distillation, on-policy distillation, self-distillation, on-policy self-distillation, multi-teacher on-policy distillation, and the newer RL–distillation hybrid family that treats teacher log-probability gaps, hindsight feedback, or self-teacher contexts as dense policy-optimization signals.

Foundations

- Classical knowledge distillation establishes the core teacher–student framework that underlies all subsequent variants, including offline distillation, online distillation, off-policy distillation, on-policy distillation, self-distillation, and modern reinforcement learning hybrids. The central insight is that a model can learn not only from hard labels, but from the richer probability distribution produced by a stronger teacher or peer model. This section introduces the mathematical foundations, temperature scaling, divergence choices, early extensions, and the distinction between fixed-teacher and co-trained-teacher settings that made distillation a general-purpose model compression and capability transfer technique.

Teacher–Student Formulation

-

The classical formulation of distillation considers two models: a teacher \(p_T\), typically large and high-performing, and a student \(p_S^\theta\), typically smaller or more efficient. The objective is to transfer the teacher’s behavior into the student while reducing computational cost or improving specialization.

-

This paradigm was formalized in Distilling the Knowledge in a Neural Network by Hinton et al. (2015), which introduced the idea that the teacher’s soft output probabilities encode richer information than hard labels, revealing inter-class similarities. The student is trained to match these soft distributions rather than only the argmax class.

-

In the original classical setting, the teacher is usually fixed before the student is trained. This corresponds to offline distillation: the teacher distribution is stationary, and the student learns from a stable reference model.

-

Online distillation relaxes this assumption. In methods such as Deep Mutual Learning by Zhang et al. (2017), multiple peer models learn collaboratively and teach one another during training, so there may be no single pretrained superior teacher and no fixed teacher distribution.

-

For classification or token prediction, the core offline teacher–student loss is typically:

\[\mathcal{L}_{KD}(\theta) =\mathbb{E}_{x \sim \mathcal{D}} \left[ D\left( p_T(\cdot \mid x) ,\Vert, p_S^\theta(\cdot \mid x) \right) \right]\]- where \(D\) is a divergence, most commonly forward KL.

-

For online or mutual distillation, the same form can be generalized by replacing the single frozen teacher \(p_T\) with one or more evolving peers \(p_j^{\theta_j}\):

\[\mathcal{L}_{i}(\theta_i) =\mathcal{L}_{\text{task}}(\theta_i) +\lambda \sum_{j\neq i} D\left( p_j^{\theta_j}(\cdot \mid x) ,\Vert, p_i^{\theta_i}(\cdot \mid x) \right)\]- where model \(i\) learns from peer models \(j\) while also updating its own parameters. This captures the essential shift from one-way offline transfer to reciprocal online transfer.

-

In practice, distillation is often combined with supervised learning:

\[\mathcal{L}(\theta) =\alpha \mathcal{L}_{CE}(\theta) +(1 - \alpha)\mathcal{L}_{KD}(\theta)\]- where \(\mathcal{L}_{CE}\) is cross-entropy with ground-truth labels and \(\alpha\) balances teacher imitation and label supervision.

Temperature Scaling and Soft Targets

- A central idea in classical distillation is temperature scaling. The teacher logits \(z_i\) are softened using a temperature \(T > 1\):

-

Higher temperatures produce smoother distributions, making low-probability classes more visible. This helps the student learn nuanced relationships that are otherwise hidden in one-hot labels.

-

The distillation loss then becomes:

-

The factor \(T^2\) ensures gradient magnitudes remain stable when scaling logits.

-

In offline distillation, temperature is typically applied to a frozen teacher’s logits. In online distillation, temperature may be applied to each peer model’s logits before exchanging predictions, helping prevent mutual learning from collapsing too quickly into overconfident agreement.

-

Implementation detail: in large vocabulary settings such as LLMs, computing full softmax distributions is expensive. In practice, systems often approximate the loss using top-\(k\) tokens from either the teacher or student distribution, depending on whether forward or reverse KL is used.

Token-Level Distillation in Autoregressive Models

- For language models, distillation is applied at the token level. Given an input sequence \(x\) and generated tokens \(y = (y_1, \dots, y_n)\), both teacher and student define conditional distributions:

- The distillation objective aggregates per-token divergence:

-

This formulation is explicitly described in On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024), where distillation is framed as minimizing divergence between teacher and student token distributions along sequences.

-

Offline token-level distillation usually evaluates the teacher and student on a fixed sequence distribution, such as human-written outputs, teacher-generated outputs, or cached synthetic data. This is simple and stable, but it can create a gap between training prefixes and inference prefixes.

-

Online token-level distillation allows the supervising distribution to change during training. In peer-learning settings, each model may provide token-level probabilities to other models on shared batches; in more advanced LLM systems, periodically refreshed checkpoints or peer models can serve as evolving teachers.

-

A key implication is that the quality of training depends heavily on the distribution of prefixes \(y_{<t}\) encountered during training, which motivates the distinction between off-policy and on-policy methods discussed later.

Divergence Choices and Their Effects

-

Different divergences induce different behaviors in the student:

-

Forward KL:

\[D_{KL}(p_T ,\Vert, p_S)\]- This penalizes the student for assigning low probability to tokens the teacher considers likely. It leads to mean-seeking behavior, encouraging coverage of all teacher modes.

-

Reverse KL:

\[D_{KL}(p_S ,\Vert, p_T)\]- This penalizes tokens the student produces that the teacher considers unlikely. It leads to mode-seeking behavior, focusing on dominant modes.

-

Jensen-Shannon Divergence (JSD):

\[D_{JSD}(p_T ,\Vert, p_S) =\beta D_{KL}\left(p_T ,\Vert, m\right) +(1 - \beta) D_{KL}\left(p_S ,\Vert, m\right)\]- where \(m = \beta p_T + (1 - \beta)p_S\). JSD interpolates between forward and reverse KL and is bounded, which can improve stability.

-

-

Practical insight: forward KL is often used in classical supervised KD, while reverse KL is frequently used in on-policy distillation because it aligns naturally with sampling from the student distribution.

-

In offline distillation, divergence selection primarily controls how the student approximates a fixed teacher. In online distillation, divergence selection also affects training dynamics among co-evolving models: overly strong agreement losses can reduce diversity too early, while weaker or temperature-smoothed agreement can preserve complementary learning signals for longer.

Supervised Distillation and Sequence-Level Distillation

-

Two important classical variants are widely used:

-

Supervised (logit-level) distillation:

\[\mathcal{L}_{SD} =\mathbb{E}_{(x,y)} \left[ D_{KL}\big(p_T ,\Vert, p_S\big)(y \mid x) \right]\]- This provides dense supervision at every token, leveraging the full distribution rather than only correct labels.

-

Sequence-level distillation:

-

Introduced in Sequence-Level Knowledge Distillation by Kim and Rush (2016), this replaces ground-truth outputs with teacher-generated sequences. The student is trained via standard likelihood:

\[\mathcal{L}_{SeqKD} =\mathbb{E}_{x} \left[-\log p_S(y_T \mid x) \right]\]- where \(y_T \sim p_T(\cdot \mid x)\).

-

This simplifies the target distribution, often making learning easier but discarding distributional richness.

-

-

-

Both supervised logit-level distillation and sequence-level distillation are most commonly implemented as offline methods: a frozen teacher labels fixed data or generates synthetic targets, and the student is trained afterward.

-

Online versions are possible when teacher outputs are generated by co-trained peers or periodically refreshed teachers rather than by a static teacher. In such cases, the same supervised or sequence-level objective can be used, but the source distribution evolves during optimization.

Representation and Intermediate-Layer Distillation

-

Classical distillation need not operate solely on output probabilities. Representation distillation aligns hidden states, attention maps, and embeddings.

-

DistilBERT: a distilled version of BERT by Sanh et al. (2019) combines three losses:

-

DistilBERT loss components: masked language modeling loss, distillation loss on softened logits, cosine embedding loss on hidden representations.

-

This multi-objective approach preserves both output behavior and internal representations, demonstrating that distillation can transfer structural knowledge in addition to token probabilities.

-

-

Subsequent work has extended this principle to:

- Representation-alignment targets: attention map matching, value and key projection matching, layer-wise feature regression, contrastive representation alignment.

-

These techniques are especially useful when the student architecture differs substantially from the teacher.

-

In offline representation distillation, the teacher’s hidden states can be cached or computed live from a frozen teacher. In online representation distillation, peers may align intermediate representations during co-training, but this is more architecture-sensitive because hidden-state dimensions, layer counts, and attention structures must be compatible or projected into a shared space.

Distillation as Synthetic Data Generation

-

A complementary perspective, emphasized in the RLHF Book chapter Synthetic Data, is that distillation is also a structured data-generation process. A teacher can produce:

-

Synthetic supervision artifacts: answers, chain-of-thought traces, critiques, preference labels, filtered examples.

-

The student then trains on these outputs either as hard targets or as soft distributions.

-

-

This viewpoint broadens distillation from model compression to a general capability-transfer mechanism. In modern LLM pipelines, generating high-quality synthetic reasoning traces often precedes more advanced on-policy or reinforcement learning stages.

-

In offline synthetic-data distillation, the generated examples are usually fixed before student training or regenerated in separate rounds. In online synthetic-data distillation, examples, critiques, or peer labels may evolve as teachers, students, or self-improvement loops change during training.

Limitations of Classical Distillation

-

Despite its effectiveness, classical distillation suffers from several structural limitations:

-

Distribution mismatch: The student is trained on fixed trajectories, such as ground-truth or teacher-generated sequences, but at inference it generates its own tokens. Errors compound because it encounters states not seen during training. This issue is highlighted in imitation learning literature and explicitly discussed in On-Policy Distillation of Language Models.

-

Teacher bias and mode collapse: Forward KL encourages the student to cover all teacher modes, sometimes leading to overly smooth or low-confidence outputs.

-

Capacity mismatch: If the student cannot represent the teacher distribution, minimizing forward KL may produce unrealistic samples or unstable behavior.

-

Data inefficiency: Off-policy distillation may waste training effort on trajectories the student would never generate, reducing practical efficiency.

-

Teacher staleness in offline KD: A frozen teacher cannot adapt to the student’s changing failure modes, which can limit the usefulness of teacher feedback late in training.

-

Non-stationarity in online KD: A co-trained or evolving teacher can provide fresher supervision, but the target distribution changes over time, making optimization and reproducibility harder. Deep Mutual Learning by Zhang et al. (2017) shows that collaboratively trained peers can outperform a static-teacher setup, but the method also shifts distillation from a simple one-way transfer problem into a coupled multi-model optimization problem.

-

Implementation Considerations

-

In modern LLM systems, classical distillation requires careful engineering:

-

Log-probability extraction: The teacher must provide token-level log-probabilities. This is often done via a separate inference server, for example vLLM-based systems, with batched requests and compressed logprob transmission.

-

Top-\(k\) approximation: Full-vocabulary KL is expensive. Approximations using top-\(k\) tokens reduce memory and bandwidth requirements, especially for large vocabularies of roughly \(100{,}000\) tokens or more.

-

Batching and caching: Efficient pipelines buffer student generations and batch teacher evaluations to amortize cost, enabling distillation even from 100B+ models at scale.

-

Hybrid objectives: Many systems combine supervised fine-tuning, distillation, and reinforcement learning signals in a single pipeline.

-

Offline execution pattern: Offline KD usually separates teacher inference from student optimization. Teacher completions, logits, hidden states, or labels can be precomputed, cached, audited, and reused across multiple student runs.

-

Online execution pattern: Online KD requires coordination among multiple co-trained models or periodically refreshed teachers. This adds communication overhead, synchronization complexity, and non-stationary targets, but it can provide more adaptive supervision.

-

Semi-online compromise: Semi-online systems periodically refresh teacher checkpoints or add shadow teachers while preserving some stability of offline KD. Shadow Knowledge Distillation: Bridging Offline and Online Knowledge Transfer by Li et al. (2022) studies this intermediate regime and frames it as a bridge between static offline transfer and fully online knowledge exchange.

-

Offline Distillation

-

Offline distillation is the classical and historically dominant form of knowledge distillation. In offline distillation, the teacher model is trained beforehand and then frozen. The student is subsequently optimized to imitate this fixed teacher using either precomputed teacher outputs or teacher evaluations generated during training. Because the teacher does not change, the supervision signal is stationary, which makes offline distillation stable, reproducible, and comparatively simple to implement.

-

Most of the literature traditionally referred to as “knowledge distillation” implicitly assumes an offline setting. Distilling the Knowledge in a Neural Network by Hinton et al. (2015) is the canonical example: a pretrained ensemble or large model produces softened probability distributions that supervise a smaller student. DistilBERT by Sanh et al. (2019) similarly uses a frozen BERT teacher to train a compact transformer.

Core Definition

-

Let \(p_T\) denote a pretrained teacher and \(p_S^\theta\) the student. Offline distillation optimizes:

\[\mathcal{L}_{\text{offline}}(\theta) =\mathbb{E}_{(x,y)\sim\mathcal{D}} \left[ D\left( p_T(\cdot \mid x, y_{<t}) \,\Vert\, p_S^\theta(\cdot \mid x, y_{<t}) \right) \right]\]- where:

- \(\mathcal{D}\) is a fixed dataset.

- \(p_T\) is frozen throughout training.

- \(D\) is typically forward KL, reverse KL, JSD, or cross-entropy.

- where:

-

The defining property is that the teacher parameters remain constant:

\[\nabla_\phi \mathcal{L}_{\text{offline}} = 0\]- where \(\phi\) denotes teacher parameters.

Relationship to Off-Policy and On-Policy Distillation

-

Offline distillation and off-policy distillation are closely related but not identical concepts.

- Offline vs. online describes whether the teacher is frozen or co-trained.

- Off-policy vs. on-policy describes where trajectories come from.

-

Most offline distillation is also off-policy, because the student trains on fixed human or teacher-generated sequences. However, offline distillation can also be on-policy if a frozen teacher evaluates rollouts generated by the current student. This is precisely the setup used in many modern on-policy LLM distillation methods, including On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024), where the teacher remains fixed but the trajectory distribution changes over time.

-

Thus, “offline” refers to teacher dynamics, while “on-policy” refers to data dynamics.

Common Forms of Offline Distillation

-

Offline distillation encompasses many of the most widely used distillation approaches:

-

Soft-Label Distillation: The teacher provides full probability distributions over classes or tokens, often softened with temperature scaling.

-

Sequence-Level Distillation: The teacher generates complete outputs that become training targets, as introduced in Sequence-Level Knowledge Distillation by Kim and Rush (2016).

-

Representation Distillation: The student matches hidden states, embeddings, attention maps, or intermediate activations.

-

Preference and Reward Distillation: The teacher provides rankings, scalar rewards, or critiques rather than direct logits.

-

Precomputed vs. Live Teacher Querying: Offline distillation does not require that teacher outputs be fully precomputed.

-

Precomputed offline distillation: Teacher outputs are generated once and stored.

-

Live offline distillation: The frozen teacher is queried during training, but its parameters remain unchanged.

-

Both are considered offline because the teacher itself is static.

-

-

Advantages of Offline Distillation

-

Stability: The target distribution does not change during training.

-

Reproducibility: Repeated runs see identical teacher behavior.

-

Engineering simplicity: Teacher and student optimization are decoupled.

-

Caching efficiency: Teacher outputs can be stored and reused.

-

Scalability: Large teachers can supervise many student experiments.

Limitations of Offline Distillation

-

Teacher staleness: The teacher cannot adapt to the student’s evolving weaknesses.

-

Potential distribution mismatch: If training trajectories are fixed, the student may not learn to recover from its own mistakes.

-

Storage requirements: Precomputing token-level distributions can be expensive.

-

Capability ceiling: The student is fundamentally bounded by the teacher’s performance and biases.

Semi-Online Variants

- Some systems partially relax the static-teacher assumption by periodically updating a teacher snapshot or ensemble. Shadow Knowledge Distillation: Bridging Offline and Online Knowledge Transfer by Li et al. (2022) studies this intermediate regime and argues that part of the online distillation advantage comes from reversed student-to-teacher transfer rather than only from simultaneous training.

Offline Distillation in Modern LLM Training

-

In contemporary LLM pipelines, offline distillation is widely used for compressing frontier models into smaller deployable models, generating synthetic instruction and reasoning datasets, transferring capabilities after RL or alignment, and creating baseline models before on-policy fine-tuning.

-

The RLHF Book chapter Synthetic Data presents offline distillation as the first stage in a progression from static synthetic data generation to fully on-policy distillation and RL-integrated post-training.

Implementation Pattern

-

Select or train the teacher model: Begin with a strong model, ensemble, specialist checkpoint, or post-RL model whose behavior should be transferred into the student. In most offline settings, this teacher is already trained before the distillation run begins.

-

Freeze the teacher parameters: Keep the teacher fixed throughout student training. This ensures that the supervision distribution remains stationary and that repeated student runs can be compared cleanly.

-

Generate or query teacher outputs: Use the teacher to produce hard targets, soft probabilities, hidden-state targets, critiques, preferences, or reward-like annotations. These outputs may be generated once before training or queried live during student optimization.

-

Store targets or log-probabilities when useful: For large-scale systems, cache teacher completions, token IDs, top-\(k\) log-probabilities, embeddings, or preference labels so they can be reused across multiple student runs. Full-vocabulary logits are usually expensive, so practical systems often store compressed targets.

-

Train the student to match the teacher: Optimize the student with the appropriate matching loss, such as cross-entropy on teacher-generated tokens, KL divergence on teacher probabilities, MSE on hidden states, or a hybrid objective combining supervised and distillation losses.

-

Evaluate, diagnose, and iterate: Measure the student against task benchmarks, teacher agreement, latency, memory footprint, and regression suites. If the student underperforms, iterate by improving the teacher data, changing the divergence, adjusting temperature, increasing top-\(k\) coverage, or introducing on-policy rollouts.

Online Distillation

-

Online distillation generalizes the teacher-student paradigm by allowing the teacher signal to evolve during training rather than remain fixed. Instead of relying exclusively on a pretrained, frozen teacher, online distillation trains multiple models simultaneously and enables them to exchange knowledge throughout optimization. The supervision distribution is therefore non-stationary and adapts as the participating models improve.

-

The core motivation is that a fixed teacher may become stale relative to the student’s changing weaknesses and strengths. By allowing teachers and students to co-evolve, online distillation can provide more adaptive supervision, improve generalization, and in some cases outperform both conventional offline distillation and independently trained models.

-

The canonical example is Deep Mutual Learning by Zhang et al. (2017), which trains peer networks jointly and minimizes KL divergence between their predictive distributions. Each model acts simultaneously as both student and teacher, and all participants improve through reciprocal supervision. More recent approaches such as co-distillation and peer learning extend this idea to larger ensembles and distributed training systems.

Core Definition

-

Suppose there are \(K\) models with parameters \({\theta_k}_{k=1}^K\). For model \(i\), the online distillation objective can be written as:

\[\mathcal{L}_i(\theta_i) =\mathcal{L}_{\text{task}}(\theta_i) +\lambda \sum_{j \neq i} D\left( p_j(\cdot \mid x) \,\Vert\, p_i(\cdot \mid x) \right)\]-

where:

- \(\mathcal{L}_{\text{task}}\) is the primary supervised or reinforcement learning objective,

- \(D\) is a divergence such as KL or JSD,

- \(\lambda\) controls the strength of mutual supervision,

- all models update concurrently.

-

-

Unlike offline distillation, the teacher distributions \(p_j\) evolve throughout training:

\[\nabla_{\theta_j} \mathcal{L}_j \neq 0\]- for all participating models.

Relationship to Offline, Off-Policy, and On-Policy Distillation

-

Online versus offline describes whether the teacher changes during training. Off-policy versus on-policy describes where the training trajectories originate.

-

This yields four conceptually distinct combinations:

- Offline + Off-Policy: Classical KD using a frozen teacher and fixed teacher or dataset trajectories.

- Offline + On-Policy: Modern OPD, where a frozen teacher scores student-generated rollouts.

- Online + Off-Policy: Peer models exchange predictions on a fixed dataset or minibatch stream.

- Online + On-Policy: Multiple co-evolving models generate and score their own trajectories, potentially sharing rollouts and dense feedback.

-

Most historical online distillation methods are online and off-policy because they operate on shared minibatches. Emerging RL and LLM systems increasingly explore online and on-policy hybrids, where co-trained models evaluate trajectories sampled from their current policies.

Major Forms of Online Distillation

-

Mutual Learning: Each model teaches every other model, as in Deep Mutual Learning.

-

Co-Distillation: Large-scale training jobs periodically exchange predictions or checkpoints to improve convergence and robustness.

-

Peer Ensembles: Multiple comparable models learn jointly and average or vote on predictions during training.

-

Adaptive Teacher Distillation: A stronger model is periodically updated and continues to supervise one or more students.

-

Population-Based Distillation: A population of models with different objectives or hyperparameters exchanges knowledge during training.

Advantages of Online Distillation

-

Adaptive supervision: The teaching signal evolves with the models and can address newly emerging failure modes.

-

Improved generalization: Peer learning often reduces overconfidence and improves calibration.

-

No need for a single superior teacher: Comparable models can still benefit from teaching one another.

-

Regularization effects: Mutual agreement acts as a strong inductive bias.

-

Compatibility with distributed systems: Large training clusters can exchange logits or checkpoint summaries during optimization.

Limitations of Online Distillation

-

Higher system complexity: Multiple models must be trained simultaneously or synchronized periodically.

-

Non-stationary targets: The supervision distribution changes over time, which can complicate optimization.

-

Risk of consensus errors: If all participants share similar biases, they may reinforce incorrect behavior.

-

Compute overhead: Training several models jointly can be significantly more expensive than using a single frozen teacher.

Semi-Online and Hybrid Approaches

-

Many practical systems combine offline and online strategies:

-

Checkpoint refresh: A frozen teacher is periodically replaced by the latest strong checkpoint.

-

Teacher ensembles: A static teacher is supplemented with co-trained peers.

-

Shadow teachers: * Auxiliary teachers are updated asynchronously to provide fresher supervision.

-

-

Shadow Knowledge Distillation: Bridging Offline and Online Knowledge Transfer by Li et al. (2022) analyzes these intermediate approaches and shows that reversed student-to-teacher transfer contributes significantly to online distillation’s effectiveness.

Online Distillation in Modern LLM Training

-

Although most frontier LLM distillation remains offline, online principles appear increasingly often in:

- Multi-agent self-improvement systems,

- Self-play and debate frameworks,

- Checkpoint-based teacher refresh pipelines,

- Distributed co-training,

- Self-distillation with periodically updated teacher snapshots.

-

In large-scale post-training, a model may be supervised by:

- Specialist checkpoints trained on different domains,

- Recent versions of itself,

- Peer models in a shared optimization loop.

-

This blurs the boundary between online distillation, self-distillation, and multi-teacher distillation.

Implementation Pattern

-

Initialize multiple models or peers: Start with two or more models, which may differ in architecture, initialization, objective, or specialization.

-

Train each model on the primary objective: Each participant optimizes its own supervised, RL, or hybrid loss.

-

Exchange predictive distributions: At each step or periodically, models compute logits, hidden states, or critiques that are shared with other participants.

-

Compute mutual distillation losses: Each model matches one or more peer distributions using KL divergence, JSD, or related objectives.

-

Update all models concurrently: Gradients are applied to every participant, so each model acts as both teacher and student.

-

Synchronize or refresh when needed: In distributed systems, communication may occur asynchronously or at checkpoint boundaries rather than every minibatch.

-

Evaluate both individual and ensemble performance: Assess whether joint learning improves standalone models, ensemble behavior, and calibration.

Online Distillation in the Broader Distillation Taxonomy

-

Online distillation occupies the teacher-update axis of the distillation taxonomy. It complements rather than replaces distinctions such as:

- Off-policy versus on-policy,

- Single-teacher versus multi-teacher,

- External-teacher versus self-distillation,

- Supervised versus RL-integrated training.

-

Conceptually, online distillation is best understood as adaptive teacher evolution, while on-policy distillation is best understood as adaptive trajectory generation. Modern systems increasingly combine both.

Off-Policy Distillation

- Off-policy distillation is the classical and still most widely used form of distillation. The student is trained on trajectories generated by an external source, such as human-labeled datasets, teacher-generated completions, synthetic reasoning traces, or curated corpora, rather than on trajectories sampled from the student itself. This makes off-policy distillation simple, stable, and highly scalable, but also introduces the train–inference mismatch that motivates later on-policy methods.

Definition and Formal Objective

- Given a dataset of input-output pairs \(\mathcal{D} = {(x, y)}\), where outputs \(y\) may come from humans, a teacher model, or synthetic data pipelines, the student minimizes a divergence between teacher and student token distributions:

- This is the standard supervised distillation objective described in Distilling the Knowledge in a Neural Network by Hinton et al. (2015) and generalized to autoregressive sequence models in On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024).

Sources of Off-Policy Data

-

Off-policy data can be obtained from several sources:

-

Human-labeled datasets provide expert-written instruction responses, translations, preference annotations, and curated reasoning traces that serve as direct supervision targets.

-

Teacher-generated synthetic data is produced by stronger models that generate answers, chain-of-thought traces, critiques, or tool-use demonstrations intended to transfer specific capabilities.

-

Filtered synthetic corpora are created by generating multiple candidate outputs and retaining only those that score highly under verifiers, reward models, or additional teacher models.

-

Historical model outputs can be relabeled and refined using stronger models, allowing prior checkpoints or production logs to become training data for newer students.

-

-

The RLHF Book chapter Synthetic Data emphasizes that most modern post-training pipelines rely heavily on large-scale synthetic data generation before any reinforcement learning or on-policy distillation stage.

Sequence-Level Distillation

-

Sequence-level distillation is the most common off-policy method for LLMs.

-

Introduced in Sequence-Level Knowledge Distillation by Kim and Rush (2016), it trains the student on full teacher-generated outputs:

\[\mathcal{L}_{\text{SeqKD}} =\mathbb{E}_{x} \left[ - \log p_S(y_T \mid x) \right]\]-

where:

\[y_T \sim p_T(\cdot \mid x)\]

-

-

This approach often simplifies the target distribution by replacing multiple valid outputs with one teacher-selected response, which can make optimization substantially easier.

Logit Distillation

- In logit or soft-label distillation, the student matches the teacher’s full next-token distribution.

-

Compared with sequence-level distillation, this preserves uncertainty information, token similarities, and alternative plausible continuations.

-

This approach is especially effective when the teacher is much stronger and when the student has sufficient capacity to approximate the teacher distribution.

Synthetic Data Pipelines

-

A major modern use of off-policy distillation is as the final consumer of synthetic data pipelines.

-

A typical workflow proceeds through several stages:

-

Prompts are collected from benchmark datasets, user interactions, or automatically generated prompt sets designed to cover the target domains.

-

A strong teacher model generates one or more candidate completions for each prompt, often including intermediate reasoning traces or tool-use steps.

-

Reward models, verifiers, or additional teacher models evaluate the generated outputs to estimate correctness, helpfulness, and consistency.

-

The highest-quality outputs are selected, reranked, or filtered to remove low-confidence or incorrect examples.

-

The resulting dataset is stored as a reusable synthetic corpus that may include completions, chain-of-thought traces, verifier scores, and teacher log-probabilities.

-

The student model is trained on this curated dataset using sequence-level distillation, logit matching, or a hybrid objective.

-

-

Synthetic datasets may contain a wide range of artifacts, including:

-

Detailed chain-of-thought traces that expose intermediate reasoning steps and allow the student to imitate structured problem solving.

-

Verified code solutions that transfer programming ability while ensuring that generated programs pass unit tests or execution checks.

-

Critiques and revisions that teach the student how to diagnose and improve its own responses.

-

Tool-use transcripts that demonstrate how to call APIs, interpret outputs, and incorporate retrieved information into subsequent reasoning.

-

Preference annotations that encode relative quality judgments and can later support alignment or ranking objectives.

-

-

The RLHF Book highlights that this synthetic-data-to-distillation pipeline remains the dominant method for transferring capabilities from frontier models into smaller and more deployable students.

Advantages of Off-Policy Distillation

-

The training procedure is operationally simple because it closely resembles standard supervised fine-tuning on a fixed dataset.

-

Optimization is highly stable and reproducible because the same precomputed examples can be reused across runs.

-

Teacher inference is amortized efficiently, since outputs and log-probabilities are generated once and consumed many times.

-

The approach scales naturally to very large datasets and distributed training systems.

-

It integrates seamlessly with synthetic data generation pipelines that create specialized corpora for reasoning, coding, and instruction following.

Limitations: Distribution Mismatch

-

The central weakness of off-policy distillation is that the student is trained on trajectories it did not generate.

-

During inference, the student samples:

\[\hat{y} \sim p_S(\cdot \mid x)\]- which may diverge from teacher-generated sequences. Because each token conditions on previous tokens, small errors compound over long trajectories.

-

This problem is explicitly analyzed in On-Policy Distillation of Language Models and motivates Generalized Knowledge Distillation.

-

The Thinking Machines article On-Policy Distillation compares this to learning chess solely by watching grandmasters: one sees excellent play but not the board states produced by one’s own mistakes.

Behavioral Consequences

-

Off-policy students often exhibit:

-

Strong performance when their generated prefixes remain close to the trajectories seen during training.

-

Limited ability to recover from early mistakes that push generation into unfamiliar contexts.

-

Increased exposure bias, especially in long-horizon reasoning and agentic tasks.

-

Stylistic imitation of the teacher without fully reproducing the teacher’s underlying reasoning competence.

-

Overconfident predictions when trained primarily on single deterministic targets rather than full probability distributions.

-

Relationship to Reinforcement Learning

- Off-policy distillation and reinforcement learning differ in both trajectory source and feedback density, as indicated in the table below:

| Method | Trajectory source | Reward density |

|---|---|---|

| Off-policy KD | Teacher or dataset | Dense token-level |

| RLHF / RLVR | Student | Sparse sequence-level |

| On-policy distillation | Student | Dense token-level |

-

As a result, off-policy distillation is highly sample-efficient but less robust than on-policy approaches.

-

Many modern pipelines therefore follow a staged progression:

- Synthetic data is generated and filtered to create high-quality off-policy supervision.

- The student is trained through off-policy distillation to absorb the teacher’s broad capabilities.

- Reinforcement learning is applied to refine behaviors that are difficult to specify directly in the dataset.

- On-policy distillation is used to transfer the benefits of RL into a smaller or more efficient model.

-

The RLHF Book explicitly presents this progression as the “path to on-policy distillation.”

Engineering and Systems Considerations

-

Off-policy systems are operationally straightforward:

-

Teacher inference can be performed asynchronously and at large batch sizes, maximizing accelerator utilization.

-

Training examples may store token IDs, reasoning traces, verifier scores, and optionally top-\(k\) log-probabilities for later reuse.

-

The resulting synthetic datasets can be reused across many experiments and across multiple student architectures.

-

Student training proceeds independently and does not require synchronous communication with the teacher.

-

-

The primary costs arise from generating synthetic data, storing large corpora, and maintaining the filtering and verification infrastructure that ensures data quality.

When Off-Policy Distillation is Preferred

-

Off-policy distillation is the best choice when:

-

Simplicity and stability are more important than exact train–inference matching.

-

Large synthetic datasets are already available or can be generated economically.

-

Teacher inference can be amortized offline and reused across many experiments.

-

The student is unlikely to diverge substantially from the training distribution during deployment.

-

The primary goal is broad capability transfer rather than maximal robustness to self-generated errors.

-

-

It remains the dominant starting point for most practical distillation pipelines, even when more advanced on-policy or reinforcement learning stages are planned later.

On-Policy Distillation (OPD)

- On-policy distillation addresses the central limitation of off-policy methods by training the student on trajectories it actually generates, rather than on fixed datasets curated by humans or sampled from a teacher. By shifting supervision onto the student’s own state distribution, on-policy distillation substantially reduces exposure bias and compounding errors in autoregressive models. Conceptually, it combines the on-policy relevance of reinforcement learning with the dense, token-level feedback of knowledge distillation, and has emerged as one of the most important post-training primitives for reasoning models and capability transfer in modern LLM pipelines.

Core Idea and Formal Objective

- In on-policy distillation, the student first generates a rollout:

- The teacher then evaluates the exact same trajectory by assigning next-token probabilities conditioned on the student’s prefixes. The student is updated to reduce the divergence between its own token distributions and the teacher’s token distributions along this rollout:

- This formulation was introduced in On-Policy Distillation of Language Models: Learning from Self-Generated Mistakes by Agarwal et al. (2024), which frames distillation as an imitation learning problem in the style of DAGGER and demonstrates substantial gains over conventional supervised and sequence-level KD across summarization, translation, and mathematical reasoning.

Intuition: Learning from One’s Own Mistakes

-

The key advantage of on-policy distillation is that the student receives feedback precisely in the contexts it is most likely to encounter at inference time.

-

The Thinking Machines article On-Policy Distillation presents an intuitive analogy to chess. Instead of only observing expert games, or receiving a single win-or-loss signal after playing a full game, the student receives move-by-move evaluations of its own choices. This makes it possible to identify and correct the specific decisions that caused the rollout to go off track.

-

The following figure (source) shows a chess.com-style visualization in which each move in the learner’s own game is graded from blunder to brilliant, illustrating how on-policy distillation provides dense, token-level feedback over self-generated trajectories.

Generalized Knowledge Distillation (GKD)

-

Agarwal et al. introduce Generalized Knowledge Distillation (GKD), which unifies several forms of distillation under a single framework. At each training step, the algorithm may either:

-

Sample a trajectory from the student and obtain teacher supervision along that rollout.

-

Draw a trajectory from a fixed dataset, thereby mixing in traditional off-policy examples.

-

-

This mixture is controlled by a parameter \(\lambda \in [0,1]\), which specifies the fraction of training examples that are student-generated.

-

When \(\lambda = 0\), GKD reduces to standard supervised distillation. When \(\lambda = 1\), all training occurs on student-generated trajectories. Intermediate values provide a practical curriculum that combines the stability of offline supervision with the robustness benefits of on-policy training.

-

The following figure (source) shows that on-policy Generalized Knowledge Distillation significantly outperforms supervised fine-tuning, supervised KD, and sequence-level KD across summarization, translation, and mathematical reasoning tasks.

Choice of Divergence and Reward Interpretation

- Although forward KL remains theoretically valid, reverse KL is particularly well suited to on-policy training because the rollout is sampled from the student distribution. Under reverse KL, the student is penalized only for tokens it actually assigns probability to and that the teacher considers unlikely.

- This objective is naturally interpreted as a dense per-token reward:

-

Tokens that the teacher rates more highly than the student receive positive advantage, while tokens that the teacher considers worse than the student receive negative advantage. The multi-teacher OPD article Multi-Teacher On-Policy Distillation: A New Post-Training Primitive emphasizes that this makes reverse KL a particularly natural replacement for the advantage term in GRPO-style reinforcement learning.

-

The Hugging Face TRL writeup Distilling 100B+ Models 40x Faster with TRL similarly notes that reverse KL aligns cleanly with student-generated trajectories and requires only the teacher’s log-probabilities on sampled tokens rather than full-vocabulary distributions.

Distillation and Reinforcement Learning

-

One of the most important modern insights is that on-policy distillation can be understood as a dense, KL-constrained form of policy optimization.

-

The RLHF Book chapter Synthetic Data describes on-policy distillation as the natural progression after synthetic data generation and reinforcement learning. Reinforcement learning supplies on-policy trajectories but typically only sparse sequence-level rewards, whereas on-policy distillation provides token-level guidance from a stronger teacher over those same trajectories. Recent work strengthens this connection.

Reinforcement Learning via Self-Distillation

-

Reinforcement Learning via Self-Distillation by Hübotter et al. (2026) converts textual critiques, execution errors, and other rich feedback into dense token-level updates, showing that self-distillation can serve as a general policy optimization mechanism.

-

Implementation details:

-

The method constructs a teacher distribution conditioned on the original trajectory and a natural-language feedback string that explains what went wrong or how to improve.

-

Student trajectories are replayed under this feedback-augmented teacher context, allowing each token to receive targeted corrective supervision.

-

The same base model can be used as both student and teacher, eliminating the need for a separate external model.

-

The approach is particularly effective for coding and reasoning tasks where runtime errors and verifier messages provide highly informative textual signals.

-

-

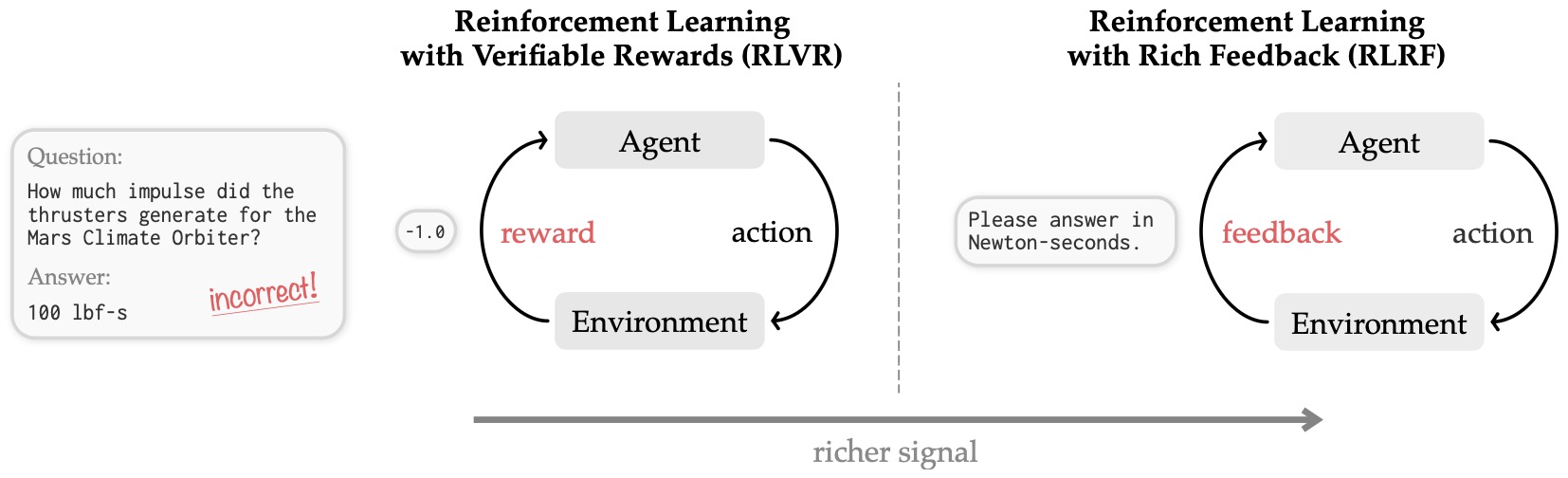

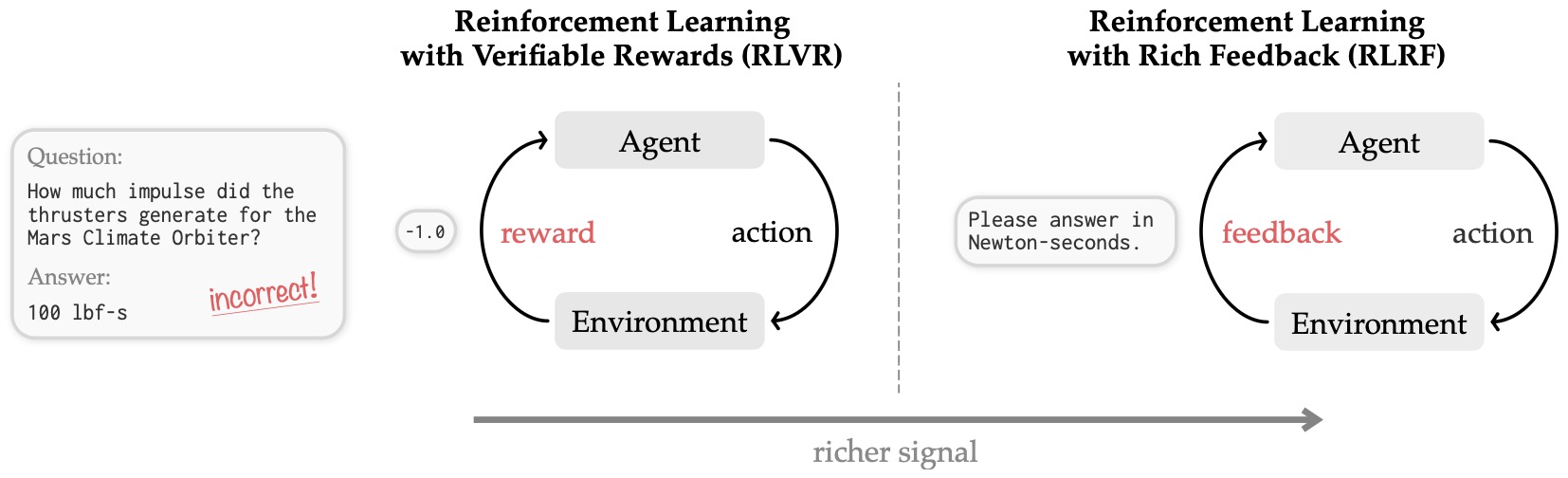

The following figure (source) shows a comparison of RLVR and RLRF settings. In Reinforcement Learning with Verifiable Rewards (RLVR), the agent learns from a scalar reward \(r\), which often acts as an information bottleneck by masking the underlying environment state. In contrast, Reinforcement Learning with Rich Feedback (RLRF) utilizes tokenized feedback. In other words, in the core self-distillation policy optimization setup, in which textual feedback is transformed into a dense teacher signal over the original student rollout. This provides a significantly richer signal than a scalar reward, as the feedback can encapsulate both the reward as well as detailed observations of the state (such as runtime errors from a code environment or feedback from an LLM judge).

Learning beyond Teacher: Generalized On-Policy Distillation with Reward Extrapolation

-

Learning beyond Teacher: Generalized On-Policy Distillation with Reward Extrapolation by Yang et al. (2026) introduces ExOPD (i.e., extrapolation OPD), which generalizes OPD by combining teacher imitation with explicit reward extrapolation, allowing the student to exceed the teacher rather than merely match it.

-

Implementation details:

-

A reference teacher policy provides dense token-level supervision, while an external reward signal estimates how much better or worse the current trajectory is than the teacher baseline.

-

The distillation loss is reweighted by reward-derived scaling factors so that trajectories outperforming the teacher receive amplified updates.

-

The framework supports interchangeable reference models, including external teachers, self-teachers, or moving-average checkpoints.

-

This design decouples “who provides dense supervision” from “who defines the ultimate objective,” enabling teacher-guided exploration beyond teacher quality.

-

-

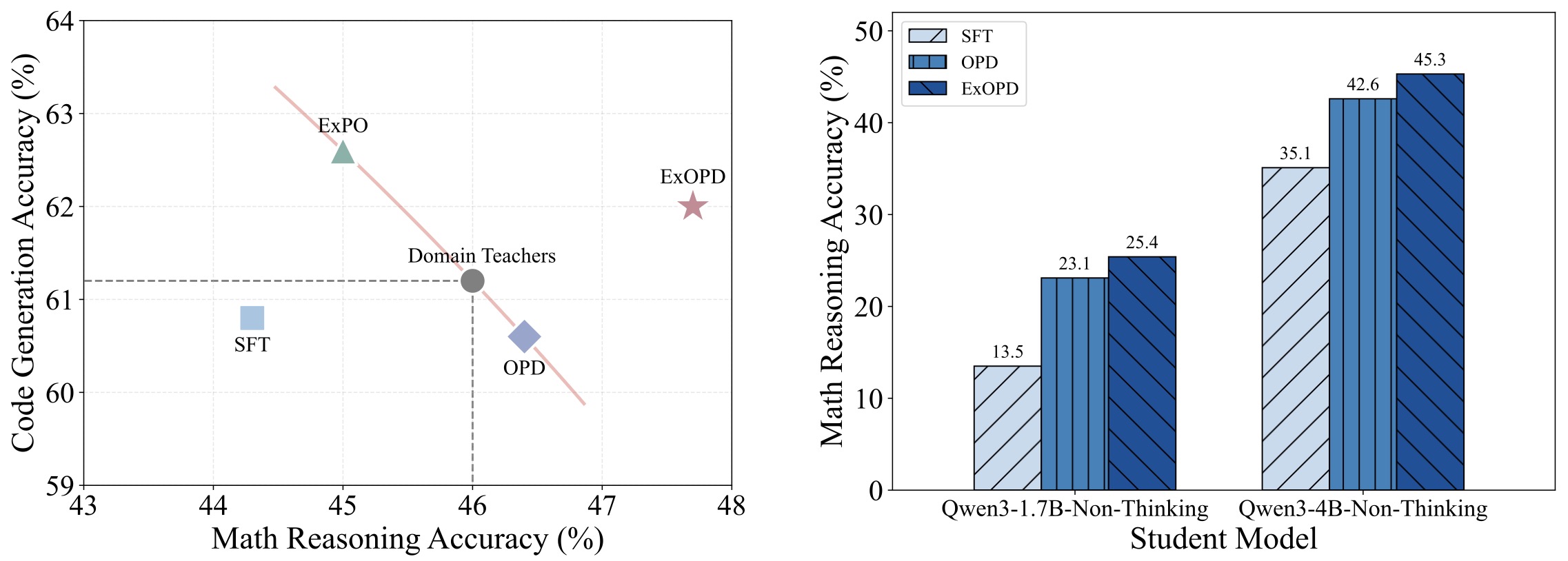

The following figure (source) shows the empirical effectiveness of ExOPD compared with off-policy distillation (SFT), standard OPD, and the weight-extrapolation method ExPO introduced in Model Extrapolation Expedites Alignment by Zheng et al. (2025) in multi-teacher and strong-to-weak distillation settings, with results averaged over 4 math reasoning and 3 code generation benchmarks. In multi-domain expert merging, ExOPD is the only method that yields a unified student that consistently outperforms all domain teachers; in strong-to-weak distillation, ExOPD significantly improves over standard OPD, with reward correction further boosting performance.

Scaling Reasoning Efficiently via Relaxed On-Policy Distillation

-

Scaling Reasoning Efficiently via Relaxed On-Policy Distillation by Ko et al. (2026) introduces REOPOLD, a relaxed on-policy distillation method designed to reduce over-imitation, improve stability, and scale reasoning training more efficiently.

-

Implementation details:

-

Token-level teacher–student log-likelihood ratios are treated as dense rewards, similar to reverse-KL advantages.

-

The authors relax strict imitation by clipping or tempering overly strong penalties on low-value tokens.

-

Partial rollouts and truncated reasoning traces are used to reduce compute while preserving the most informative supervision.

-

The approach is designed specifically for reasoning tasks where exact teacher imitation may be unnecessarily restrictive.

-

-

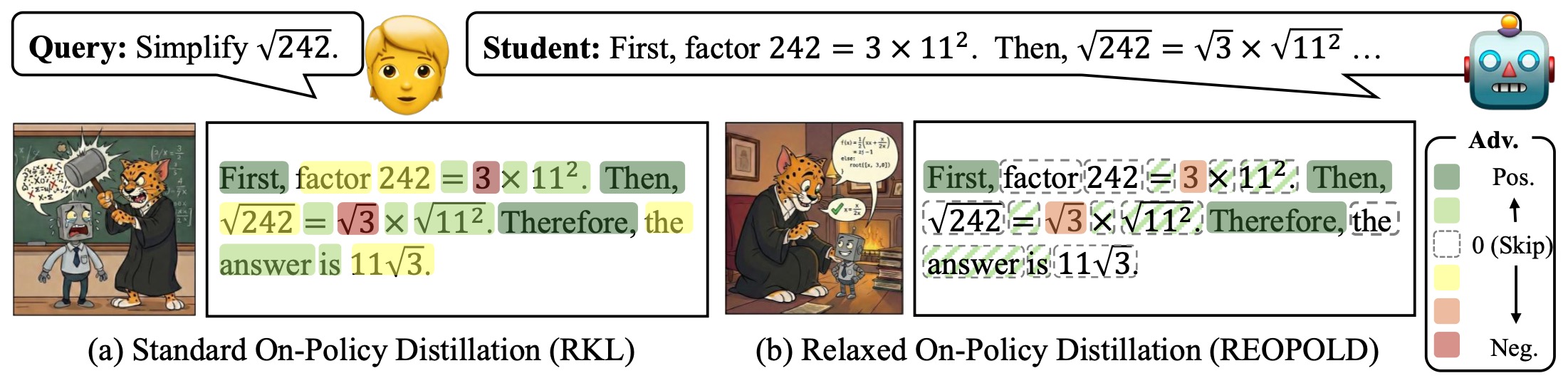

The following figure (source) shows an illustration of REOPOLD. While standard on-policy distillation often introduces instability and inefficiency by forcing the student to mimic the teacher excessively, REOPOLD fosters a more stable and effective learning environment. By establishing a formal connection between distillation and RL via a stop-gradient operation, REOPOLD uses teacher signals temperately and selectively. This filters out potentially harmful signals and prevents the student from deviating excessively from its original distribution.

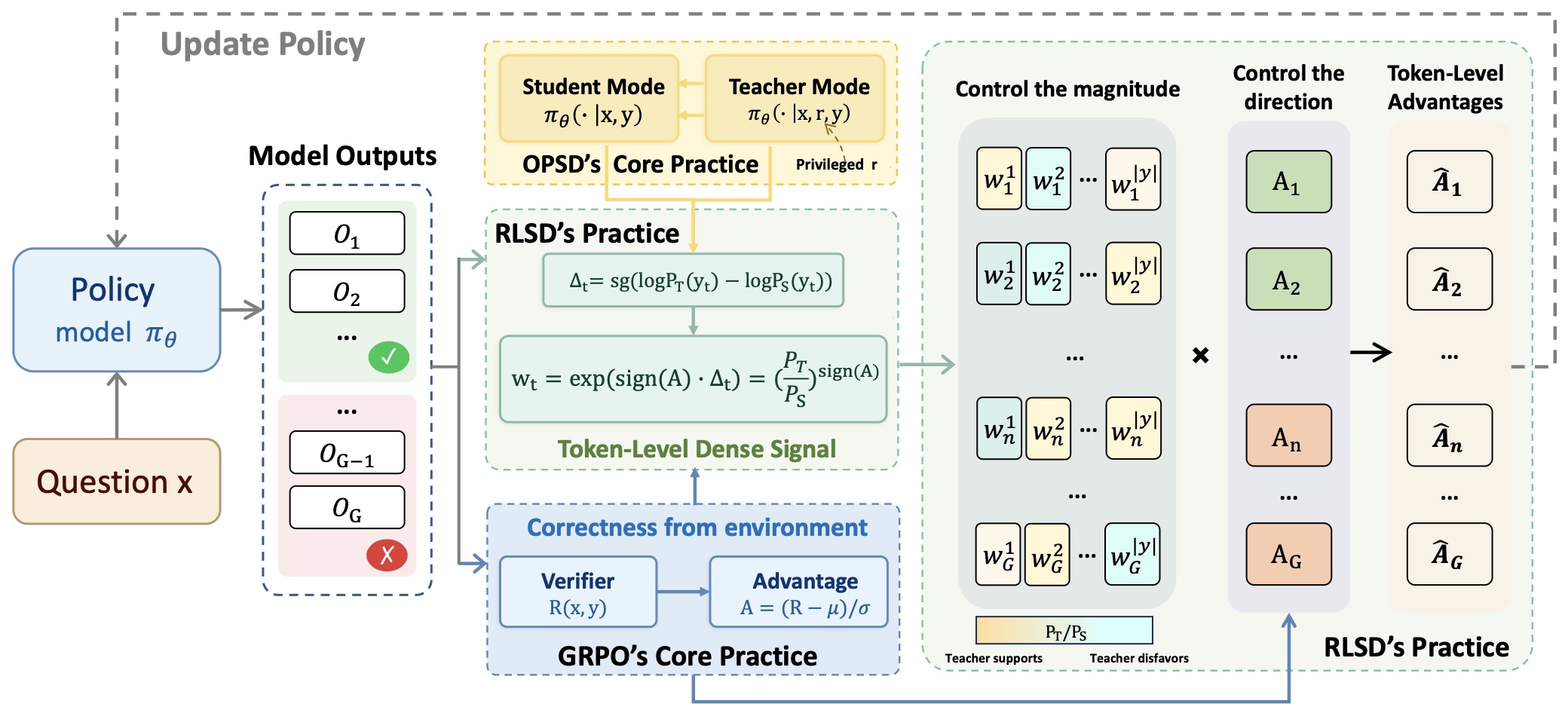

Self-Distilled RLVR

-

Self-Distilled RLVR by Yang et al. (2026) introduces RLSD, which combines reinforcement learning with verifiable rewards and privileged self-distillation, using self-distillation to modulate update magnitudes while preserving RL-derived update directions.

-

Implementation details:

-

A self-teacher receives privileged information such as the correct answer or verified reasoning trace.

-

Reinforcement learning determines which direction the policy should move based on correctness signals.

-

Self-distillation scales the magnitude of token-level updates according to how strongly the privileged teacher prefers alternative continuations.

-

This separation reduces information leakage while preserving the exploration and objective fidelity of RLVR.

-

-

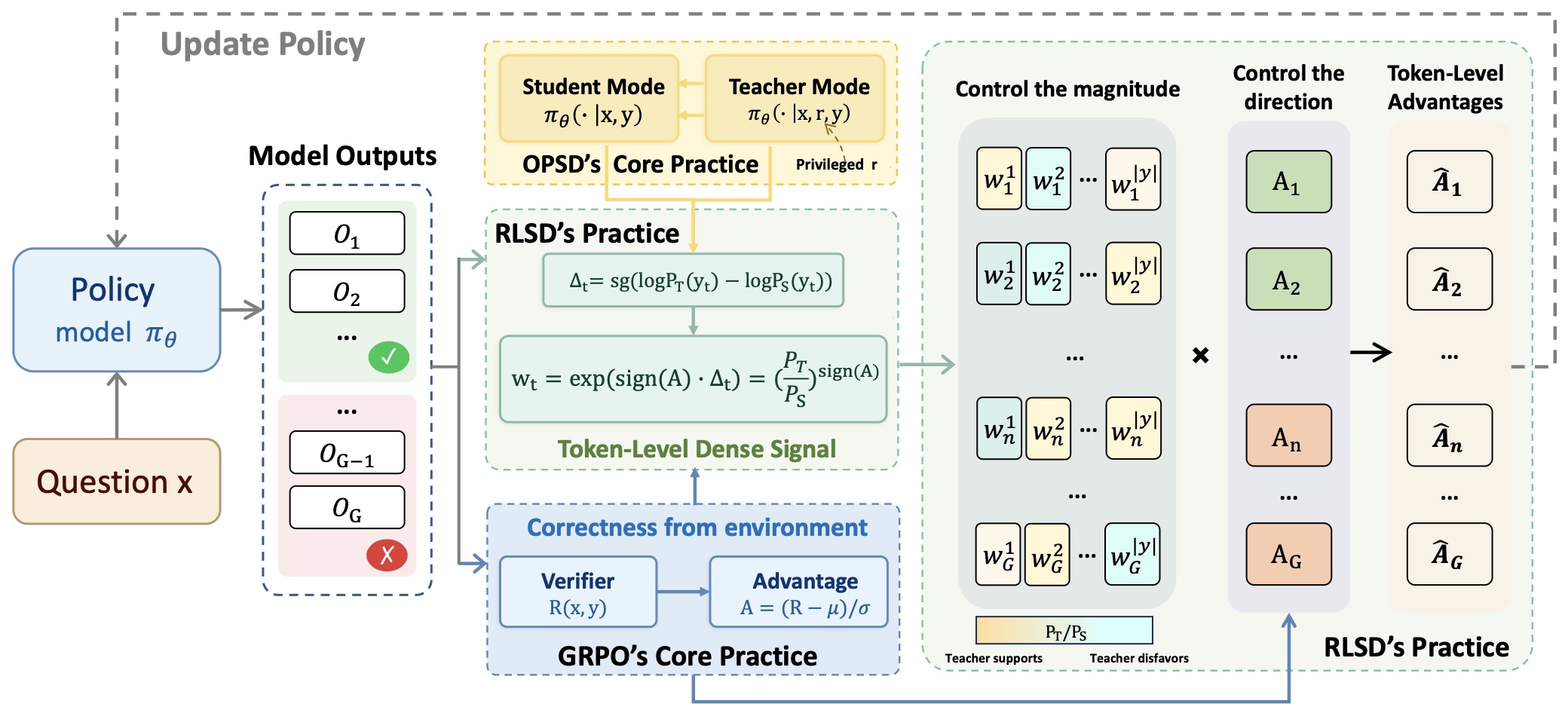

The following figure (source) shows an overview of RLSD, with a hybrid design in which RLVR provides update directions and self-distillation provides fine-grained step sizes.

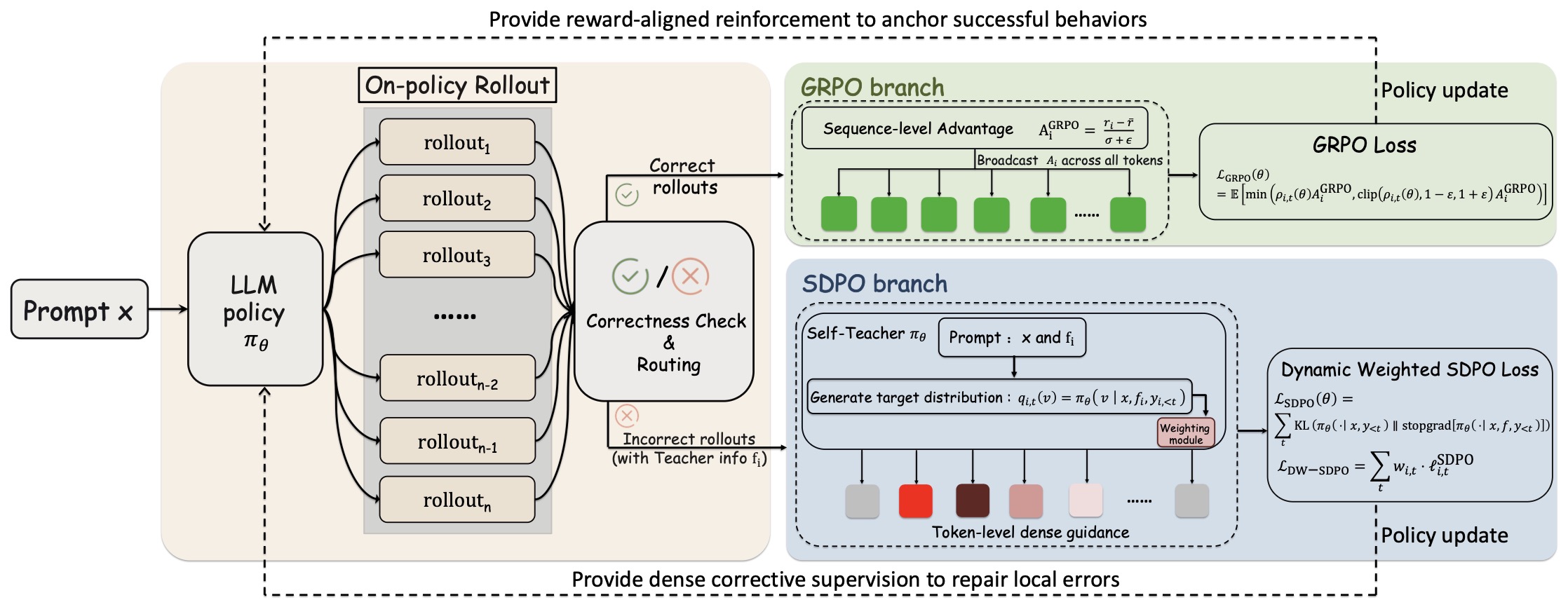

Unifying Group-Relative and Self-Distillation Policy Optimization via Sample Routing

-

Unifying Group-Relative and Self-Distillation Policy Optimization via Sample Routing by Li et al. (2026) introduces SRPO, a sample-routing framework that combines GRPO-style reinforcement learning on successful rollouts with self-distillation-based correction on failed rollouts.

-

Implementation details:

-

Correct samples are optimized using standard group-relative policy optimization.

-

Incorrect samples are replayed under a privileged teacher context and corrected using dense token-level self-distillation.

-

Routing decisions are based on verifier outcomes or reward thresholds.

-

The hybrid design preserves efficient RL updates on successful trajectories while extracting richer supervision from failures.

-

-

The following figure ([source])](https://arxiv.org/abs/2604.02288)) shows an overview of SRPO. Given a prompt \(x\), the policy \(\pi_\theta\) generates a group of on-policy rollouts. A correctness check routes each rollout to one of two branches: correct samples are sent to the GRPO branch, where group-relative advantages provide a reward-aligned policy update; incorrect samples with available teacher information are sent to the SDPO branch, where a feedback-conditioned self-teacher produces logit-level distillation targets via \(KL(P \,\Vert\, \operatorname{stopgrad}(Q))\) for dense corrective supervision.

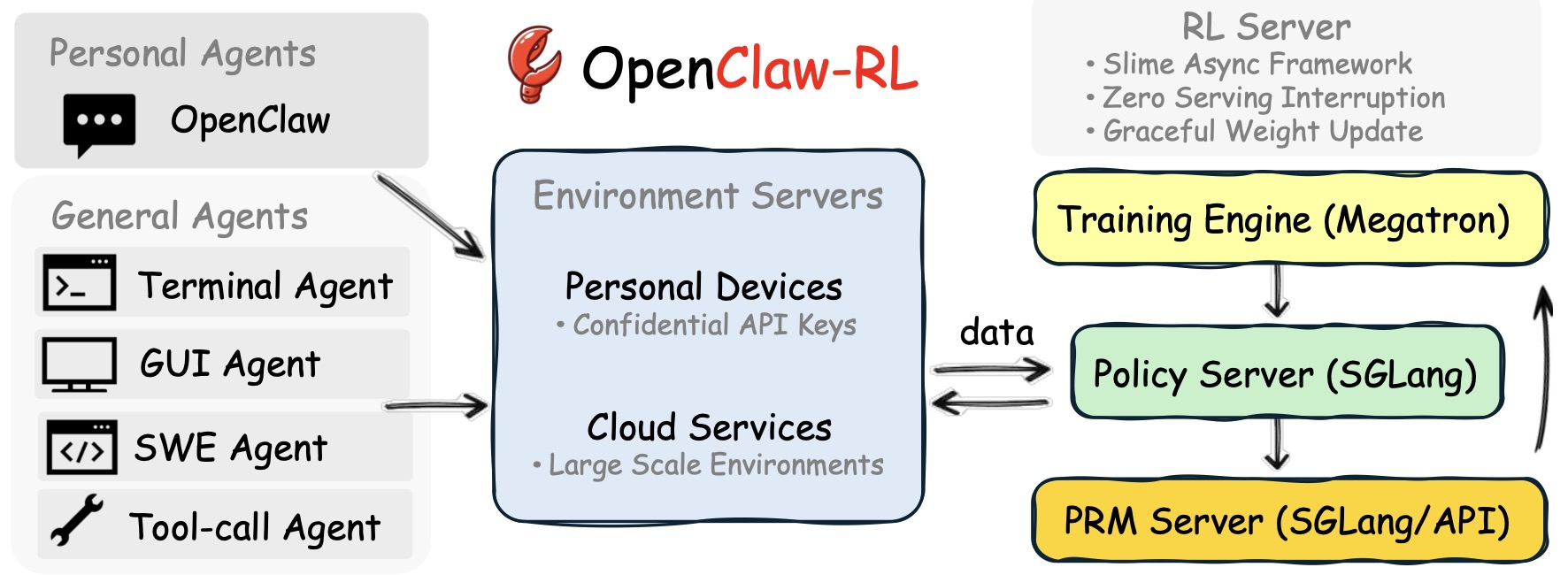

OpenClaw-RL: Train Any Agent Simply by Talking

-

OpenClaw-RL: Train Any Agent Simply by Talking by Wang et al. (2026) extends the RL–distillation connection to interactive agents, treating user messages, tool outputs, and environment transitions as hindsight supervision.

-

Implementation details:

-

The agent’s original trajectory is replayed together with subsequent user or environment feedback.

-

A hindsight-conditioned teacher evaluates how the model would have acted if it had observed the later information earlier.

-

Tool outputs, GUI changes, and terminal states are converted into dense correction signals.

-

The same framework supports conversational agents, coding agents, and embodied control systems.

-

-