Primers • Agentic Design Patterns

- Overview

- What makes an AI system an agent?

- Agentic Design Patterns

- Core Idea

- From linear prompts to execution graphs

- Functional roles

- Compositional structure

- When to use these patterns

- The unifying principle

- Prompt Chaining

- Routing

- Parallelization

- Reflection

- Tool Use

- Planning

- Prioritization

- Pattern Selection and Composition

- Multi-Agent Systems

- Single-Agent vs. Multi-Agent Systems

- Why this distinction matters

- Conceptual definitions

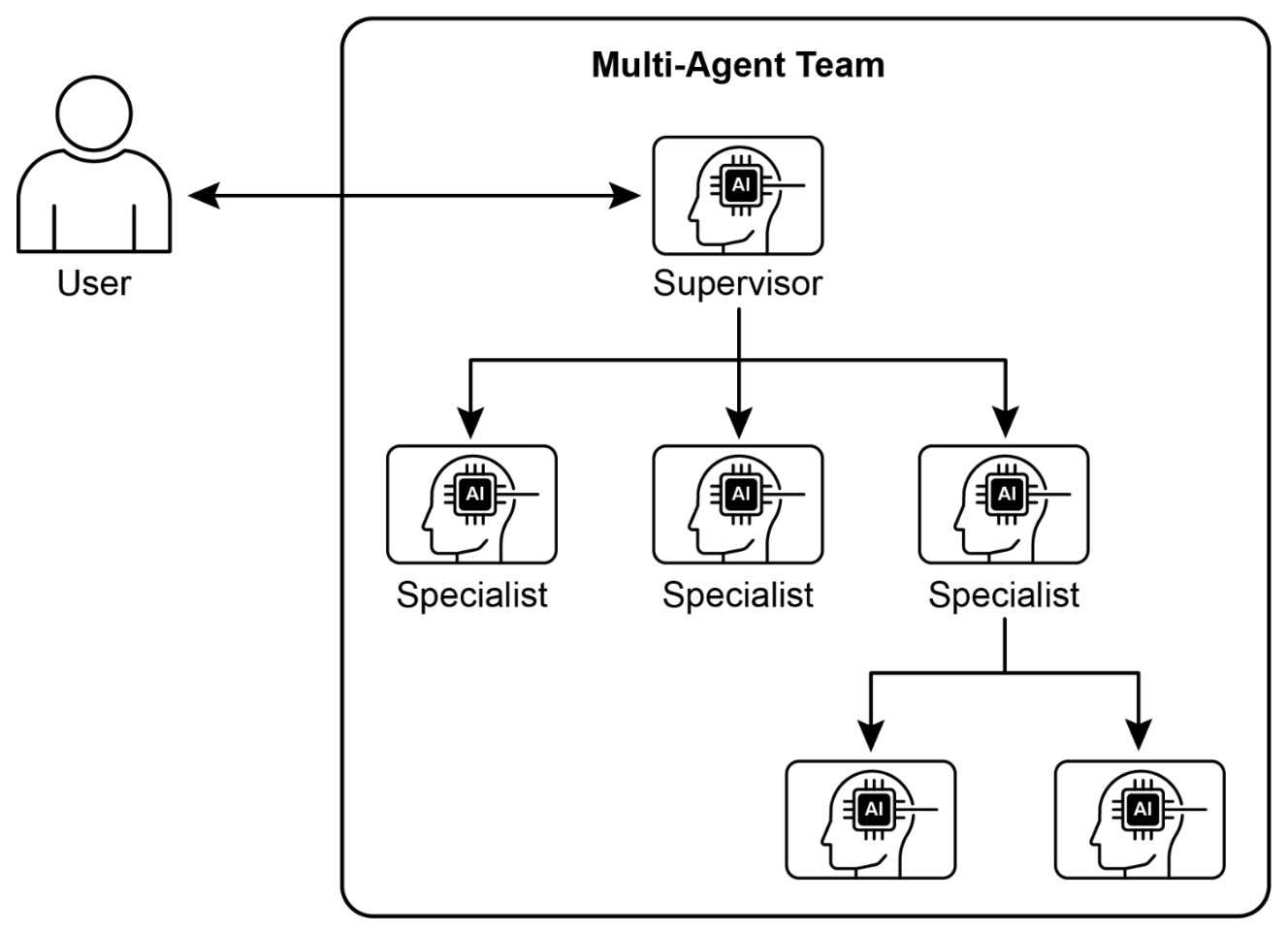

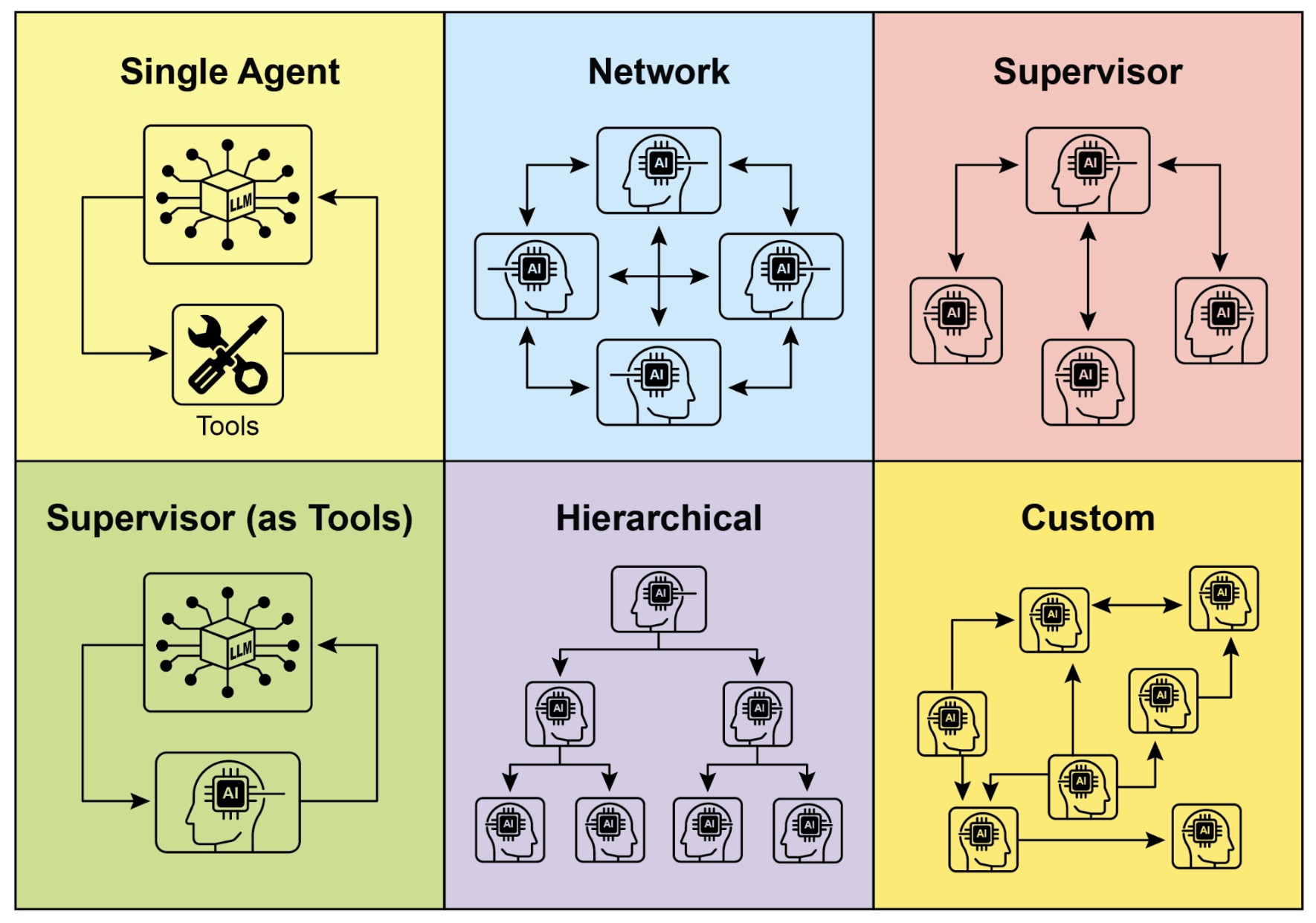

- Visual overview

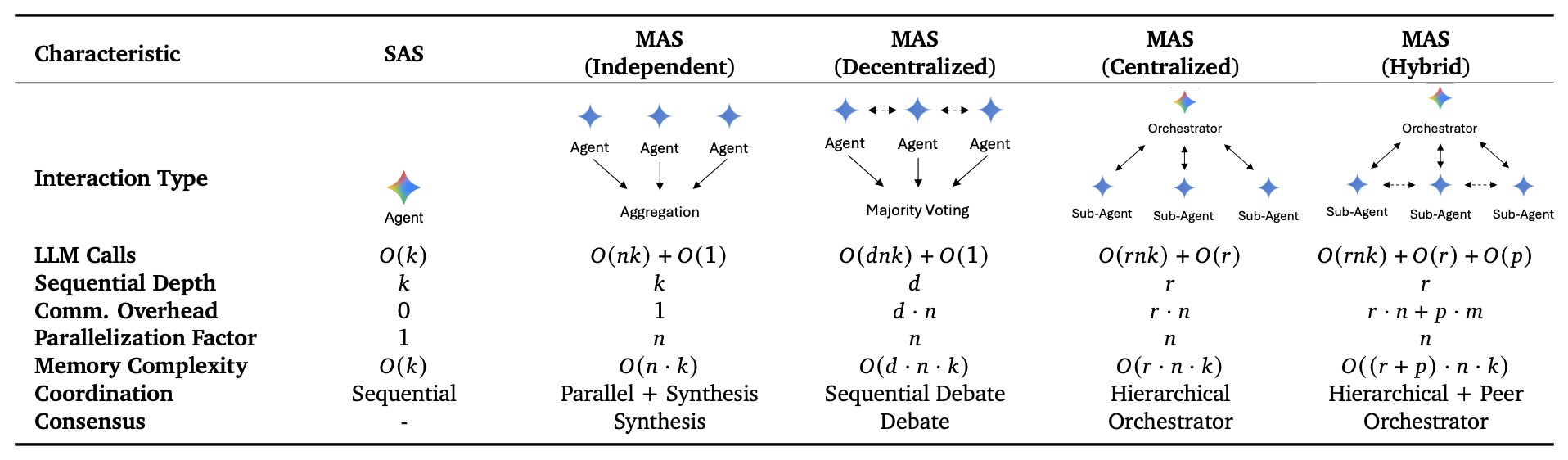

- Architectural comparison

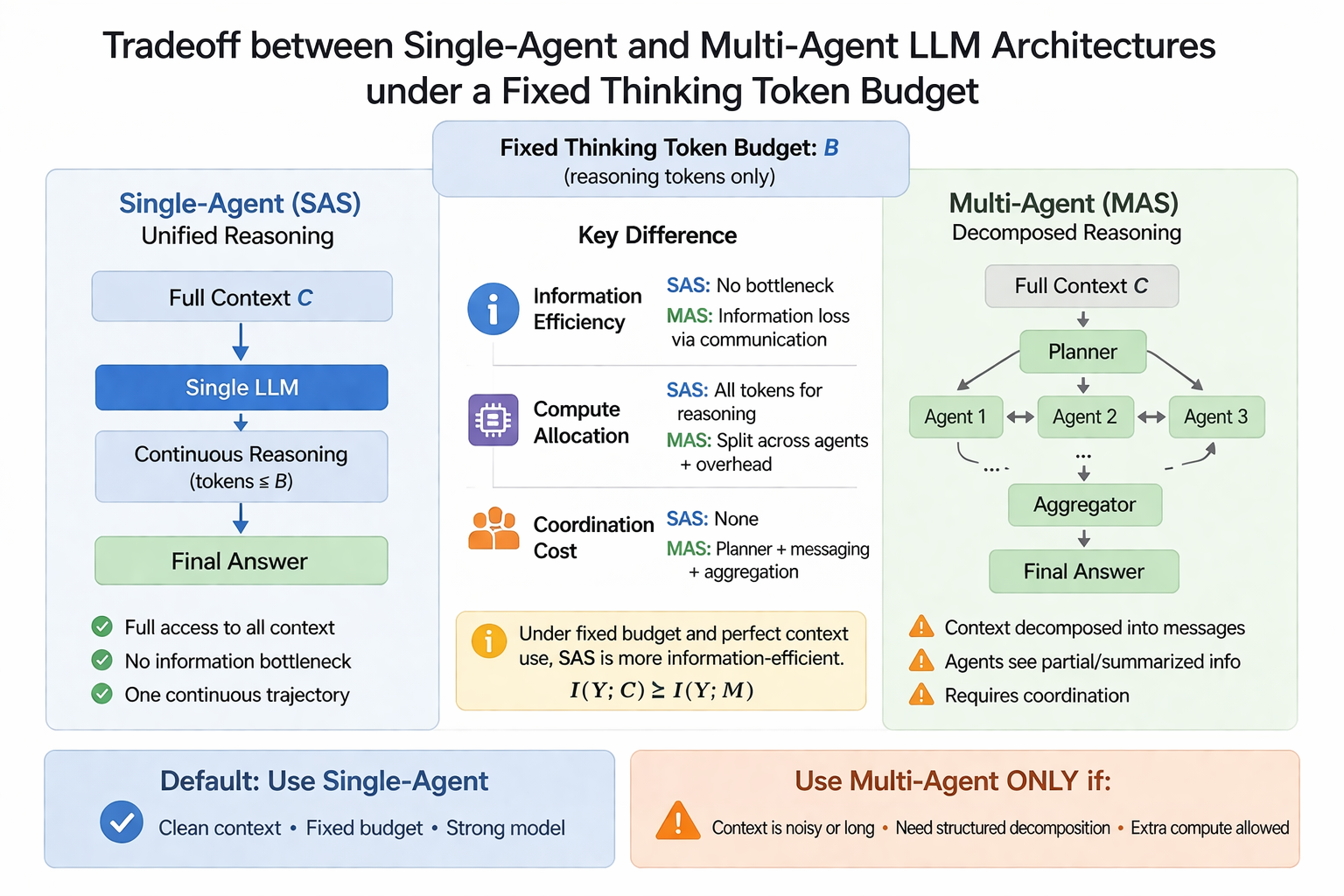

- Core tradeoffs

- When single-agent systems are usually better

- When multi-agent systems become competitive

- Architecture selection guidance

- Key takeaways

- State, Adaptation, and Control in Agentic Systems

- Core Idea

- Memory Management

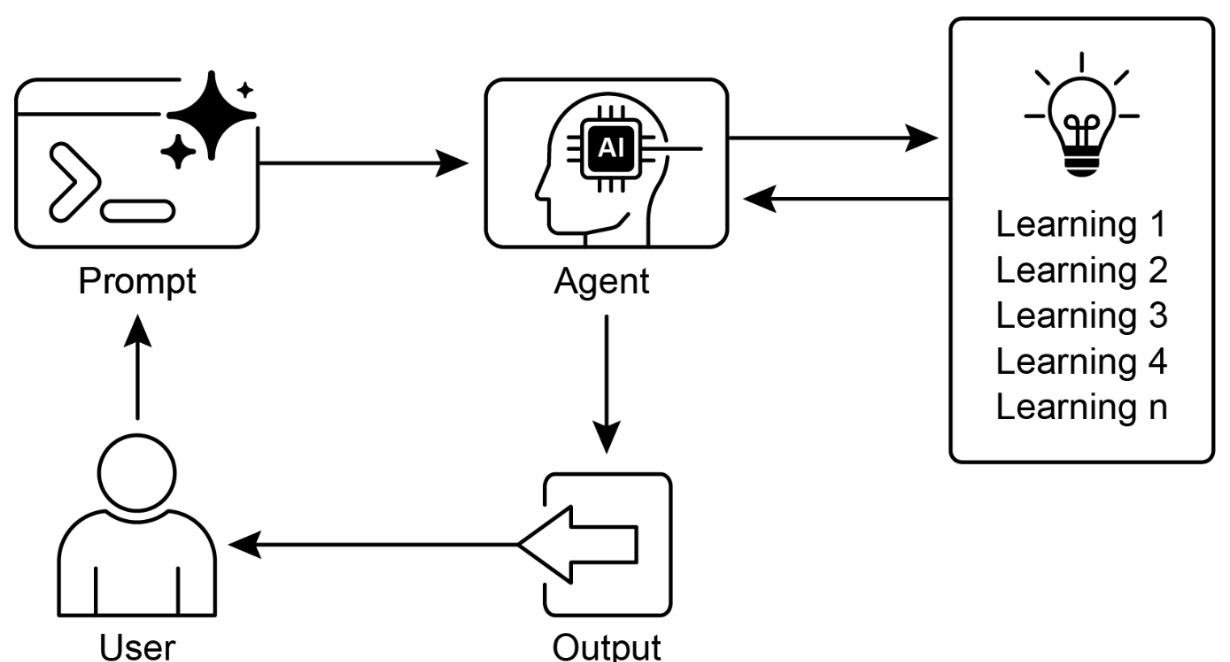

- Learning and Adaptation

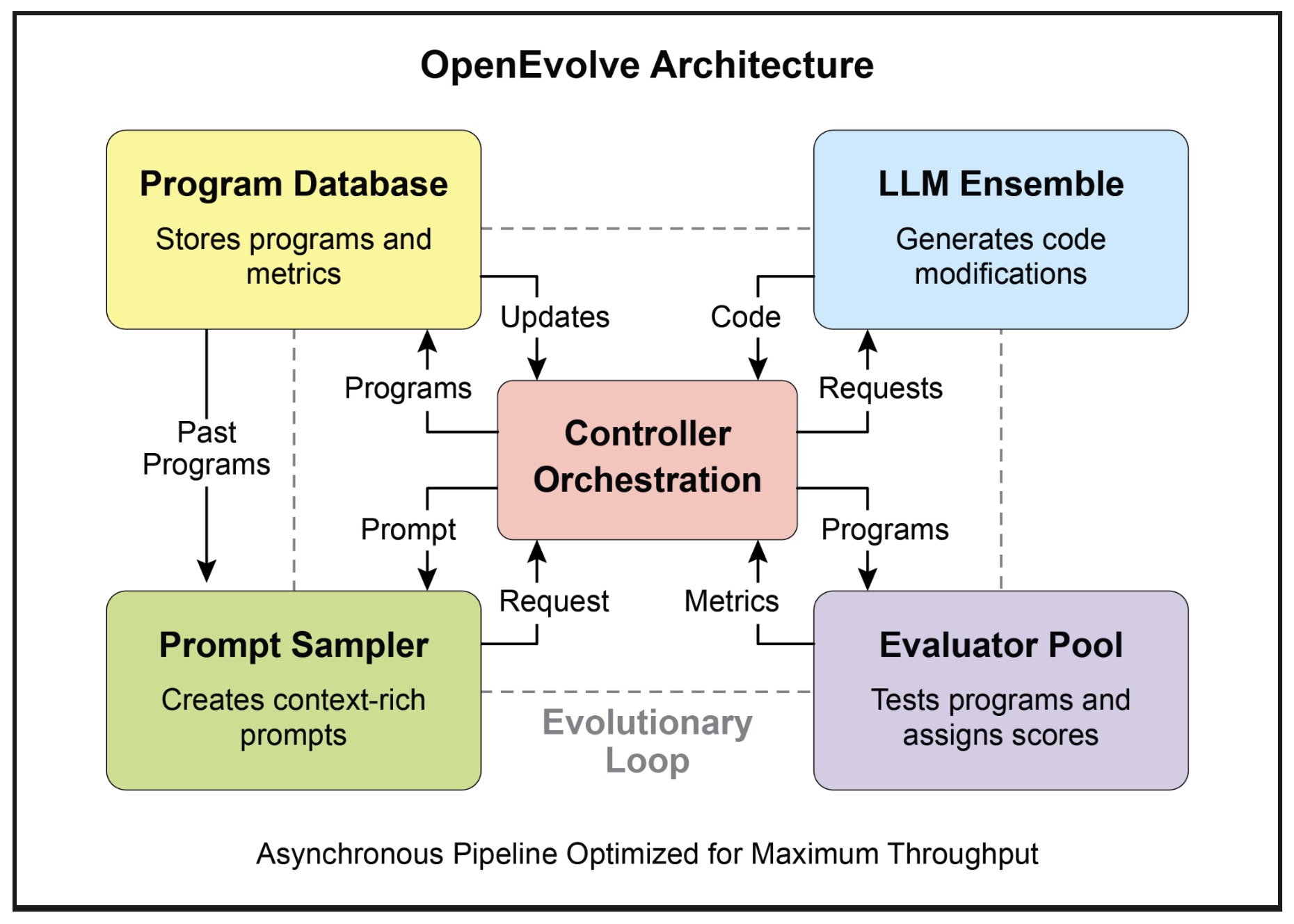

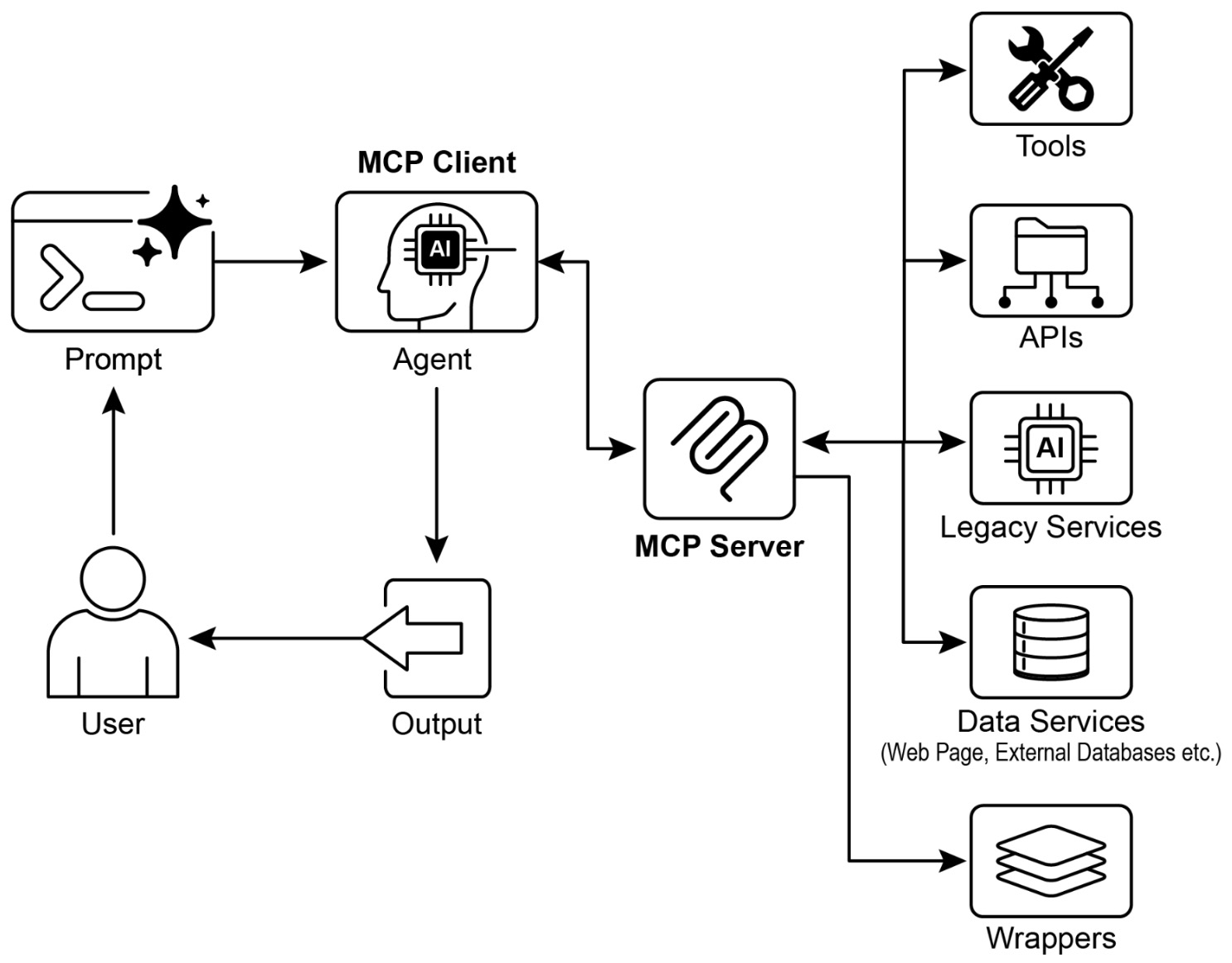

- Model Context Protocol (MCP)

- Goal Setting and Monitoring

- Exception Handling and Recovery

- Human-in-the-Loop

- Guardrails and Safety

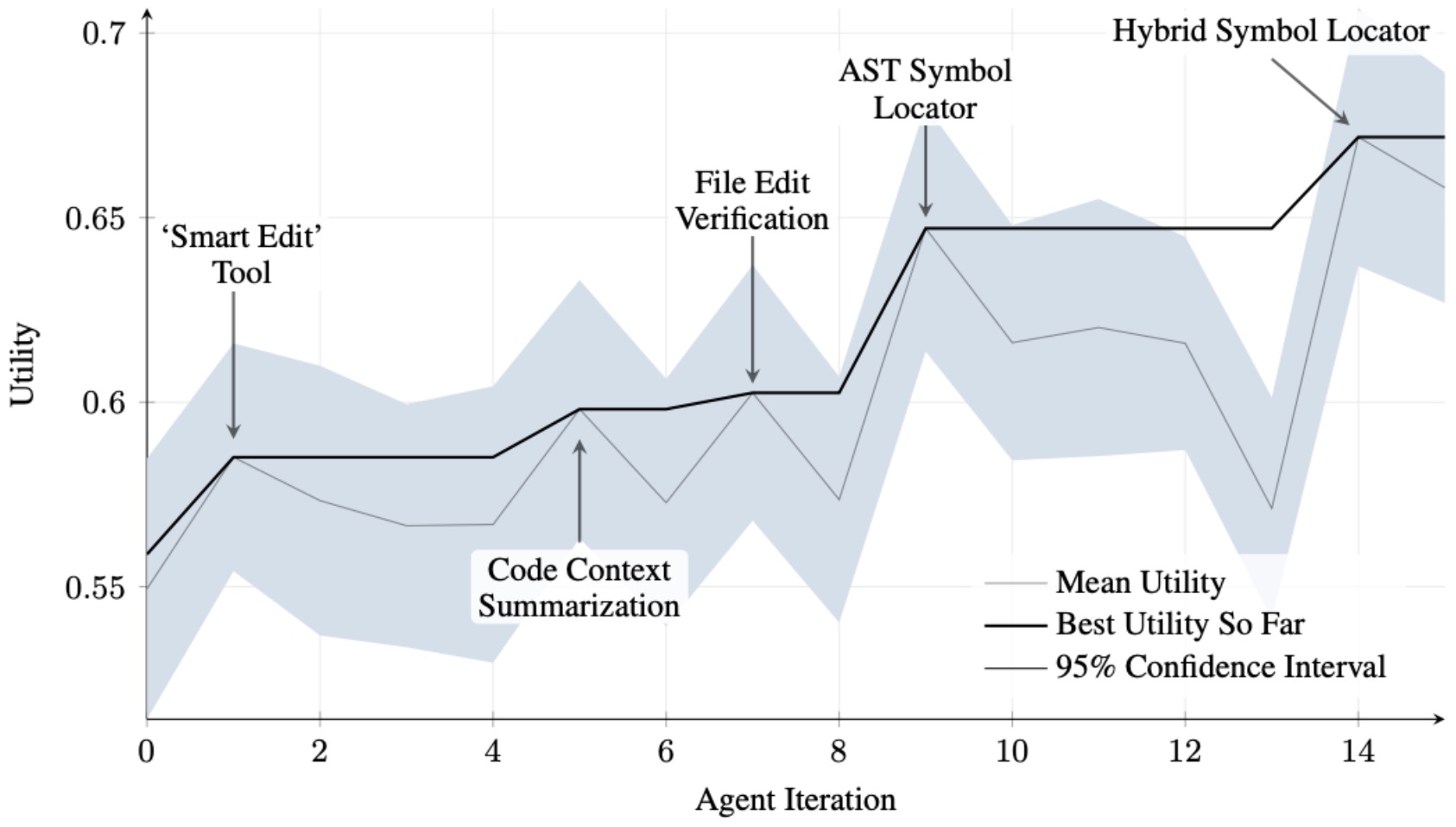

- Evaluation

- References

- Citation

Overview

-

Agentic design patterns are reusable ways to structure systems in which a language model does more than generate text. The model becomes part of a larger loop that observes context, chooses actions, uses tools, manages state, and keeps moving toward a goal. That shift, from text generation to goal-directed orchestration, is the central idea behind modern agent engineering. In this view, the model is not the whole product. It is the reasoning core inside a system that must also handle memory, tool access, control flow, communication, and failure recovery.

-

A useful mental model is to treat an agent system as an operating canvas. The canvas is the runtime environment that holds prompts, state, tools, external APIs, memory stores, and the logic that routes information from one step to the next. The important design question is therefore not only “which model should I call?” but also “how should the system be structured so the model can act reliably under uncertainty?” That is exactly where design patterns matter.

-

The reason patterns are so important is that single-shot prompting breaks down quickly as tasks become multi-step, tool-dependent, or long-running. Once a system must decompose work, retrieve facts, call APIs, maintain conversational state, coordinate specialists, or recover from partial failure, the architecture matters at least as much as the prompt. This is the same lesson the broader agent literature has converged on: performance improves when reasoning is interleaved with action, when external tools can be invoked, and when retrieved evidence augments model-only memory. ReAct by Yao et al. (2022) showed that alternating reasoning and acting improves multi-step task solving by letting the model update plans from observations. Toolformer by Schick et al. (2023) showed that models can learn when and how to call tools, which is foundational for practical agents. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks by Lewis et al. (2020) established the now-standard idea that external retrieval can make generation more factual and updatable.

-

Each pattern in this primer is paired with hands-on implementations using LangChain, demonstrating how these concepts translate into real, executable systems. This bridges the gap between theory and practice by showing how agentic behaviors such as planning, tool use, memory, and coordination can be concretely realized in production-oriented frameworks.

-

At a high level, an agentic system can be described as a policy over actions conditioned on context. If we write the agent’s state at time \(t\) as \(s_t\), its chosen action as \(a_t\), and its objective as maximizing expected cumulative utility, then the design problem is often framed as:

- This is not saying every agent in practice is trained end-to-end with reinforcement learning. Most production agents are not. Rather, it gives a clean way to think about what the system is doing: at each step it selects the next best action given the current state, available tools, and long-term objective. For the introductory patterns in this primer, no special loss function is central yet, because the focus is system structure rather than model training.

Why are agentic systems needed?

-

Modern AI systems reached a point where generating high-quality text is no longer the bottleneck. The real limitation lies in reliably solving complex, multi-step, real-world problems. A standalone large language model can produce fluent answers, but it struggles when tasks require persistence, external interaction, or adaptive decision-making. This gap is precisely why agentic systems are needed.

-

At their core, real-world problems are not single-shot queries. They are processes. They involve gathering information, making intermediate decisions, interacting with external systems, and iteratively refining outcomes. A static prompt-response model cannot sustain this kind of workflow because it lacks continuity, structured control, and the ability to act.

-

Agentic systems address this by transforming the model into part of a loop rather than a terminal endpoint. Instead of producing a single output, the system continuously updates its understanding and actions:

-

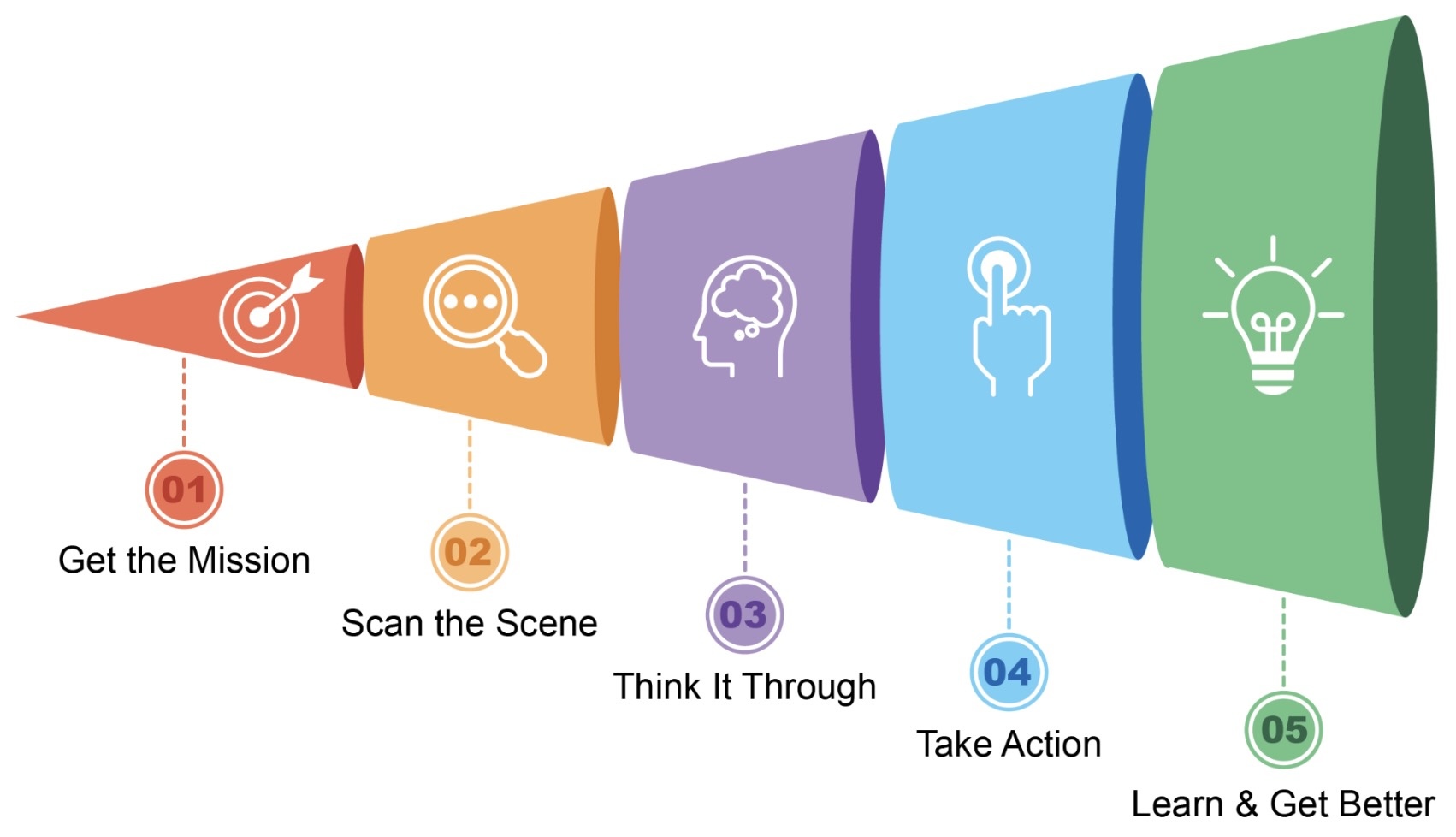

This loop directly mirrors the five-step operational cycle described in the source material, where an agent gets a mission, gathers context, plans, acts, and improves over time.

-

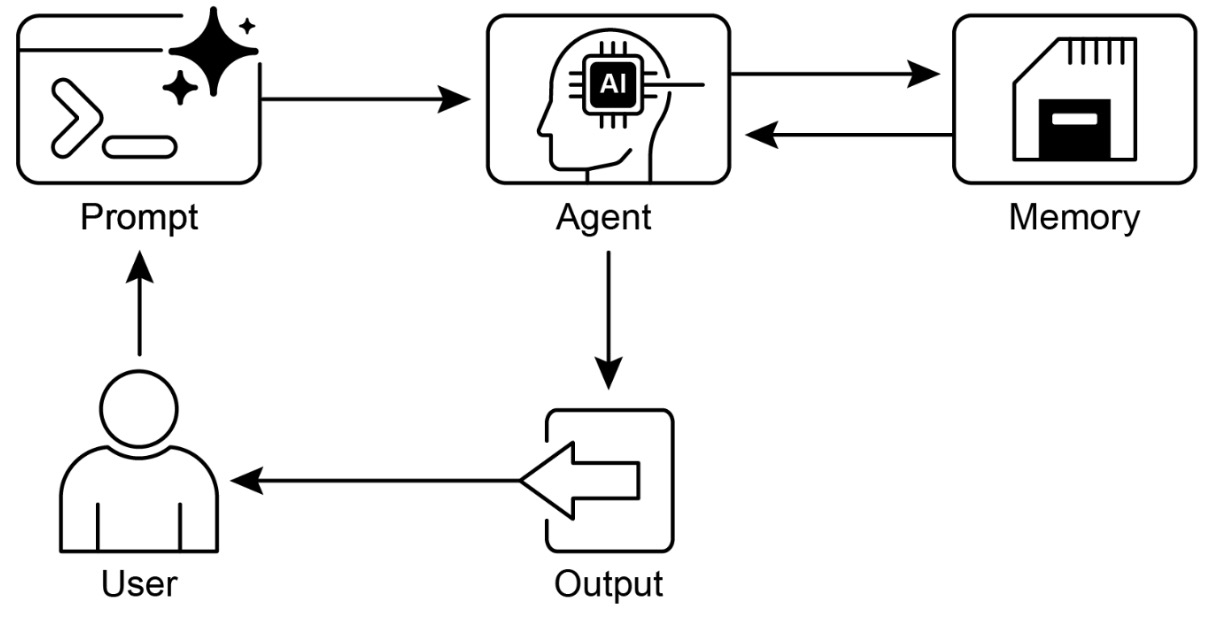

The following figure illustrates that agentic AI functions as an intelligent assistant, continuously learning through experience. It operates via a straightforward five-step loop to accomplish tasks.

The limitations of non-agentic systems

-

Traditional LLM-based applications fail in predictable ways when pushed beyond simple tasks:

- They cannot maintain state across multiple steps without manual orchestration

- They lack access to real-time or external information unless explicitly integrated

- They do not inherently plan or decompose problems

- They cannot act in the environment (e.g., call APIs, update systems)

- They cannot improve through feedback within a task

-

This leads to brittle systems that perform well in demos but degrade quickly in production scenarios.

-

Research has consistently highlighted these gaps. For example, ReAct by Yao et al. (2022) demonstrated that combining reasoning with actions significantly improves performance on multi-step tasks by allowing models to update their strategy based on observations. Similarly, Toolformer by Schick et al. (2023) showed that models become far more capable when they can decide when to use external tools. These works reinforce a key idea: intelligence in practical systems emerges not just from reasoning, but from structured interaction with the environment.

The need for goal-directed behavior

-

Agentic systems are needed because real applications are goal-driven rather than query-driven. Instead of answering “What is X?”, systems must achieve objectives like:

- Resolve a customer issue end-to-end

- Plan and execute a workflow

- Monitor and react to changing conditions

- Coordinate multiple steps across systems

-

This shift requires systems that can operate autonomously toward a goal, rather than simply responding to inputs.

-

Formally, this aligns with decision-making under uncertainty, where the system must choose actions that maximize long-term success:

- Even when not explicitly trained with reinforcement learning, agentic systems implicitly approximate this process by iteratively selecting actions that move closer to a goal.

The need for interaction with the external world

-

Another critical limitation of standalone models is that they are closed systems. They rely entirely on pretraining data and cannot:

- Access up-to-date information

- Perform real operations (e.g., database queries, transactions)

- Verify outputs against external sources

-

Agentic systems solve this by incorporating tool use and retrieval. This is why approaches like Retrieval-Augmented Generation by Lewis et al. (2020) are foundational. They allow systems to ground their outputs in real data, reducing hallucinations and enabling dynamic knowledge access.

-

In practice, this turns the model into a coordinator rather than a knowledge container.

The need for adaptability and feedback

-

Real environments are dynamic. Requirements change, inputs are noisy, and intermediate steps often fail. Non-agentic systems lack mechanisms to adapt mid-execution.

-

Agentic systems introduce:

- Feedback loops that allow correction

- Reflection mechanisms that improve outputs

- Memory that accumulates knowledge across steps

-

This is essential for robustness. Without these capabilities, systems cannot recover from errors or improve performance within a task.

The need for scalable complexity

-

As tasks grow in complexity, a single monolithic reasoning step becomes inefficient and unreliable. Breaking problems into smaller steps, coordinating multiple components, and distributing responsibilities becomes necessary.

-

Agentic systems enable this by:

- Decomposing tasks into manageable units

- Coordinating multiple specialized components

- Supporting parallel and sequential execution

-

This naturally leads to more advanced architectures such as multi-agent systems, where different agents handle distinct roles and collaborate toward a shared goal.

Why patterns matter

-

Patterns matter because agent systems fail in recurring ways. They lose context, over-call tools, forget intermediate results, mis-handle branching logic, or produce brittle behavior when the environment changes. Reusable patterns help by decomposing these recurring problems into standard solutions: prompt chaining for staged reasoning, routing for specialization, parallelization for throughput, reflection for self-critique, tool use for external action, planning for long-horizon tasks, memory for continuity, guardrails for safety, and evaluation for observability.

-

This is also why frameworks matter. Frameworks are not the intelligence. They are the scaffolding that makes intelligence operational. LangChain overview - Docs by LangChain positions LangChain as an integration and agent framework, while LangGraph overview - Docs by LangChain emphasizes stateful, long-running workflows. That division is important: LangChain is convenient for composition, while LangGraph becomes especially useful once your agent needs explicit state transitions, branching, retries, or human checkpoints.

The architectural shift

-

The most important conceptual shift is that the model is no longer the application boundary. In earlier LLM applications, the prompt itself effectively defined the system. In agentic systems, the prompt becomes just one component within a broader orchestration layer that manages state, tools, and control flow.

-

Rather than relying on a single forward pass of reasoning, agentic systems operate as structured, iterative processes. The system continuously evaluates its current context, selects an action, executes it, and updates its internal state before proceeding. This introduces continuity and adaptability that static prompt-based systems fundamentally lack.

-

This shift enables several critical capabilities:

- Stateful execution: Intermediate outputs, decisions, and context are preserved across steps instead of being recomputed from scratch

- Adaptive decision-making: The system can revise its approach dynamically based on new observations or tool outputs

- Composability: Complex tasks can be decomposed into smaller, modular units that can be independently improved and reused

- Resilience: Failures are no longer terminal; the system can retry, branch, or escalate when needed

-

These capabilities align closely with how agentic systems are described in the source material, where an agent progresses through cycles of understanding, planning, acting, and refining its behavior over time.

-

From a systems perspective, this means that intelligence is no longer a single computation but an emergent property of coordinated interactions between components. The language model provides reasoning, but the surrounding system provides structure, memory, and execution.

-

This architectural framing also explains why many agentic patterns exist. Each pattern addresses a specific challenge introduced by this shift. For example:

- Prompt chaining structures multi-step reasoning

- Routing enables specialization across tasks

- Tool use connects reasoning to real-world actions

- Reflection introduces self-correction

- Planning supports long-horizon objectives

-

Instead of embedding all logic inside a single prompt, these patterns distribute responsibility across a controlled workflow. The result is a system that is easier to debug, extend, and scale.

-

The key takeaway is that once you move from single-step generation to iterative, goal-driven execution, architecture becomes the dominant factor in system performance. The model is still essential, but it is no longer sufficient on its own.

Practical implications for builders

-

For a practitioner, the immediate implication is that reliability comes more from architecture than from prompt cleverness alone. A strong system usually does four things well:

-

It controls context. The model should only see the information needed for the current decision. Too little context causes blind reasoning, while too much causes distraction and degraded instruction following.

-

It makes action explicit. A model should not merely suggest what to do when the system can safely do it through tools.

-

It stores state outside the model. Memory, checkpoints, and interaction history should live in structured state rather than being entrusted entirely to the context window.

-

It treats failures as expected events. Agents need retries, fallbacks, validation, and escalation paths.

-

-

Those principles are not isolated tricks. They are the connective tissue across the patterns that follow.

A LangChain sketch

- Even the simplest LangChain example already hints at the architectural idea. A plain chain is not yet a full agent, but it shows how you stop thinking in one giant prompt and begin thinking in composable steps.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

prompt = ChatPromptTemplate.from_messages([

("system", "You are an assistant that turns vague goals into crisp task statements."),

("human", "Goal: {goal}")

])

goal_to_task = prompt | llm | StrOutputParser()

result = goal_to_task.invoke({

"goal": "Help me design an AI workflow that can answer support questions reliably."

})

print(result)

- This is only a starting point, but it captures the seed of the larger idea: the system translates a user goal into a machine-usable intermediate representation, which can later be routed into retrieval, planning, tool use, or evaluation. In other words, even the simplest useful agent begins by making hidden structure explicit.

What makes an AI system an agent?

-

An AI system becomes an agent when it transitions from passive response generation to active, goal-directed behavior. The defining shift is from generating outputs to driving outcomes. This happens when a system is embedded in a loop that enables it to perceive, reason, act, and adapt over time in pursuit of a goal.

-

At its simplest, an agent is a system that maps observations to actions in pursuit of a goal. However, modern agentic systems extend this classical definition by incorporating reasoning, tool use, memory, and iterative feedback loops. The result is a system that does not merely answer questions, but actively works toward outcomes.

The core agent loop

-

A practical way to understand what makes a system agentic is through its operational loop. An agent continuously cycles through a structured process:

- It receives a goal

- It gathers relevant context

- It reasons about possible actions

- It executes actions

- It observes outcomes and adapts

-

This can be formalized as a sequential decision process:

\[s_{t+1} = f(s_t, a_t, o_t), \quad a_t \sim \pi(a \mid s_t)\]- where \(s_t\) represents the system state, \(a_t\) the chosen action, and \(o_t\) the observation from the environment.

-

This loop is the minimal structure required for agency. Without it, a system cannot adapt, improve, or operate beyond a single interaction.

From models to agents

-

A large language model on its own does not qualify as an agent. It functions as a reasoning engine, capable of transforming input text into output text based on learned patterns. However, it lacks:

- Persistent state across interactions

- Direct access to external systems

- The ability to take real actions

- Feedback-driven adaptation within a task

-

This corresponds to what can be considered a baseline configuration, where intelligence is present but not operationalized.

-

An agent emerges when this reasoning capability is embedded within a system that provides:

- State management, allowing continuity across steps

- Tool interfaces, enabling interaction with external systems

- Control flow, determining how decisions unfold over time

- Feedback integration, enabling adaptation based on outcomes

-

This transformation aligns with the progression described in the source material, where systems evolve from isolated reasoning engines into connected, action-capable entities.

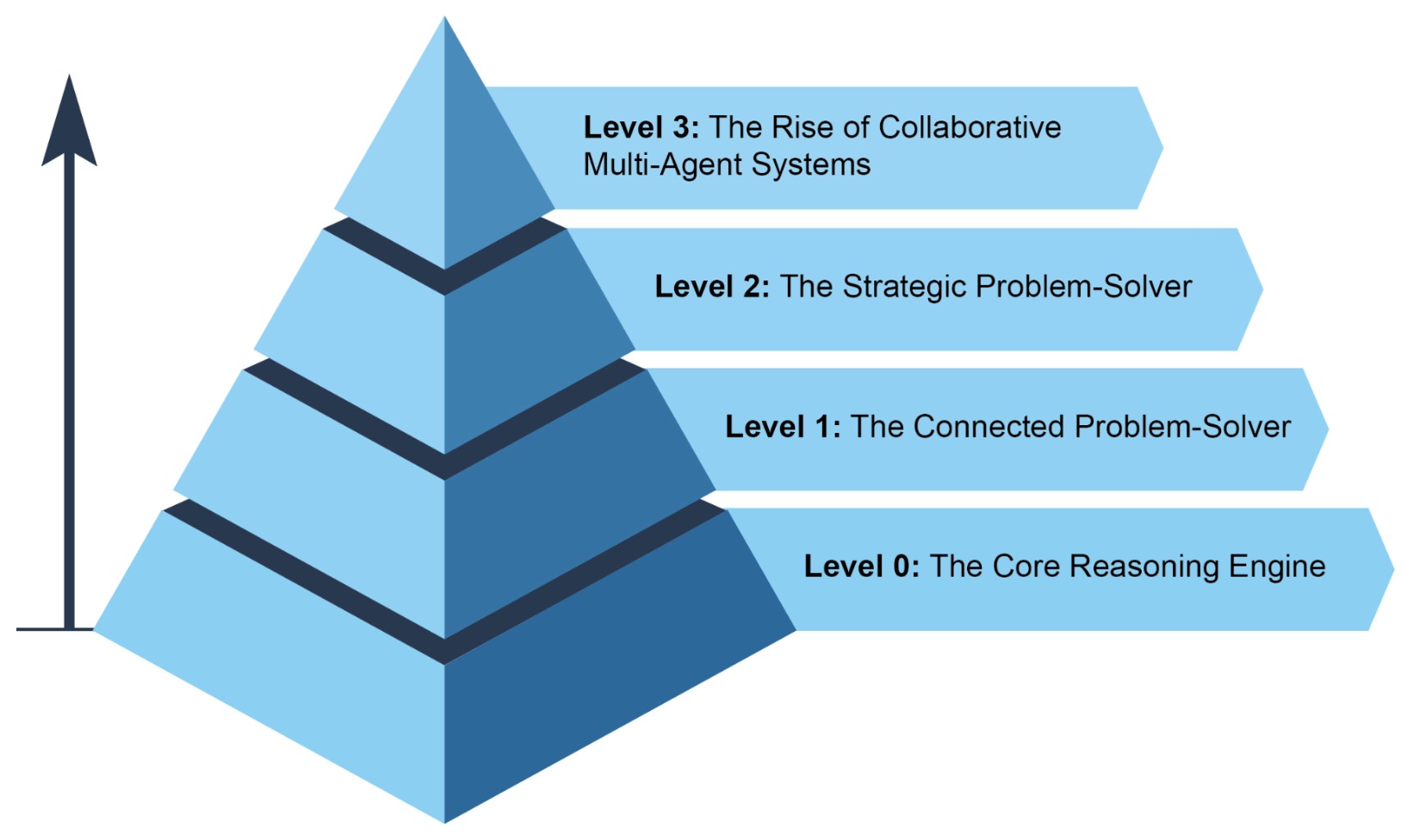

Levels of agent capability

-

Agentic systems can be understood along a spectrum of increasing capability and autonomy.

-

Level 0: The reasoning core:

- At this level, the system consists solely of a language model. It can reason about problems but cannot interact with the environment or access external information beyond its training data.

-

Level 1: The connected problem-solver:

-

Here, the system gains access to tools and external data sources. It can retrieve information, call APIs, and execute multi-step actions, enabling it to solve real-world problems that require up-to-date or external knowledge.

-

This is closely related to the paradigm introduced in Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks by Lewis et al. (2020), where external retrieval enhances model capabilities by grounding outputs in factual data.

-

-

Level 2: The strategic problem-solver:

-

At this level, the agent can plan, manage context strategically, and handle complex, multi-step workflows. A key capability here is context engineering, which involves selecting and structuring the most relevant information for each step to maximize performance.

-

This is conceptually aligned with structured reasoning approaches such as Chain-of-Thought Prompting by Wei et al. (2022), where intermediate reasoning steps improve task performance by decomposing problems.

-

-

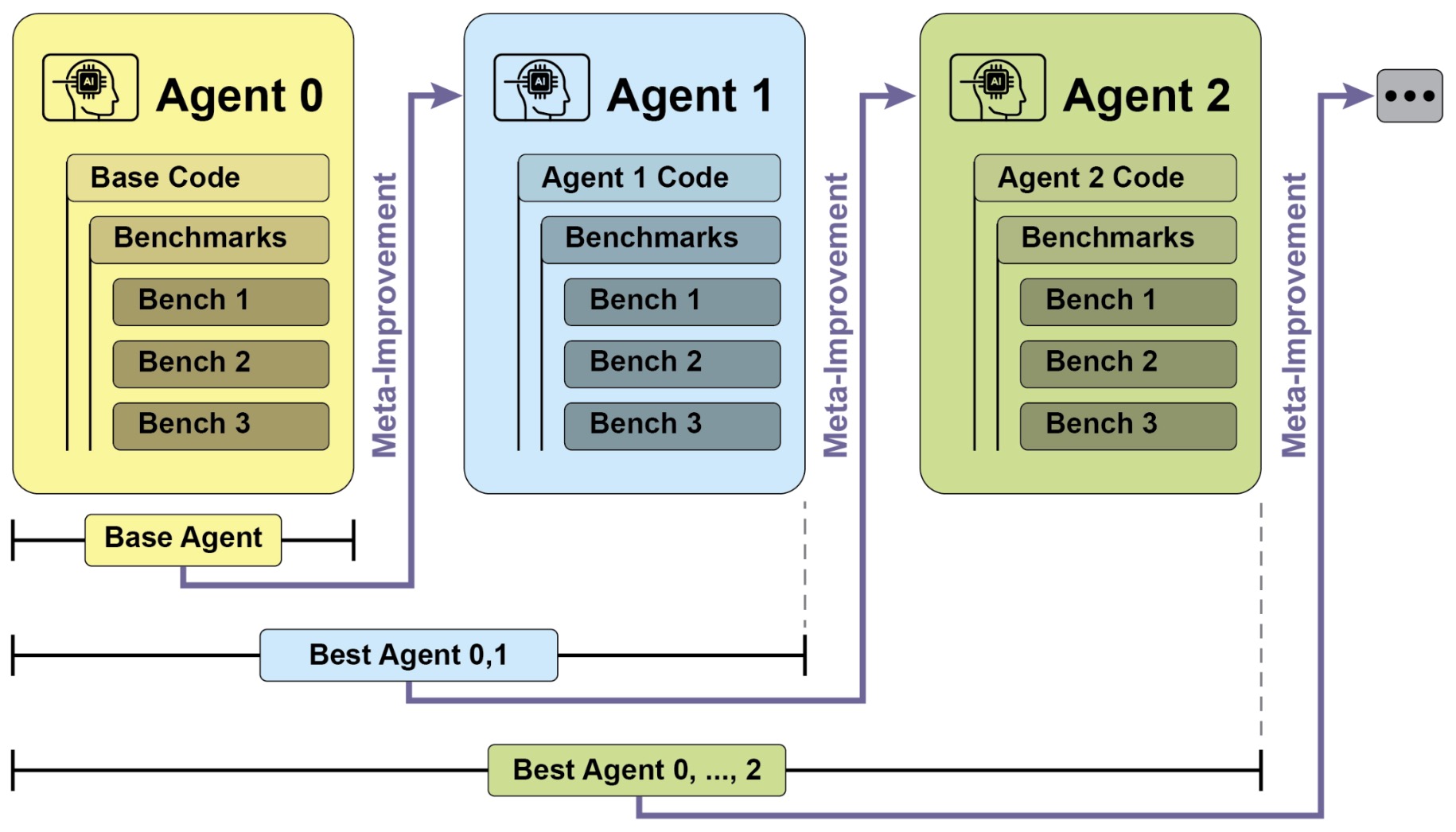

Level 3: Collaborative multi-agent systems:

-

The most advanced level involves multiple agents working together, each specializing in different roles. Instead of a single monolithic system, intelligence emerges from coordination among agents.

-

The following figure shows various instances demonstrating the spectrum of agent complexity.

-

-

This mirrors organizational structures in human systems, where specialized roles collaborate to achieve complex objectives. It also aligns with emerging research in distributed AI systems, where coordination and communication become central challenges.

-

Key properties of agentic systems

-

Several properties distinguish agents from traditional systems:

-

Autonomy: The ability to operate without constant human intervention

-

Proactiveness: The ability to initiate actions toward goals rather than waiting for instructions

-

Reactivity: The ability to respond dynamically to changes in the environment

-

Tool use: The ability to extend capabilities through interaction with external systems

-

Memory: The ability to retain and utilize information across time

-

Communication: The ability to interact with users or other agents

-

Prioritization: The ability to evaluate and rank tasks or actions based on criteria such as urgency, importance, dependencies, and resource constraints

-

Pattern selection and composition: The ability to combine multiple design patterns into a coherent system that aligns with task requirements and operational constraints

-

-

These properties are not independent. They reinforce each other to create systems that can operate effectively in complex, dynamic environments.

The role of reasoning and action

-

A defining feature of agentic systems is the tight coupling between reasoning and action. Instead of generating a complete solution upfront, the system iteratively refines its approach based on feedback.

-

This paradigm is exemplified by ReAct by Yao et al. (2022), which interleaves reasoning steps with actions, allowing the system to update its understanding as new information becomes available.

-

The key insight is that reasoning alone is insufficient. Effective problem-solving requires interaction with the environment, and that interaction must inform subsequent reasoning.

A minimal LangChain agent example

- The transition from a simple chain to an agent becomes clear when tools and decision-making are introduced.

from langchain.agents import initialize_agent, Tool

from langchain_openai import ChatOpenAI

# Define a simple tool

def search_tool(query: str) -> str:

return f"Search results for: {query}"

tools = [

Tool(

name="Search",

func=search_tool,

description="Useful for answering questions about current events"

)

]

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

agent = initialize_agent(

tools=tools,

llm=llm,

agent="zero-shot-react-description",

verbose=True

)

result = agent.run("What are recent developments in AI agents?")

print(result)

-

This example illustrates the essential ingredients of an agent:

- A reasoning model

- A set of tools

- A decision policy that determines when to use them

-

Even in this minimal form, the system is no longer just generating text. It is selecting actions based on context, which is the defining step toward agency.

The emerging paradigm

-

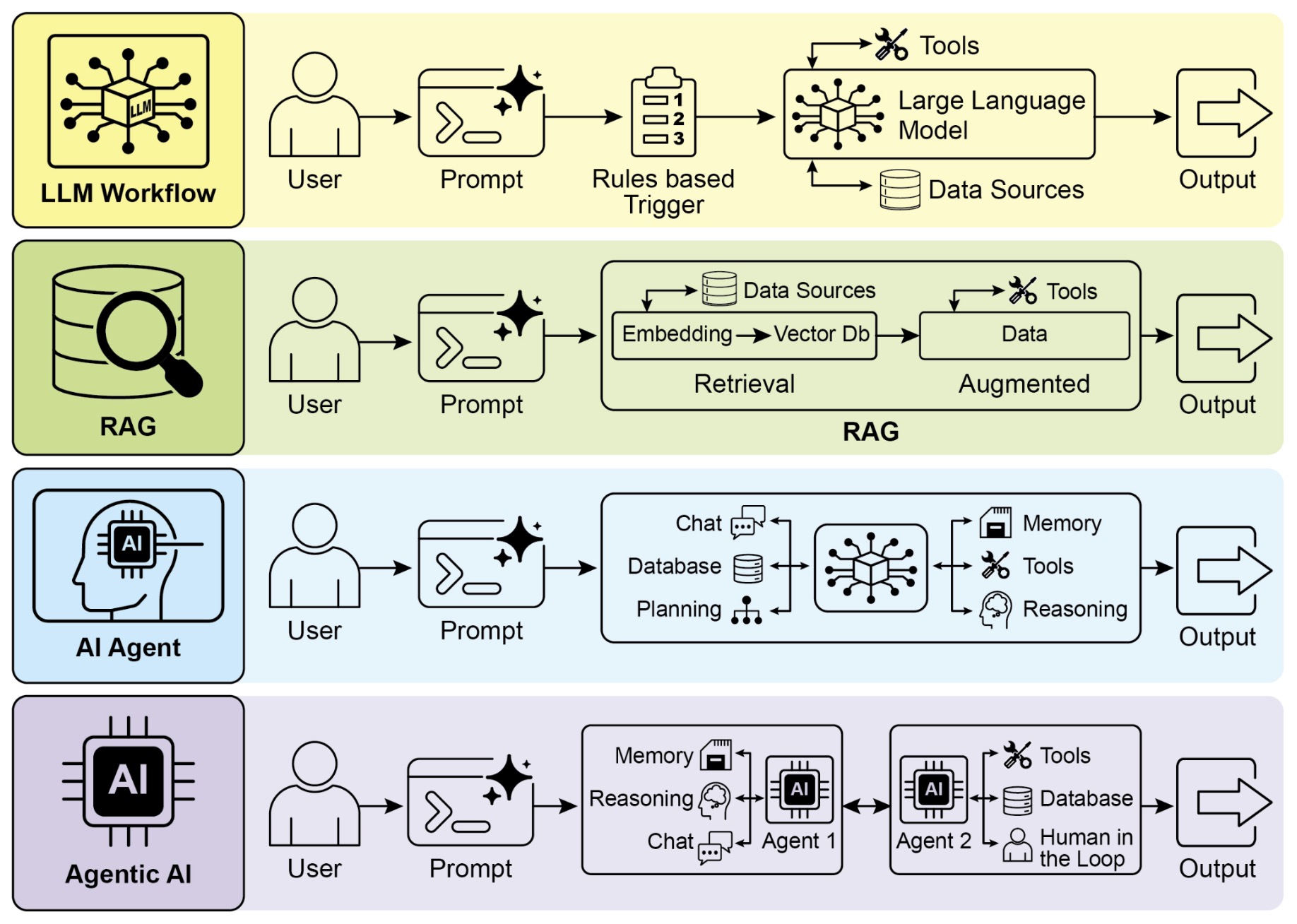

The progression from LLM workflows to fully agentic systems represents a broader shift in AI:

- From static pipelines to dynamic systems

- From isolated models to integrated environments

- From answering questions to achieving goals

-

The following figure shows transitioning from LLMs to RAG, then to Agentic RAG, and finally to Agentic AI.

- This evolution reflects a growing recognition that intelligence is not just about knowledge or reasoning in isolation. It is about the ability to operate effectively in a world of uncertainty, constraints, and changing information.

Agentic Design Patterns

Core Idea

-

The agentic design patterns covered in this section form the operational backbone of agentic systems. Together, they define how an agent reasons, decides, acts, and improves while interacting with its environment. Rather than functioning as isolated techniques, these patterns compose into execution graphs that transform static model calls into dynamic, goal-directed systems.

-

At a high level, these patterns collectively implement a structured decision process:

- Each pattern contributes a specific capability within this flow, enabling agents to move from simple response generation to complex, adaptive behavior. Importantly, prioritization and pattern selection act as meta-level controls over this process, determining not only what actions are taken, but which patterns are invoked and in what order.

From linear prompts to execution graphs

-

Traditional LLM systems operate as linear pipelines: a prompt is constructed, a response is generated, and the process ends. In contrast, agentic systems organize computation as directed graphs of operations, where intermediate outputs are routed, transformed, validated, and reused.

-

The patterns in this section collectively enable this shift:

- Prompt chaining introduces structured decomposition

- Routing introduces conditional branching

- Parallelization introduces concurrent execution

- Reflection introduces iterative refinement

- Tool use introduces external interaction

- Planning introduces long-horizon structure

- Multi-agent systems introduce distributed specialization

- Prioritization introduces decision ordering under constraints

- Pattern selection and composition introduces system-level orchestration

-

Together, these transform a single inference into a coordinated, adaptive process.

Functional roles

-

Each pattern plays a distinct role in the execution lifecycle of an agent, as follows:

-

Prompt chaining as decomposition: Prompt chaining breaks complex tasks into smaller, sequential steps. It reduces cognitive load on the model and enables intermediate validation. This is the foundation upon which most other patterns build.

-

Routing as decision-making: Routing determines which path the system should take. It selects tools, models, or workflows based on input characteristics, enabling specialization and efficiency.

-

Parallelization as scaling mechanism: Parallelization allows independent tasks to be executed simultaneously. It improves latency and enables exploration of multiple reasoning paths or data sources.

-

Reflection as quality control: Reflection introduces feedback loops that allow the system to critique and refine its outputs. It improves reliability and correctness through iterative improvement.

-

Tool use as action interface: Tool use connects the agent to the external world. It enables retrieval, computation, and real-world actions, extending the system beyond its internal knowledge.

-

Planning as strategic coordination: Planning organizes actions over multiple steps. It enables the system to reason about dependencies, sequence tasks, and pursue long-term goals.

-

Multi-agent systems as distributed intelligence: Multi-agent systems distribute responsibilities across specialized agents. They enable modularity, scalability, and collaboration in complex workflows.

-

Prioritization as resource-aware decision control: Prioritization determines which tasks, goals, or actions should be executed first when multiple options compete. It incorporates criteria such as urgency, importance, dependencies, and resource constraints, ensuring that the agent focuses on high-impact actions under limited time or compute.

-

Pattern selection and composition as system orchestration: Pattern selection determines which combination of patterns should be applied for a given task, while composition defines how they are connected. This operates at a meta-level, shaping the overall execution graph rather than individual steps.

-

Compositional structure

- These patterns are rarely used in isolation. A typical execution flow may look like:

-

This structure highlights how patterns compose:

- Routing selects the workflow

- Planning defines the structure

- Prioritization orders tasks and allocates resources

- Parallelization executes independent steps

- Tool use provides capabilities

- Reflection ensures quality

-

At a higher level, pattern selection and composition determines whether this entire pipeline is even the right structure, or whether an alternative configuration (e.g., multi-agent orchestration or iterative loops) should be used instead.

-

In more advanced systems, multi-agent coordination may wrap around this entire process, with different agents handling planning, execution, validation, and prioritization.

When to use these patterns

-

These patterns become necessary as task complexity increases:

- Use prompt chaining when tasks require multiple reasoning steps

- Use routing when inputs vary significantly in type or complexity

- Use parallelization when tasks are independent and latency matters

- Use reflection when correctness and quality are critical

- Use tool use when external data or actions are required

- Use planning when tasks span multiple dependent steps

- Use multi-agent systems when specialization improves outcomes

- Use prioritization when multiple tasks compete under constraints (time, compute, dependencies)

- Use pattern selection and composition when designing full systems, especially when multiple patterns must be combined or adapted dynamically

-

The choice is not binary. Most real systems use a combination of these patterns, selected and orchestrated based on task requirements and constraints.

The unifying principle

-

The unifying idea across all these patterns is control. They introduce structure into how models are used, transforming them from passive generators into components of a controlled execution system.

-

Instead of asking “what should the model output?”, agentic systems ask “what should the system do next?”

-

Prioritization refines this further into “what should the system do next given constraints?”, while pattern selection elevates it to “what system should be constructed to solve this class of problems?”

-

This shift, from output generation to action selection and system design, is what enables the patterns in this primer to work together as a cohesive whole.

Prompt Chaining

-

Prompt chaining is a foundational agentic design pattern that transforms how complex problems are solved with language models. Rather than relying on a single, monolithic prompt, it decomposes a task into a sequence of smaller, structured steps, where each step feeds into the next. This approach shifts systems away from fragile one-shot reasoning toward controlled, multi-stage execution that is more reliable, interpretable, and scalable.

-

At its core, prompt chaining operationalizes the idea that complex reasoning is best handled incrementally. Each step focuses on a specific sub-problem, reducing the cognitive load on the model and improving overall performance. This principle is supported by findings from Chain-of-Thought Prompting (Wei et al., 2022), which demonstrate that breaking reasoning into intermediate steps significantly enhances accuracy on complex tasks.

-

More broadly, prompt chaining reflects a shift in how language models are conceptualized: not as monolithic problem solvers, but as components within a structured computation graph. In this paradigm, reasoning is distributed across multiple steps and can be integrated with external tools and persistent state, aligning with the evolution toward agentic systems.

-

Because it introduces structure and control while remaining relatively simple to implement, prompt chaining often serves as the entry point into agentic design.

Why prompt chaining is needed

-

Single-prompt approaches often fail when tasks become multi-step or require structured reasoning. These failures arise from several well-known limitations:

- Instruction overload: Large prompts with multiple constraints cause the model to ignore or misinterpret parts of the task

- Context dilution: Important details get lost as prompt length increases

- Error amplification: Mistakes in early reasoning cannot be corrected mid-process

- Lack of control: There is no way to inspect or guide intermediate steps

-

Prompt chaining addresses these issues by explicitly structuring the reasoning process into discrete stages. Each stage has a well-defined input and output, allowing the system to validate, transform, or enrich information before passing it forward.

The structure of a prompt chain

-

A prompt chain can be viewed as a directed sequence of transformations:

\[x_0 \rightarrow f_1(x_0) = x_1 \rightarrow f_2(x_1) = x_2 \rightarrow \cdots \rightarrow f_n(x_{n-1}) = x_n\]- where each \(f_i\) represents a prompt-driven transformation applied by the model.

-

This structure introduces modularity into the system:

- Each step can be independently designed and optimized

- Intermediate outputs can be inspected and debugged

- External tools can be inserted between steps

- Different models can be used for different stages

-

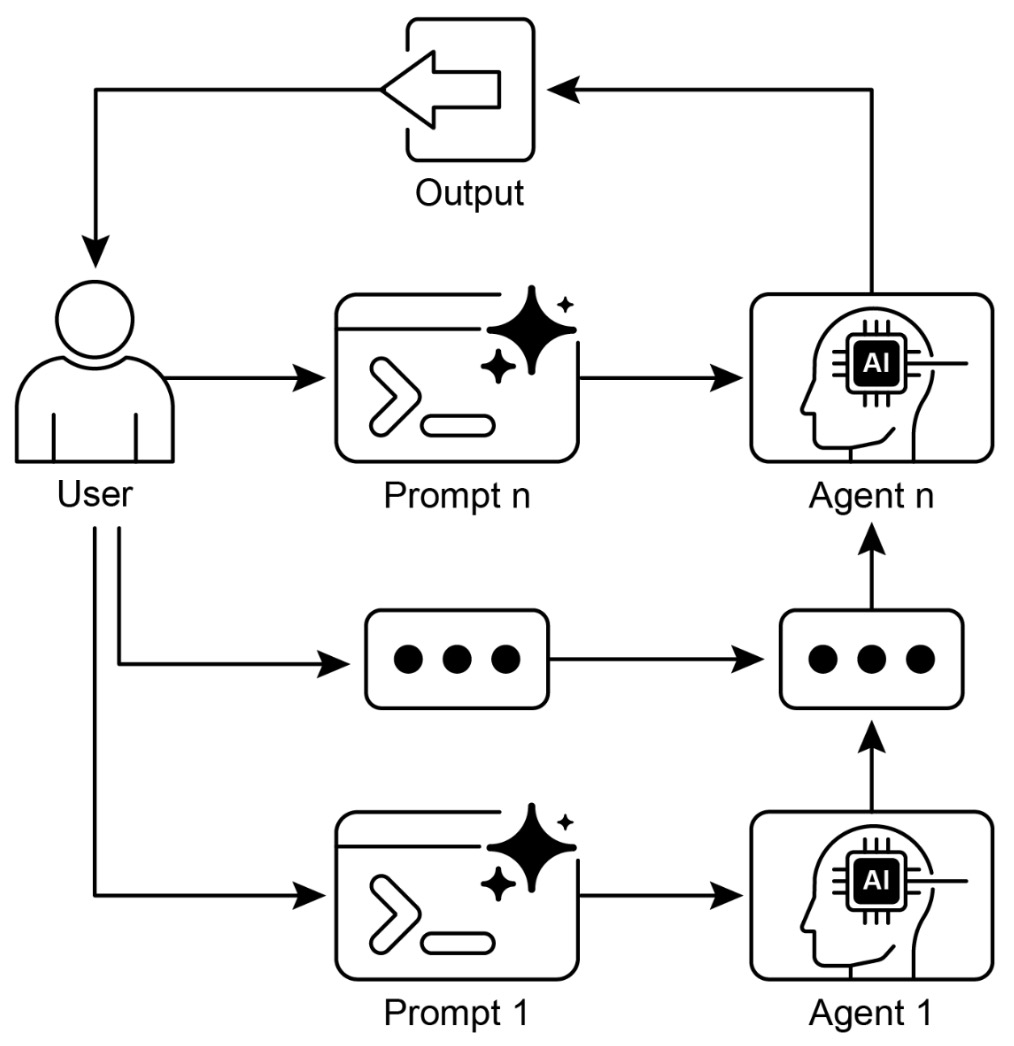

The result is a pipeline that behaves more like a program than a single inference call. The following figure illustrates the prompt chaining pattern, where agents receive a series of prompts from the user, with the output of each agent serving as the input for the next in the chain.

Example

-

Consider a task such as generating a research summary from raw documents. A single prompt might attempt to:

- Extract key points

- Organize them

- Generate a coherent summary

-

In a chained approach, this becomes:

- Extract key facts from the document

- Cluster facts into themes

- Generate a structured outline

- Produce the final summary

-

Each step reduces ambiguity and improves control over the output.

Implementation

- LangChain provides a natural abstraction for prompt chaining through composable chains. Each component in the chain transforms input into output, allowing pipelines to be constructed declaratively.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Step 1: Extract key points

extract_prompt = ChatPromptTemplate.from_messages([

("system", "Extract key facts from the following text."),

("human", "{input_text}")

])

# Step 2: Organize into themes

organize_prompt = ChatPromptTemplate.from_messages([

("system", "Group the following facts into themes."),

("human", "{facts}")

])

# Step 3: Generate summary

summary_prompt = ChatPromptTemplate.from_messages([

("system", "Write a concise summary from these themes."),

("human", "{themes}")

])

extract_chain = extract_prompt | llm | StrOutputParser()

organize_chain = organize_prompt | llm | StrOutputParser()

summary_chain = summary_prompt | llm | StrOutputParser()

# Execute chain

text = "AI agents are systems that can reason, act, and adapt..."

facts = extract_chain.invoke({"input_text": text})

themes = organize_chain.invoke({"facts": facts})

summary = summary_chain.invoke({"themes": themes})

print(summary)

- This example demonstrates how each stage isolates a specific responsibility. The system becomes easier to debug and extend, since intermediate outputs can be inspected or modified.

Enhancing chains with tools

-

Prompt chains are not limited to model-only transformations. External tools can be inserted between steps to enrich the workflow.

-

For example:

- A retrieval step can fetch relevant documents

- A database query can validate extracted facts

- An API call can provide real-time data

-

This hybrid approach is closely related to Retrieval-Augmented Generation by Lewis et al. (2020), where retrieval is integrated into the generation pipeline to improve factual accuracy.

-

In practice, this turns a prompt chain into a flexible workflow that combines reasoning with external capabilities.

Prompt chaining as a building block for agents

-

Prompt chaining is more than a technique for structuring prompts. It is a foundational building block for agentic systems.

-

Many higher-level patterns rely on chaining:

- Planning uses chains to decompose tasks into subgoals

- Reflection uses chains to critique and refine outputs

- Routing uses chains to decide which path to take

- Tool use often involves chaining reasoning with action

-

In this sense, prompt chaining provides the scaffolding for more advanced behaviors. It enables systems to simulate structured thought processes and execute them reliably.

Failure modes

-

While powerful, prompt chaining introduces its own challenges:

- Latency: Multiple steps increase response time

- Cost: Each step requires an additional model call

- Error propagation: Incorrect outputs can cascade through the chain

- Over-fragmentation: Too many steps can make the system unnecessarily complex

-

These trade-offs must be carefully managed. In practice, effective chains strike a balance between decomposition and efficiency.

-

One common mitigation strategy is to validate intermediate outputs before passing them forward. Another is to selectively merge steps when they are tightly coupled.

Routing

-

Routing is an agentic design pattern that enables a system to dynamically select the most appropriate path, model, tool, or sub-agent based on the characteristics of the input. Instead of applying a single fixed workflow to every request, routing introduces conditional logic that directs tasks to specialized components, improving both performance and efficiency.

-

At a fundamental level, routing transforms an otherwise linear pipeline into a decision-driven system. This aligns with the broader principle that intelligence in complex systems often emerges not from uniform processing, but from specialization and selective execution.

Why routing is needed

-

As systems grow in complexity, a single model or workflow becomes insufficient for handling diverse inputs. Different tasks may require:

- Different reasoning strategies

- Different tools or APIs

- Different levels of computational cost

- Different domain expertise

-

Without routing, systems either overuse expensive resources or underperform on specialized tasks.

-

Routing addresses this by introducing a decision layer that determines how each input should be handled. This allows systems to:

- Improve accuracy by delegating to specialized components

- Reduce cost by using simpler models when appropriate

- Increase flexibility by supporting multiple workflows

-

This idea is closely related to modular AI systems and mixture-of-experts architectures. For example, Switch Transformers by Fedus et al. (2021) demonstrate how routing inputs to specialized subnetworks improves scalability and efficiency in large models.

The routing decision function

-

At its core, routing can be expressed as a decision function:

\[r(x) \rightarrow i\]- where \(x\) is the input and \(i\) is the selected route or component.

-

This decision can be implemented in several ways:

- A rule-based classifier

- A lightweight model

- A language model itself

- A hybrid of heuristics and learned signals

-

The output of the routing step determines which downstream process will handle the task.

-

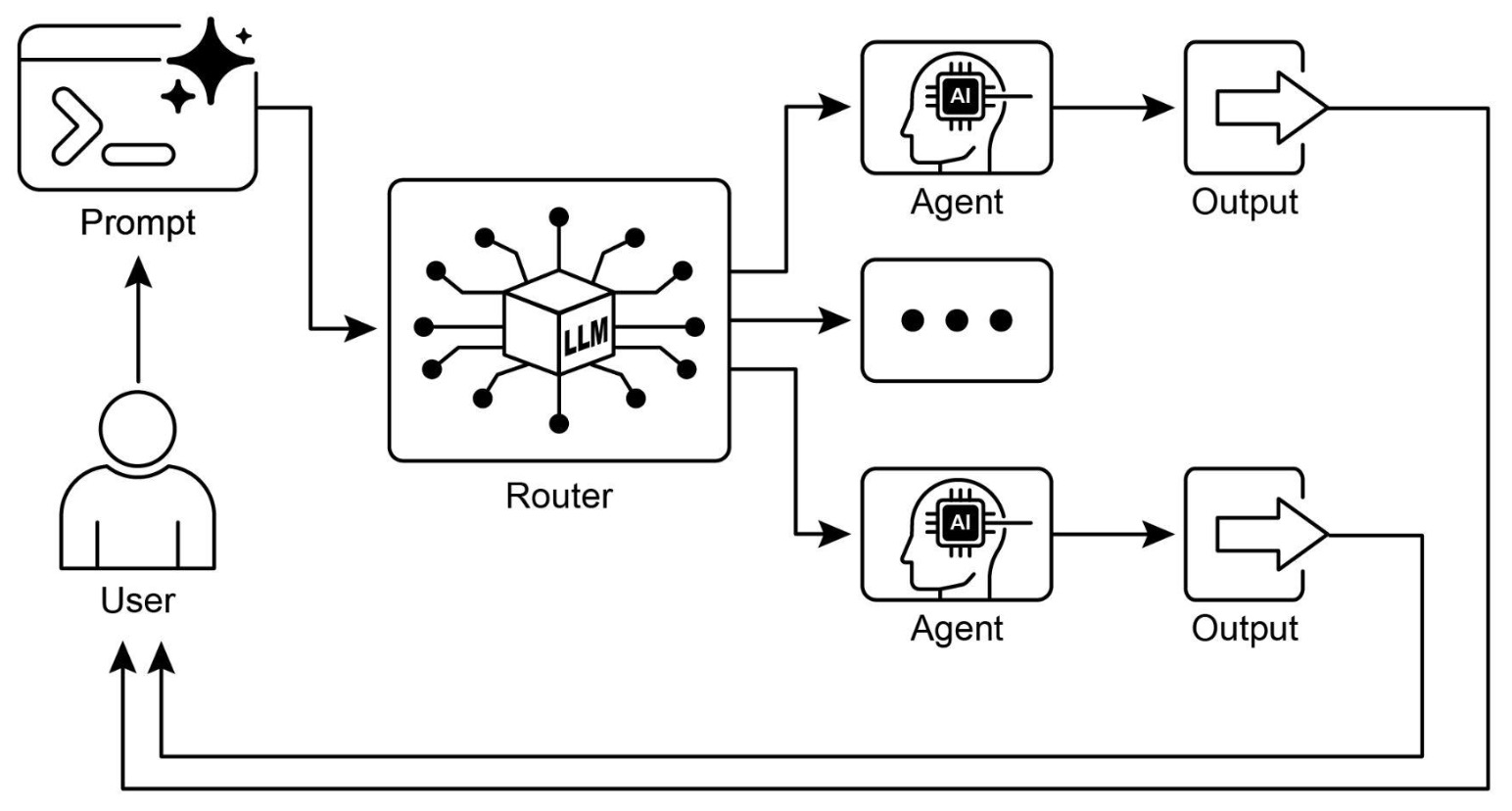

The following figure shows the routing pattern where inputs are directed to different processing paths based on classification using an LLM as a router.

Types of routing

-

Routing can take several forms depending on the system design.

-

Input-based routing:

-

The system analyzes the input and decides which path to take. For example:

- Questions about math are routed to a symbolic solver

- Questions about current events are routed to a retrieval pipeline

- Creative writing tasks are routed to a generative model

-

-

Tool routing:

-

The system selects which tool or API to use based on the task. This is common in agent systems where multiple tools are available.

-

This behavior is closely related to the mechanisms explored in Toolformer by Schick et al. (2023), where models learn when to invoke external tools.

-

-

Model routing:

-

Different models are used depending on task complexity:

- Lightweight models for simple queries

- Larger models for complex reasoning

-

This enables cost-performance optimization in production systems.

-

-

Agent routing:

- Tasks are delegated to different agents, each with a specialized role. This becomes particularly important in multi-agent systems.

Example

-

Consider a system that handles customer support queries. Without routing, all queries are processed the same way. With routing:

- Billing issues are sent to a financial agent

- Technical issues are sent to a troubleshooting agent

- General inquiries are handled by a conversational agent

-

This improves both response quality and system efficiency.

Implementation

- LangChain supports routing through router chains and conditional logic. A common approach is to use a classification step to determine the route.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Router prompt

router_prompt = ChatPromptTemplate.from_messages([

("system", "Classify the user query into one of: math, search, or general."),

("human", "{query}")

])

router_chain = router_prompt | llm | StrOutputParser()

def route(query):

route = router_chain.invoke({"query": query}).strip().lower()

return route

# Define handlers

def math_handler(query):

return f"Solving math problem: {query}"

def search_handler(query):

return f"Searching for: {query}"

def general_handler(query):

return f"General response: {query}"

# Routing logic

def handle_query(query):

route_type = route(query)

if "math" in route_type:

return math_handler(query)

elif "search" in route_type:

return search_handler(query)

else:

return general_handler(query)

print(handle_query("What is 25 * 17?"))

- This example demonstrates how a lightweight routing decision can direct queries to different handlers. In more advanced systems, each handler could itself be a complex chain or agent.

Routing with chains and tools

-

Routing becomes more powerful when combined with other patterns:

- With prompt chaining: Different chains can be selected dynamically

- With tool use: The system can choose the most appropriate tool

- With planning: Routing decisions can be made at multiple stages

- With multi-agent systems: Tasks can be distributed across agents

-

This composability makes routing a central mechanism in agent orchestration.

Failure modes

-

Routing introduces new challenges:

- Misclassification: Incorrect routing leads to poor results

- Ambiguity: Some inputs may not clearly map to a single route

- Overhead: The routing step adds latency and cost

- Fragmentation: Too many routes can make the system difficult to manage

-

To mitigate these issues:

- Use confidence thresholds and fallback paths

- Allow multiple routes for ambiguous inputs

- Continuously evaluate routing accuracy

- Keep routing logic interpretable when possible

Parallelization

-

Parallelization is an agentic design pattern that enables systems to execute multiple independent tasks simultaneously rather than sequentially. By distributing work across parallel branches, the system improves latency, throughput, and scalability while maintaining the ability to recombine results into a coherent output.

-

This pattern reflects a broader principle in intelligent systems: when tasks are independent or loosely coupled, executing them concurrently leads to significant efficiency gains. In agentic systems, where workflows often involve multiple sub-tasks such as retrieval, reasoning, validation, or generation, parallelization becomes a natural extension of prompt chaining and routing.

Why parallelization is needed

-

Sequential execution introduces unnecessary delays when tasks do not depend on each other. For example:

- Retrieving information from multiple sources

- Generating multiple candidate responses

- Evaluating outputs using different criteria

- Processing multiple inputs in batch

-

If these steps are executed one after another, total latency becomes the sum of all execution times. Parallelization reduces this to the maximum execution time among tasks:

\[T_{\text{parallel}} \approx \max(T_1, T_2, \dots, T_n)\]- instead of:

-

This reduction can be substantial in real-world systems, especially when individual steps involve network calls or model inference.

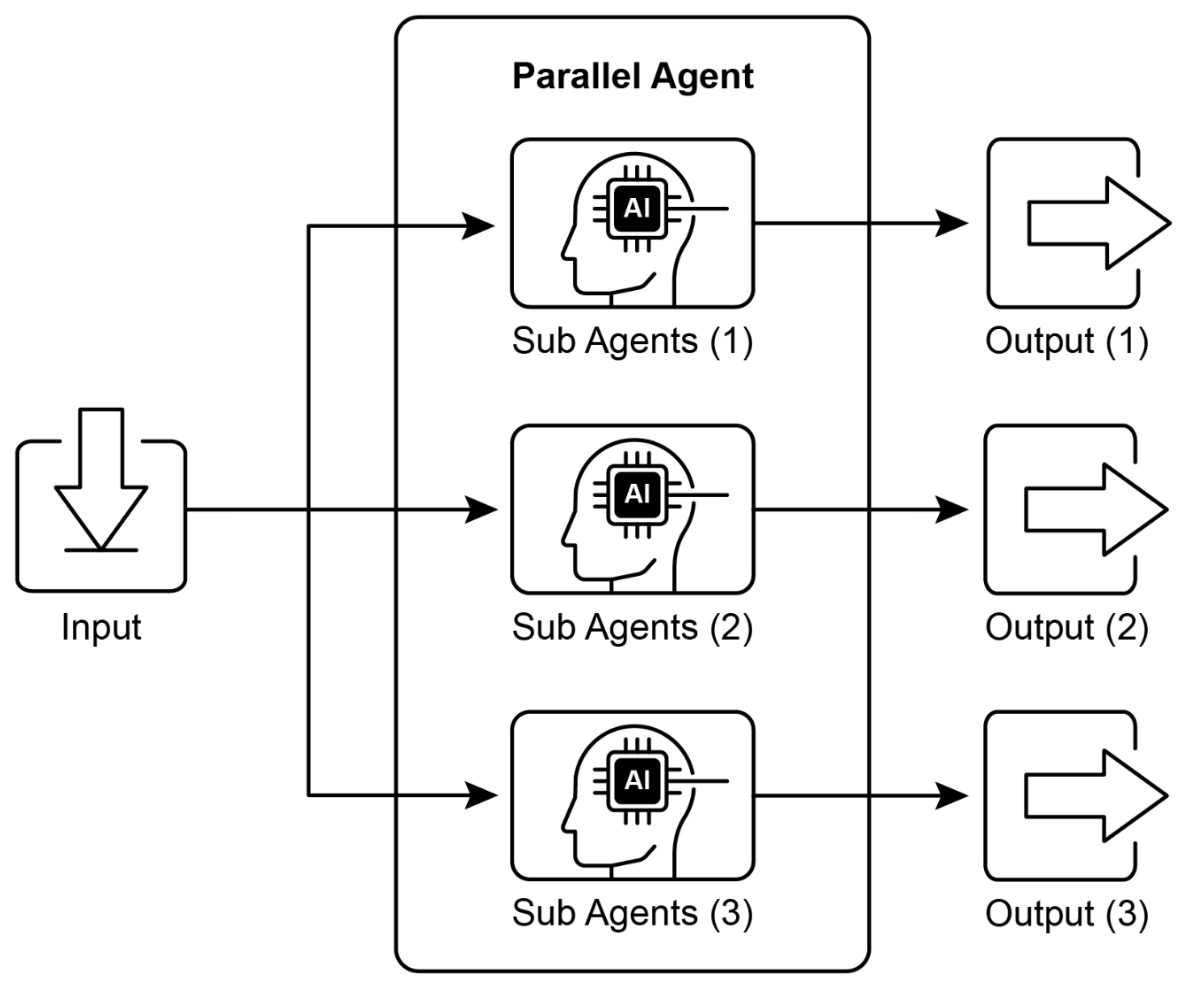

The following figure shows parallel execution of independent tasks using sub-agents and aggregation of their outputs.

Forms of parallelization

-

Parallelization can be applied in several ways depending on the system design.

-

Task parallelism:

-

Different tasks are executed simultaneously. For example:

- Running multiple retrieval queries across different databases

- Generating answers using different prompts

- Evaluating outputs with multiple scoring functions

-

Each task operates independently and produces its own output.

-

-

Data parallelism:

-

The same operation is applied to multiple inputs in parallel. For example:

- Processing multiple documents simultaneously

- Running the same prompt across different data samples

-

This is useful for scaling workloads across large datasets.

-

-

Model parallelism:

-

Different models are used simultaneously to process the same input. This can improve robustness by combining diverse perspectives.

-

This idea connects to ensemble methods in machine learning, where combining multiple models often yields better performance. For example, Deep Ensembles by Lakshminarayanan et al. (2017) demonstrate improved predictive uncertainty and robustness by aggregating outputs from multiple models.

-

Example

-

Consider a system that generates multiple candidate answers to a question and then selects the best one. Instead of generating answers sequentially, the system can:

- Generate multiple responses in parallel

- Evaluate each response independently

- Select or combine the best outputs

-

This approach improves both speed and quality, as it allows exploration of multiple reasoning paths simultaneously.

Implementation

- LangChain supports parallel execution through constructs like RunnableParallel, which allows multiple chains to run concurrently.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.runnables import RunnableParallel

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Define different reasoning strategies

prompt_1 = ChatPromptTemplate.from_messages([

("system", "Answer concisely."),

("human", "{question}")

])

prompt_2 = ChatPromptTemplate.from_messages([

("system", "Answer with detailed reasoning."),

("human", "{question}")

])

chain_1 = prompt_1 | llm | StrOutputParser()

chain_2 = prompt_2 | llm | StrOutputParser()

parallel_chain = RunnableParallel(

concise=chain_1,

detailed=chain_2

)

result = parallel_chain.invoke({"question": "What is reinforcement learning?"})

print(result)

- This example runs two different reasoning strategies in parallel and returns both outputs. A downstream step could then select or merge the best result.

Aggregation and synchronization

-

Parallelization requires a mechanism to combine results from multiple branches. This step is often referred to as aggregation.

-

Common aggregation strategies include:

- Selection: Choose the best output based on a scoring function

- Voting: Combine outputs using majority or weighted voting

- Synthesis: Merge outputs into a unified response

- Filtering: Remove low-quality or inconsistent results

-

This step is critical because parallelization without proper aggregation can lead to fragmented or inconsistent outputs.

Parallelization in agentic systems

-

Parallelization is particularly powerful when combined with other patterns:

- With prompt chaining: Multiple branches can process different aspects of a task

- With routing: Different routes can be executed concurrently

- With multi-agent systems: Multiple agents can work simultaneously on different subtasks

- With retrieval: Multiple sources can be queried in parallel

-

This enables systems to handle complex workflows efficiently while maintaining modularity.

Failure modes

-

While parallelization improves performance, it introduces additional complexity:

- Resource contention: Parallel tasks may compete for computational resources

- Synchronization overhead: Combining results adds complexity

- Inconsistent outputs: Different branches may produce conflicting results

- Cost increase: Running multiple tasks simultaneously increases usage

-

To mitigate these issues:

- Limit the number of parallel branches

- Use lightweight models for exploratory branches

- Apply strong aggregation and validation mechanisms

- Monitor system performance and resource usage

Reflection

-

Reflection is an agentic design pattern that enables a system to evaluate and improve its own outputs through iterative self-critique. Rather than treating an initial response as final, the system introduces a structured feedback loop in which outputs are analyzed, corrected, and refined. This transforms the system from a one-pass generator into an adaptive process capable of improving its performance within the scope of a single task.

-

At its core, reflection operationalizes a simple but powerful idea: reasoning improves when a system is given the opportunity to revisit and critique its own work. This mirrors human problem-solving, where first drafts are rarely final and iterative revision leads to stronger, more accurate outcomes. By incorporating this loop, systems can identify weaknesses, correct errors, and enhance clarity without external intervention.

-

More broadly, reflection represents a shift from static generation to iterative improvement. It serves as a built-in mechanism for quality control, increasing reliability and robustness by enabling systems to detect and address their own mistakes. In the context of agentic design patterns, this makes reflection a foundational capability—one that brings machine reasoning closer to human-like processes, where refinement and revision are essential.

-

Ultimately, reflection allows systems to “learn” within a task itself, even in the absence of explicit retraining. By continuously reassessing and improving their outputs, they become more adaptive, accurate, and effective problem-solvers.

Why reflection is needed

-

Even advanced models frequently produce outputs that are:

- Incomplete

- Inconsistent

- Hallucinated

-

Poorly structured

-

In a single-pass system, these issues persist because there is no mechanism for correction. Reflection introduces a second stage where the system evaluates its output against criteria such as correctness, completeness, and coherence.

- This idea is supported by research such as Self-Refine: Iterative Refinement with Self-Feedback by Madaan et al. (2023), which shows that iterative self-feedback significantly improves output quality across tasks.

The reflection loop

-

Reflection can be formalized as an iterative process:

\[y_0 = f(x), \quad y_{t+1} = g(y_t, x)\]-

where:

- \(f(x)\) generates an initial output

- \(g(y_t, x)\) evaluates and refines the output

-

-

This process can be repeated multiple times until a stopping condition is met, such as:

- A quality threshold

- A fixed number of iterations

- Convergence of outputs

-

The result is a progressively improved response.

-

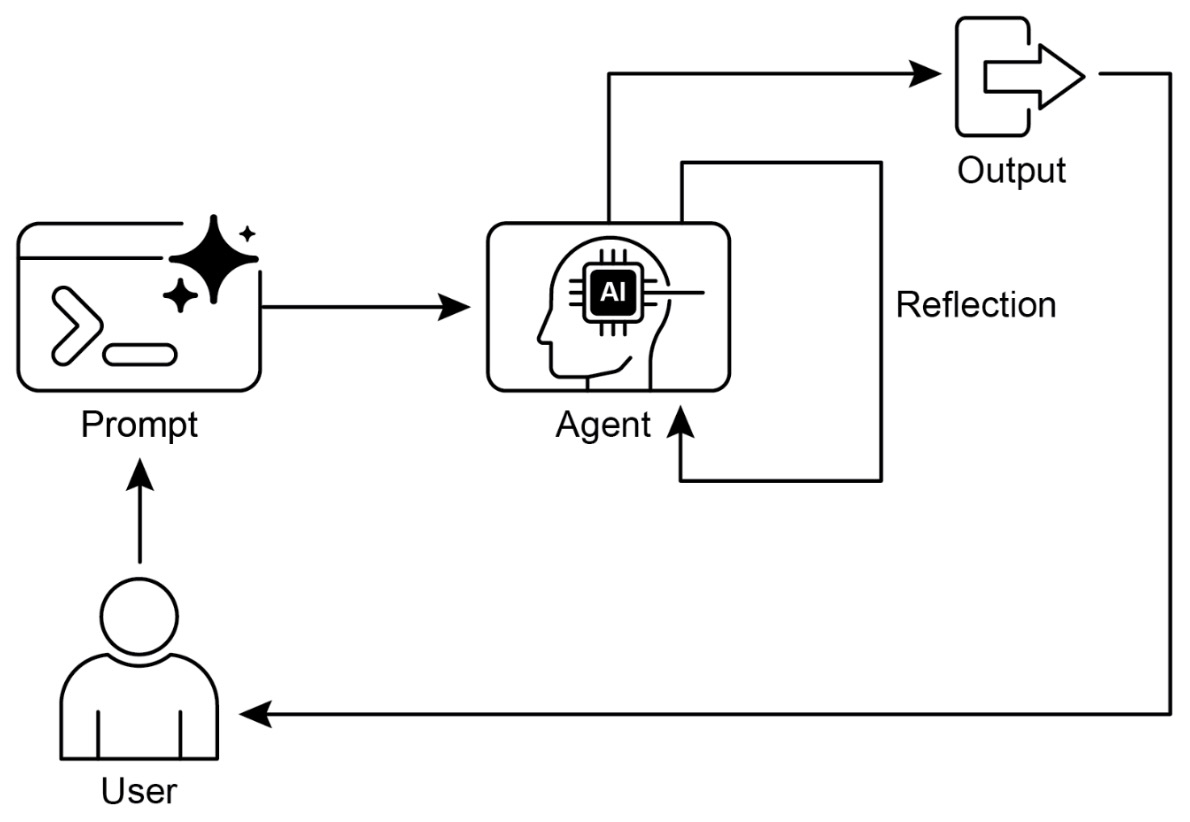

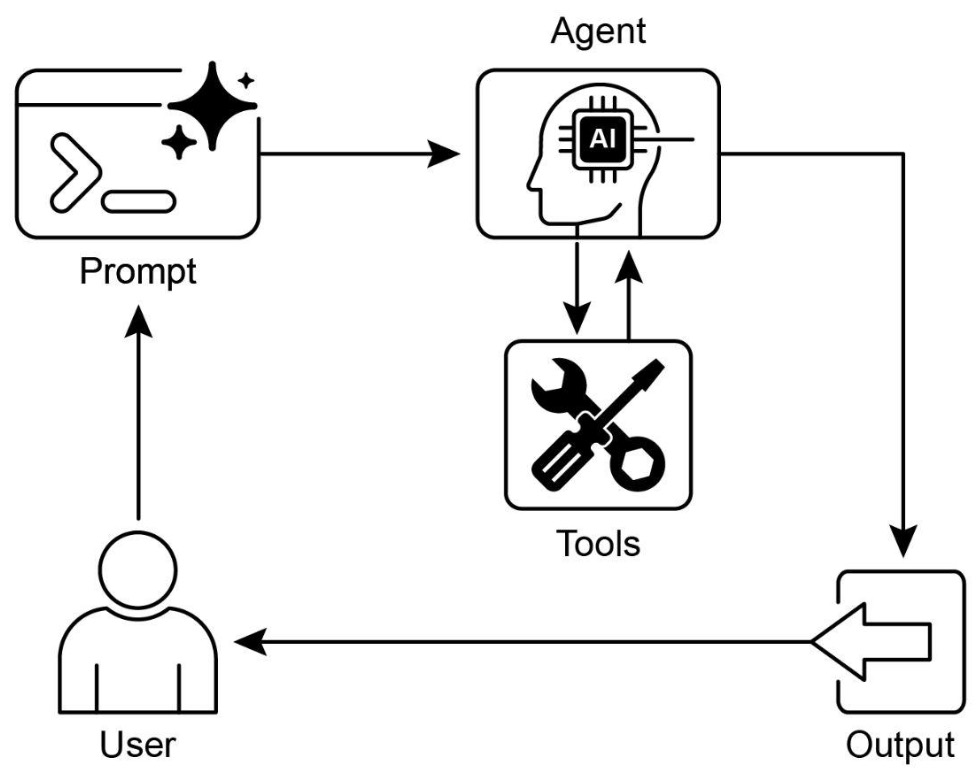

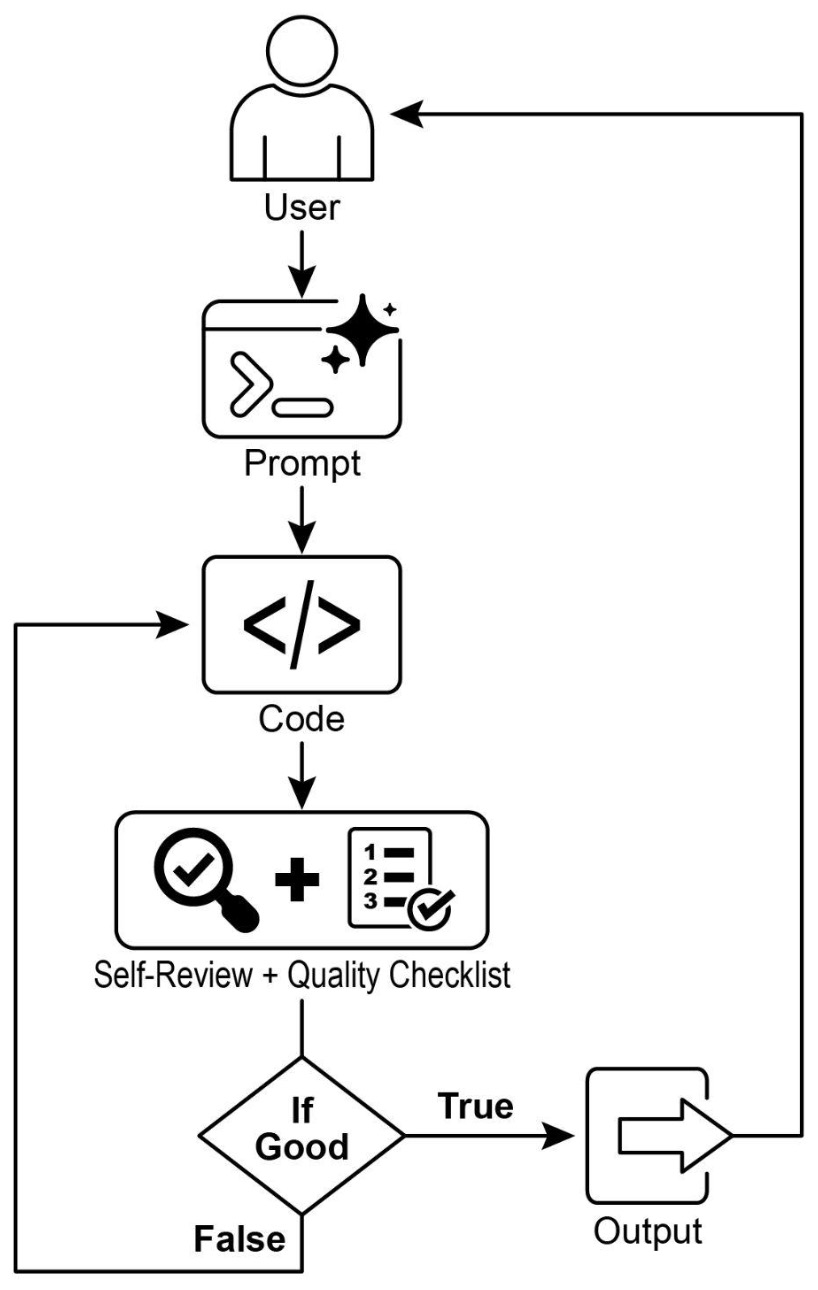

The following figure shows the self-reflection design pattern which undergoes iterative self-refinement with outputs being critiqued and improved over multiple passes.

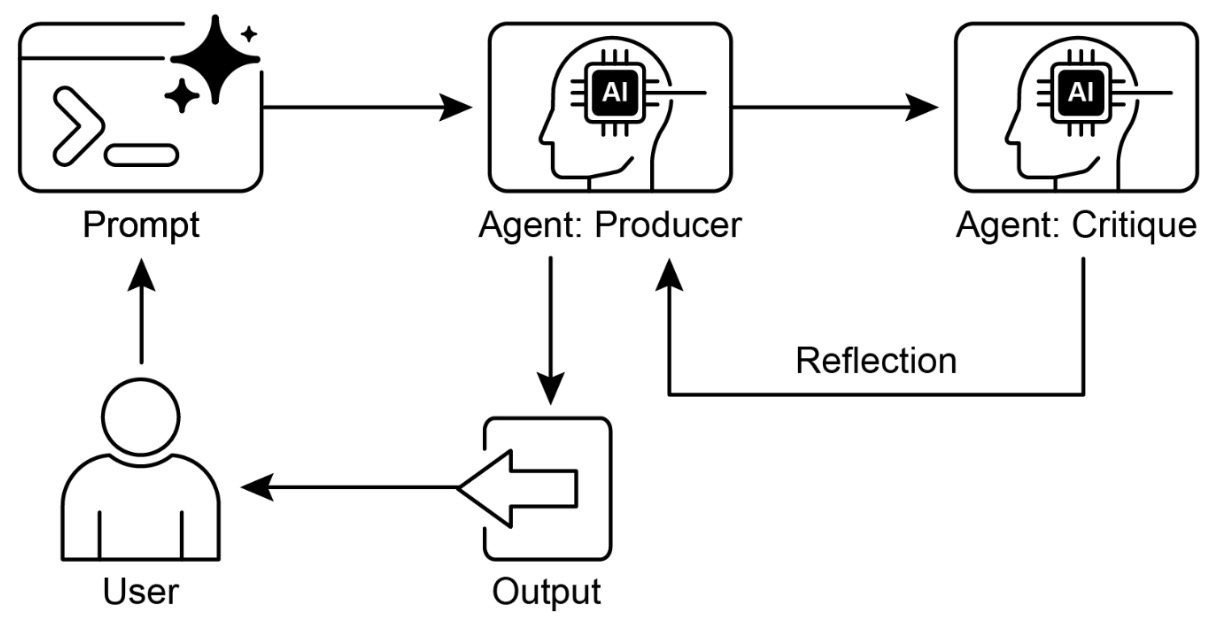

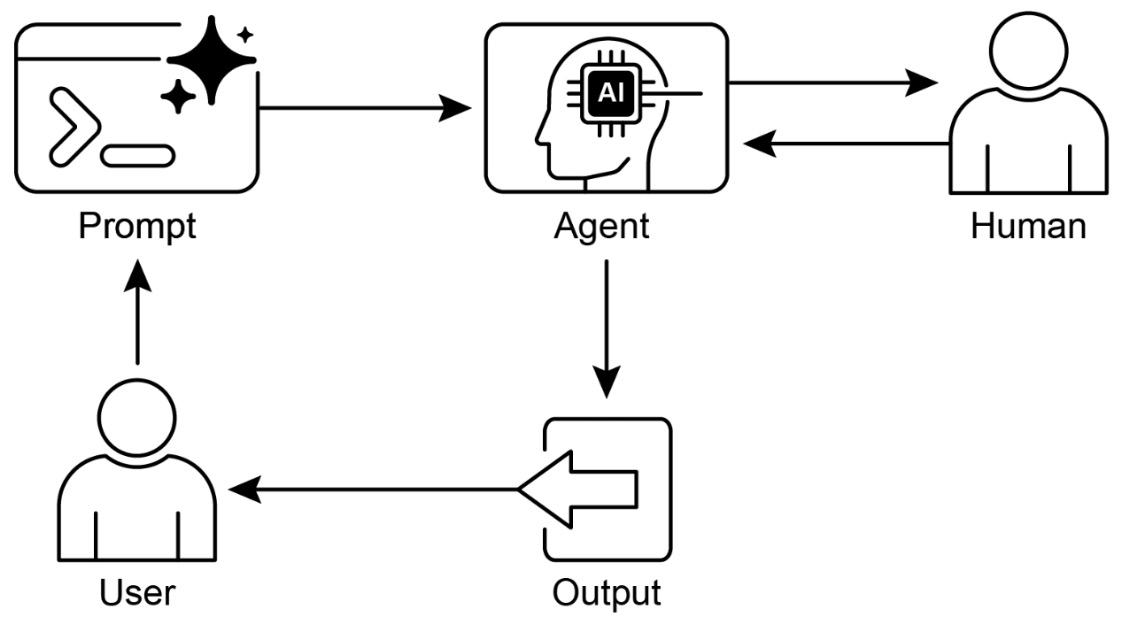

- The following figure shows the reflection design pattern with a producer and critique agent.

Types of reflection

-

Reflection can take several forms depending on how feedback is generated, as follows:

-

Self-critique:

-

The model evaluates its own output using a secondary prompt. For example:

- Identify errors in reasoning

- Check factual consistency

- Suggest improvements

-

-

External critique:

- A separate model or system evaluates the output. This can improve robustness by introducing diversity in evaluation.

-

Rule-based validation:

-

Outputs are checked against predefined constraints, such as:

- JSON schema validation

- Logical consistency checks

- Domain-specific rules

-

-

Human-in-the-loop reflection:

- A human provides feedback, which the system incorporates into subsequent iterations.

-

Example

-

Consider a system that generates code. A reflection-based workflow might:

- Generate initial code

- Analyze the code for errors or inefficiencies

- Revise the code based on feedback

- Repeat until the code meets quality criteria

-

This process significantly improves reliability compared to a single-pass generation.

Implementation

- LangChain can implement reflection by chaining generation and critique steps.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Step 1: Generate initial answer

generate_prompt = ChatPromptTemplate.from_messages([

("system", "Answer the question."),

("human", "{question}")

])

# Step 2: Critique answer

critique_prompt = ChatPromptTemplate.from_messages([

("system", "Critique the following answer for correctness and completeness."),

("human", "{answer}")

])

# Step 3: Improve answer

improve_prompt = ChatPromptTemplate.from_messages([

("system", "Improve the answer based on the critique."),

("human", "Answer: {answer}\nCritique: {critique}")

])

generate_chain = generate_prompt | llm | StrOutputParser()

critique_chain = critique_prompt | llm | StrOutputParser()

improve_chain = improve_prompt | llm | StrOutputParser()

question = "Explain how neural networks learn."

initial = generate_chain.invoke({"question": question})

critique = critique_chain.invoke({"answer": initial})

improved = improve_chain.invoke({

"answer": initial,

"critique": critique

})

print(improved)

- This example demonstrates a single iteration of reflection. In practice, this loop can be repeated multiple times for further refinement.

Reflection in agentic systems

-

Reflection plays a critical role in enabling agents to improve their behavior dynamically. It is often used in:

- Planning: Refining task decomposition

- Tool use: Verifying correctness of tool outputs

- Reasoning: Correcting logical errors

- Multi-agent systems: Providing feedback between agents

-

This aligns with the paradigm introduced in ReAct by Yao et al. (2022), where reasoning is continuously updated based on observations and intermediate results.

Failure modes

-

While reflection improves quality, it introduces trade-offs:

- Increased latency: Multiple iterations require additional model calls

- Cost overhead: Each refinement step adds computational cost

- Over-correction: Excessive refinement can degrade outputs

- Bias reinforcement: The model may reinforce its own mistakes

-

To mitigate these issues:

- Limit the number of reflection iterations

- Use structured evaluation criteria

- Introduce diversity in critique (e.g., multiple evaluators)

- Combine reflection with external validation

Tool Use

-

Tool use is an agentic design pattern that extends a system’s capabilities beyond its internal knowledge by enabling interaction with external functions, APIs, databases, and real-world environments. It transforms a language model from a purely reasoning engine into an action-oriented system capable of operating in practical contexts.

-

At its core, tool use embodies the principle that intelligence is not just about understanding what needs to be done, but also about executing those actions—whether that involves retrieving information, performing computations, or triggering workflows.

-

By bridging the gap between reasoning and execution, tool use shifts the role of AI from a static source of knowledge to a dynamic coordinator of capabilities. In agentic systems, this pattern is what allows models to move beyond simulation and actively engage with the world. As such, it represents a fundamental step in the evolution of AI: the point at which intelligence becomes operational, turning insight into real-world execution.

Why tool use is needed

-

Language models are inherently constrained:

- Their knowledge is limited to training data

- They cannot access real-time or proprietary information

- They cannot perform deterministic computations reliably

- They cannot directly interact with external systems

-

Tool use addresses these limitations by allowing the system to delegate specific tasks to specialized components.

-

For example:

- Use a search API to retrieve current information

- Use a calculator for precise numerical computation

- Query a database for structured data

- Call a service to execute transactions

-

This paradigm is strongly supported by research such as Toolformer by Schick et al. (2023), which demonstrates that models can learn to decide when and how to use tools, significantly improving performance on real-world tasks.

-

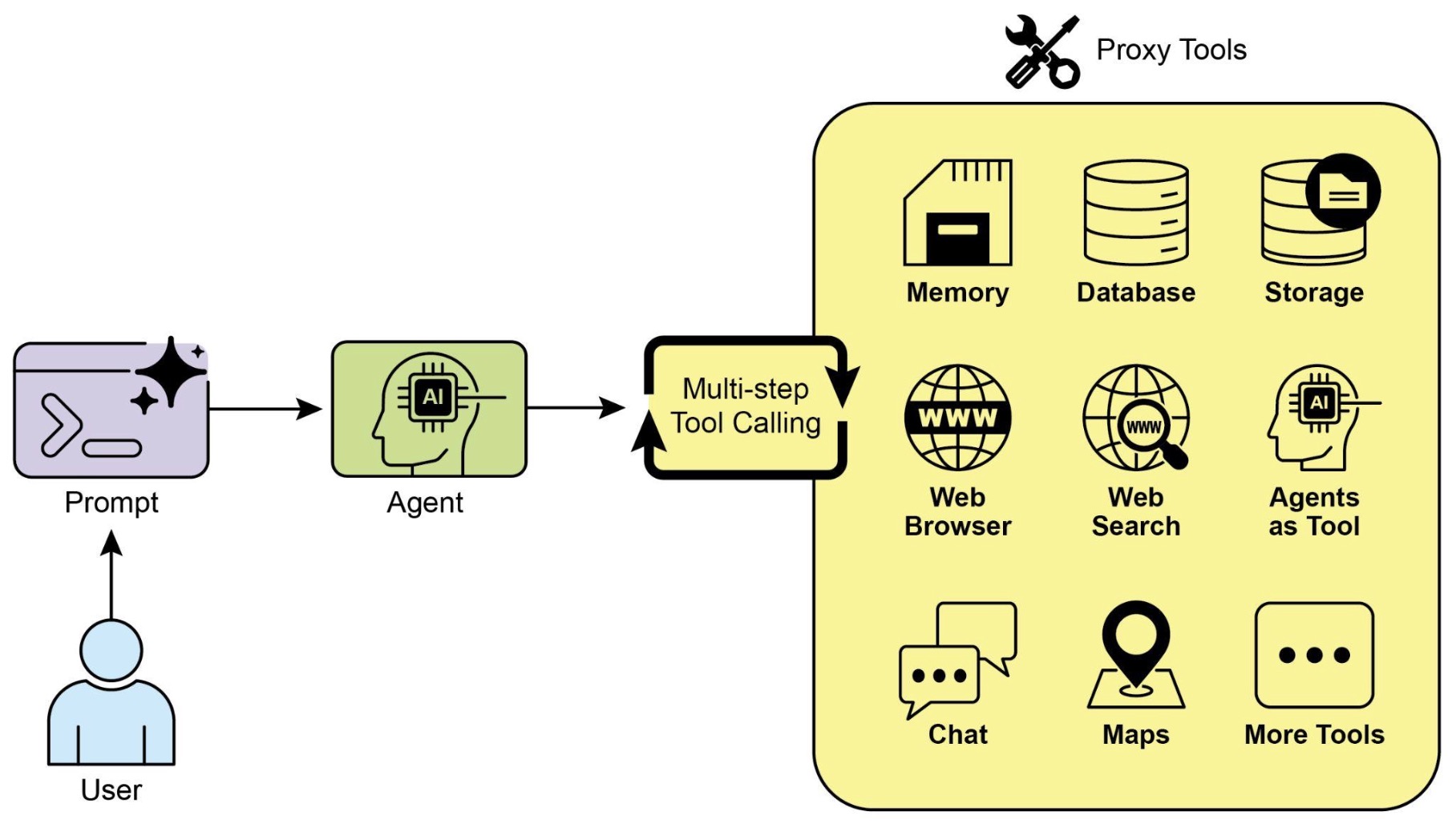

The following figure shows the integration of external tools into the agentic reasoning loop for action execution.

The tool interaction loop

- Tool use introduces an extended decision loop where the system must determine not only what to say, but what to do:

- After invoking a tool, the system observes the result and incorporates it into subsequent reasoning:

-

This creates a tight coupling between reasoning and execution, where actions directly influence future decisions.

-

This interaction pattern is central to modern agent frameworks and is exemplified by ReAct by Yao et al. (2022), where reasoning steps guide tool usage and observations refine subsequent reasoning.

-

The following figure shows the tool use design pattern.

Types of tools

-

Tools can take many forms depending on the application:

-

Information retrieval tools:

- Web search APIs

- Vector databases (RAG systems)

-

Knowledge bases

- These provide access to external knowledge and improve factual accuracy.

-

Computation tools:

- Calculators

- Code execution environments

-

Simulation engines

- These ensure correctness in tasks requiring precise computation.

-

Action tools:

- APIs for booking, payments, or transactions

- Workflow automation systems

-

Robotics interfaces

- These allow the system to affect the external world.

-

Validation tools:

- Schema validators

- Consistency checkers

-

Safety filters

- These ensure outputs meet required constraints.

-

Example

-

Consider a system tasked with answering a financial question: “What is the current stock price of AAPL, and how does it compare to last week?”

-

A tool-enabled system would:

- Recognize that real-time data is required

- Invoke a financial API to retrieve current and historical prices

- Compute the difference

- Generate a response

-

Without tool use, the model would either hallucinate or provide outdated information.

Implementation

- LangChain provides built-in abstractions for integrating tools into agent workflows.

from langchain.agents import initialize_agent, Tool

from langchain_openai import ChatOpenAI

# Define a simple calculator tool

def calculator(expression: str) -> str:

return str(eval(expression))

tools = [

Tool(

name="Calculator",

func=calculator,

description="Useful for solving math expressions"

)

]

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

agent = initialize_agent(

tools=tools,

llm=llm,

agent="zero-shot-react-description",

verbose=True

)

result = agent.run("What is (45 * 23) + 17?")

print(result)

- In this example, the agent decides when to invoke the calculator tool instead of attempting to compute the result internally. This improves both accuracy and reliability.

Tool selection and orchestration

-

A key challenge in tool use is deciding:

- Which tool to use

- When to use it

- How to interpret its output

-

This introduces a decision layer similar to routing, but focused specifically on action selection.

-

In more advanced systems, this can involve:

- Ranking multiple tools

- Composing multiple tool calls

- Handling tool failures and retries

-

This orchestration is central to building robust agentic systems.

Tool use in agentic systems

-

Tool use is deeply interconnected with other patterns:

- With routing: Selecting the appropriate tool

- With prompt chaining: Integrating tool outputs into multi-step workflows

- With reflection: Verifying and correcting tool results

- With planning: Sequencing multiple tool calls

-

This makes tool use one of the most critical enablers of real-world functionality.

Failure modes

-

Tool use introduces several challenges:

- Incorrect tool selection: The system may choose the wrong tool

- Tool misuse: Inputs to tools may be malformed

- Latency: External calls can be slow

- Error handling: Tools may fail or return unexpected results

-

To mitigate these issues:

- Provide clear tool descriptions

- Validate inputs and outputs

- Implement retries and fallbacks

- Monitor tool performance

Planning

-

Planning is an agentic design pattern that enables a system to break down a complex goal into a structured sequence of actions before execution. Instead of reacting myopically step by step, the system forms an explicit or implicit plan that guides its behavior across multiple steps, introducing foresight, coordination, and long-horizon reasoning.

-

At its core, planning shifts a system from reactive execution to goal-directed strategy. Rather than deciding only the immediate next action, the system reasons about how a sequence of actions can collectively achieve an objective. This marks a transition from local decision-making to a more global, strategic perspective.

-

By incorporating planning, agentic systems can anticipate dependencies, coordinate actions, and pursue goals with greater effectiveness. In this sense, planning is the pattern that transforms isolated actions into coherent strategy.

Why planning is needed

-

Reactive systems, even when combined with tools and reflection, often struggle with:

- Multi-step dependencies

- Long-horizon tasks

- Coordination across subtasks

- Efficient use of resources

-

Without planning, the system may:

- Take redundant or suboptimal actions

- Lose track of progress

- Fail to coordinate multiple steps effectively

-

Planning addresses these issues by introducing a structured representation of the task before execution begins.

-

This aligns with classical AI planning as well as modern LLM-based approaches. For example, Plan-and-Solve Prompting by Wang et al. (2023) shows that explicitly generating a plan before solving improves performance on complex reasoning tasks.

The planning process

-

Planning can be expressed as generating a sequence of actions:

\[\pi = (a_1, a_2, \dots, a_n)\]- where \(\pi\) is the plan and each \(a_i\) is an action or subtask.

-

Execution then follows:

-

The key distinction is that the sequence \(\pi\) is generated before or during execution, rather than emerging purely step-by-step.

-

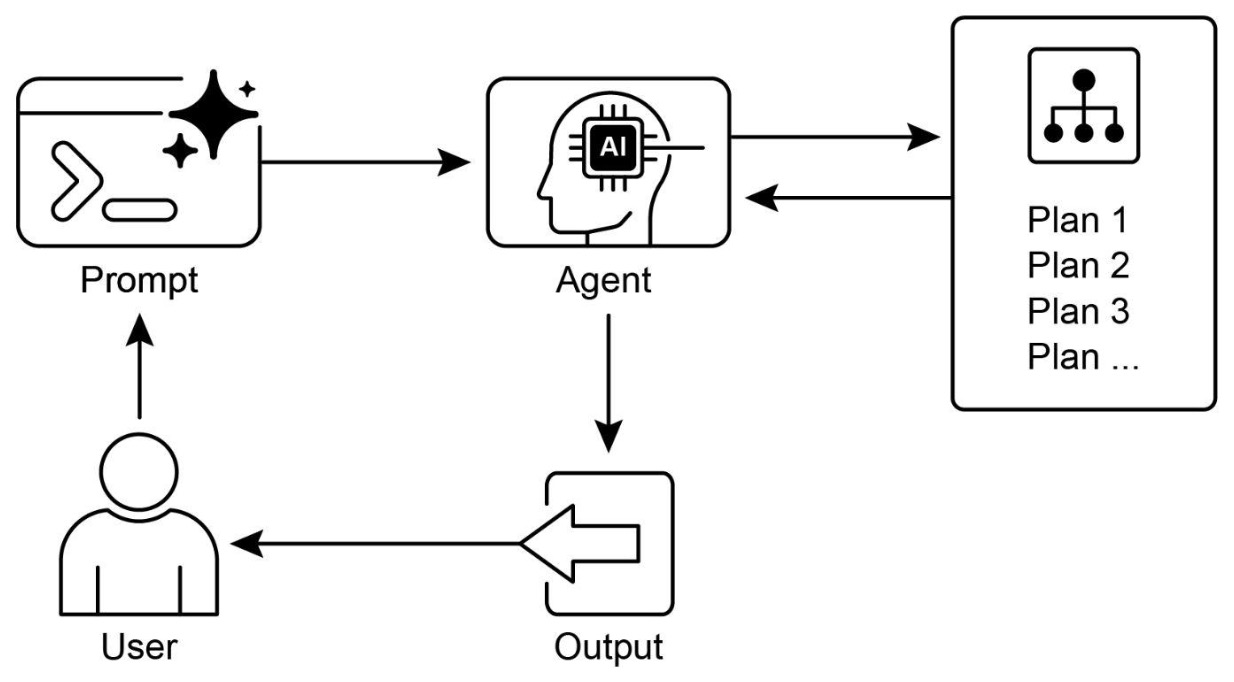

The following figure shows the planning design pattern which involves task decomposition into a structured plan before execution.

Types of planning

-

Planning can take several forms depending on how explicit and structured the plan is.

-

Static planning:

- The system generates a full plan upfront and executes it sequentially. This works well for well-defined tasks but can be brittle if conditions change.

-

Dynamic planning:

- The system updates its plan during execution based on new information. This introduces adaptability and resilience.

-

Hierarchical planning:

- Tasks are decomposed into subgoals and sub-subgoals, forming a tree structure. This is useful for complex problems with multiple layers of abstraction.

-

Iterative planning:

- The system alternates between planning and execution, refining its plan as it progresses.

-

These approaches reflect different trade-offs between structure and flexibility.

Example

-

Consider a task such as: “Plan a trip to Paris for three days.”

-

A planning-based system might:

- Identify key components: travel, accommodation, itinerary

- Break each component into subtasks

- Sequence the tasks logically

- Execute each step using tools (e.g., booking APIs, search)

-

Without planning, the system might jump between unrelated steps or miss important dependencies.

Implementation

- Planning can be implemented in LangChain by separating plan generation from execution.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Step 1: Generate plan

plan_prompt = ChatPromptTemplate.from_messages([

("system", "Break the task into a sequence of steps."),

("human", "{task}")

])

# Step 2: Execute each step

execute_prompt = ChatPromptTemplate.from_messages([

("system", "Execute the following step."),

("human", "{step}")

])

plan_chain = plan_prompt | llm | StrOutputParser()

execute_chain = execute_prompt | llm | StrOutputParser()

task = "Prepare a report on renewable energy trends."

plan = plan_chain.invoke({"task": task})

steps = plan.split("\n")

results = []

for step in steps:

result = execute_chain.invoke({"step": step})

results.append(result)

print(results)

- This example demonstrates a simple two-phase approach: first generate a plan, then execute each step sequentially.

Planning with tools and feedback

-

Planning becomes more powerful when combined with other patterns:

- With tool use: Each step in the plan can invoke specific tools

- With reflection: The plan can be evaluated and refined

- With routing: Different steps can be assigned to specialized components

- With parallelization: Independent steps can be executed concurrently

-

This creates a flexible system where planning guides execution but does not rigidly constrain it.

Planning in agentic systems

-

Planning is a key enabler of advanced agent behavior:

- It allows agents to handle long-term objectives

- It improves coordination across multiple actions

- It reduces inefficiencies in execution

- It enables proactive behavior

-

In multi-agent systems, planning often involves coordination across agents, where different agents are assigned different parts of the plan.

Failure modes

-

Planning introduces its own challenges:

- Overplanning: Excessive detail can reduce flexibility

- Plan brittleness: Static plans may fail in dynamic environments

- Error propagation: Flawed plans lead to flawed execution

- Complexity: Managing plans adds overhead

-

To mitigate these issues:

- Use dynamic or iterative planning

- Incorporate feedback loops

- Validate plans before execution

- Allow replanning when conditions change

Prioritization

- In complex, dynamic environments, agentic systems constantly face multiple competing actions, conflicting goals, and limited resources. Without a structured way to decide what to do next, they risk inefficiency, delays, or even complete failure to achieve their objectives. The prioritization design pattern addresses this challenge by enabling agents to evaluate, rank, and select tasks according to well-defined criteria, ensuring that effort is directed toward the most impactful actions.

- At its core, prioritization transforms an agent from a reactive executor into a strategic decision-maker: rather than treating all tasks equally, the agent continuously determines what matters most and aligns its behavior with overarching goals and constraints. As a result, prioritization becomes a cornerstone of agentic intelligence, allowing agents not just to act, but to decide what is worth acting on. By continuously evaluating and reordering tasks, agents demonstrate a form of strategic reasoning that closely mirrors human decision-making, a capability that is essential for building systems that are not only functional, but truly effective in real-world, high-complexity environments.

Core idea

-

Prioritization introduces a decision function over a set of candidate tasks:

\[a^* = \arg\max_{a \in \mathcal{A}} \mathcal{S}(a)\]-

where:

- \(\mathcal{A}\) is the set of possible actions or tasks

- \(\mathcal{S}(a)\) is a scoring function based on prioritization criteria

- \(a^*\) is the selected highest-priority action

-

-

This formalization highlights that prioritization is fundamentally an optimization problem under constraints.

Key components of prioritization

-

Effective prioritization typically involves four key components:

-

Criteria definition:

-

Agents define evaluation criteria to assess tasks. Common criteria include:

- Urgency: how time-sensitive the task is

- Importance: impact on primary objectives

- Dependencies: whether other tasks rely on it

- Resource availability: readiness of tools or data

- Cost-benefit tradeoff: effort versus expected outcome

- User preferences: personalization signals

-

These criteria define the agent’s notion of “value”.

-

-

Task evaluation:

-

Each candidate task is evaluated against the defined criteria. This can range from:

- Rule-based scoring (e.g., priority levels P0, P1, P2)

- Heuristic functions

- LLM-based reasoning over task descriptions

-

This step transforms qualitative information into comparable scores.

-

-

Scheduling and selection:

-

Based on evaluations, the agent selects the next action or sequence of actions. This may involve:

- Priority queues

- Greedy selection

- Integration with planning systems

-

This is where prioritization connects directly with planning and execution.

-

-

Dynamic re-prioritization:

-

As new information arrives or conditions change, priorities must be updated. This enables:

- Responsiveness to new events

- Adaptation to deadlines

- Recovery from failures or delays

-

Dynamic re-prioritization is essential for real-world environments where conditions are non-static.

-

-

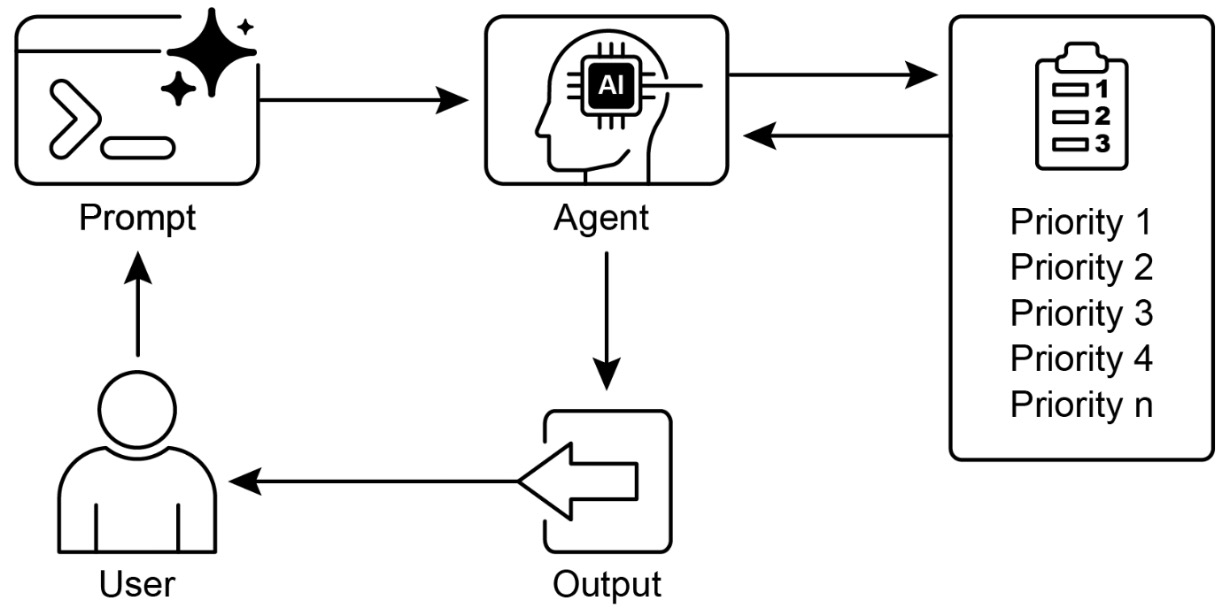

The following figure shows the prioritization design pattern and how tasks are evaluated and ordered based on defined criteria.

Levels of prioritization

-

Prioritization operates at multiple levels within an agentic system:

- Goal-level prioritization: selecting which high-level objective to pursue

- Plan-level prioritization: ordering sub-tasks within a plan

- Action-level prioritization: choosing the next immediate step

-

This multi-level structure mirrors hierarchical decision-making in human organizations.

Relationship to other patterns

-

Prioritization is deeply interconnected with other agentic design patterns:

- Planning: prioritization determines which plan steps execute first

- Routing: prioritization can influence which workflow or agent is selected

- Tool use: determines which tool invocation is most critical

- Goal monitoring: evaluates progress and adjusts focus

- Evaluation: provides signals that influence future prioritization

-

Together, these patterns form a decision-making backbone for the agent.

Real-world applications

-

Prioritization is fundamental across many domains:

- Customer support: urgent incidents (e.g., outages) are handled before routine requests

- Cloud computing: critical workloads receive resources before batch jobs

- Autonomous driving: collision avoidance overrides efficiency goals

- Financial trading: high-risk or high-reward trades are executed first

- Cybersecurity: severe threats are addressed before minor alerts

- Personal assistants: schedules and reminders are ordered by importance and timing

-

These examples demonstrate that prioritization is essential wherever decisions must be made under constraints.

Implementation

- The following example demonstrates a project manager agent that creates, prioritizes, and assigns tasks using tools.

from langchain_openai import ChatOpenAI

from langchain.agents import AgentExecutor, create_react_agent

from langchain_core.prompts import ChatPromptTemplate

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0.5)

prompt = ChatPromptTemplate.from_messages([

("system", """You are a Project Manager AI.

Always:

1. Create a task

2. Assign priority (P0 highest, P2 lowest)

3. Assign a worker

"""),

("human", "{input}")

])

agent = create_react_agent(llm, tools=[], prompt=prompt)

executor = AgentExecutor(agent=agent, tools=[], verbose=True)

executor.invoke({"input": "Create an urgent task to fix login issues"})

-

In practice, this system would integrate with:

- Task storage (memory layer)

- Tooling for updates and assignment

- Evaluation signals for reprioritization

Why prioritization matters

-

Without prioritization:

- Agents may waste resources on low-value tasks

- Critical deadlines may be missed

- Conflicting goals may cause indecision

- System behavior becomes unpredictable

-

With prioritization:

- Decision-making becomes structured and goal-aligned

- Resources are allocated efficiently

- Agents behave more intelligently and robustly

- Systems can scale to complex, multi-objective environments

Rule of thumb

- Use the prioritization pattern when an agent must autonomously manage multiple competing tasks or goals under constraints. It is especially critical in dynamic environments where conditions change and decisions must be made continuously.

Pattern Selection and Composition

Core Idea

-